From Slide Decks to Stopwatches: The Reality of Incident Response

At 2:07 a.m., cybersecurity strategy stops being a slide deck and becomes a stopwatch. An incident response retainer is not a prestige purchase or a panic tax. It is a decision about whether your team can contain damage fast when downtime is compounding by the hour.

Most organizations are stuck in a gray zone: decent tooling, but unclear decision rights. This guide helps you move toward operational clarity. We pressure-test retainer models against real incident economics and map what to keep in-house versus what to externalize for surge capacity.

Let’s run that math before the sirens do it for you.

Table of Contents

Before You Buy: What an IR Retainer Actually Solves

The core promise: response speed, not magic prevention

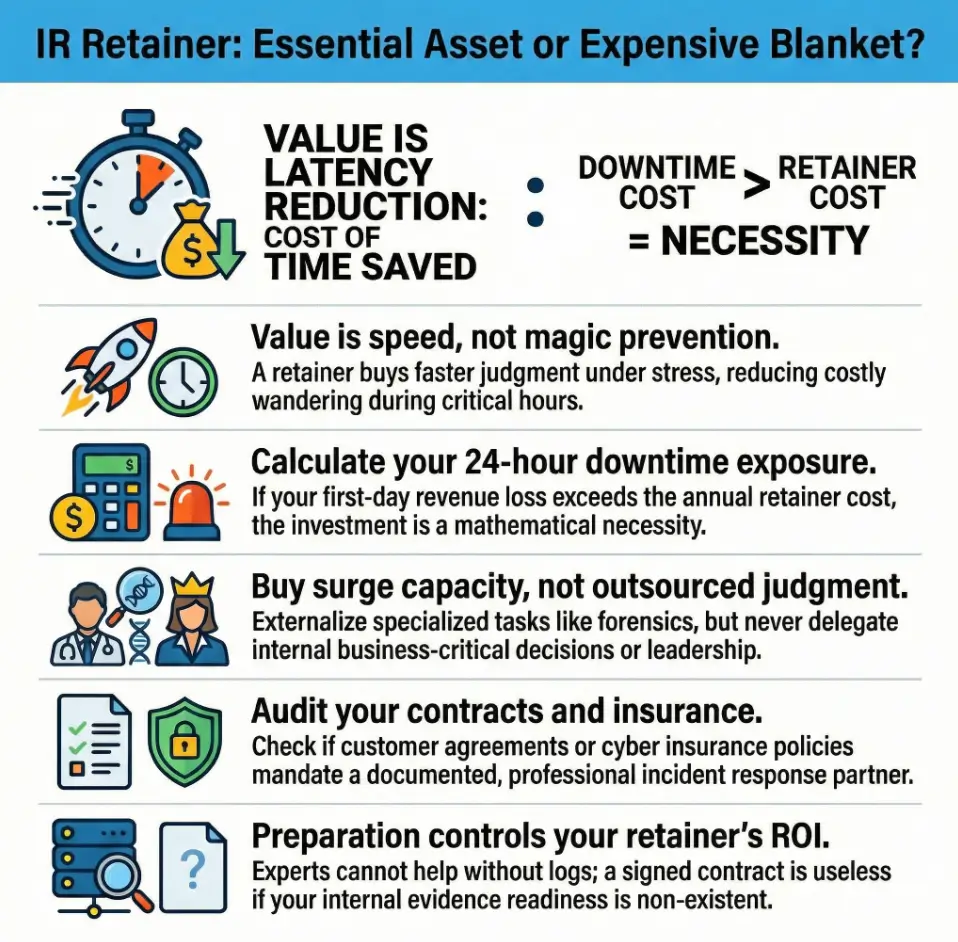

An incident response retainer is not a digital force field. It does not prevent ransomware by itself, and it does not replace security basics. Its real value is time compression. When something burns, the right people appear faster, ask better questions sooner, and reduce costly wandering in the first critical hours.

I once sat in on a breach bridge where the team had excellent tools and zero command structure. Sixty minutes disappeared debating who could authorize endpoint isolation. That hour cost more than many annual retainers. Not because the vendor was missing. Because decision design was missing.

What is usually included vs quietly excluded

Most retainers include some blend of triage support, containment guidance, forensics, and advisory coordination. Many also include readiness workshops, tabletop hours, and escalation pathways. Exclusions often hide in plain sight: after-hours multipliers, travel costs, specialty malware reverse engineering, cloud-specific deep forensics, and crisis communications outside scope.

Read contracts like weather forecasts. The storm path is in the fine print.

Here’s what no one tells you… most “coverage” depends on your prep work

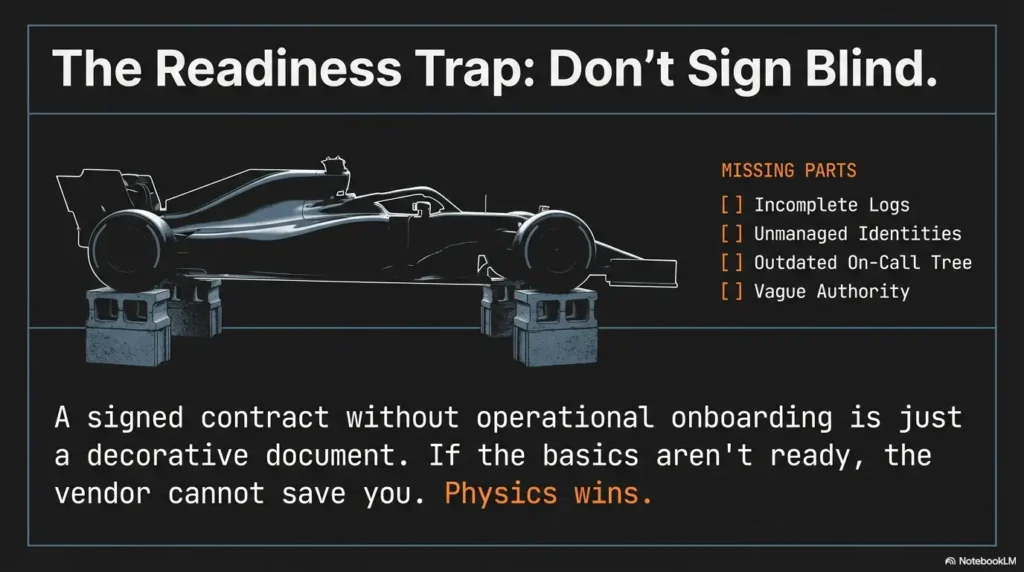

If your logs are incomplete, identities are unmanaged, and your on-call tree is outdated, a premium retainer feels like calling a race crew to a car with no wheels. They can still help, but physics wins. CISA guidance repeatedly emphasizes preparation, roles, and rehearsed communication as core incident response fundamentals. NIST’s incident response lifecycle also begins long before detection with planning and readiness.

- Speed matters most in first 4 to 8 hours.

- Scope exclusions decide surprise invoices.

- Preparation quality controls retainer ROI.

Apply in 60 seconds: Ask one vendor today: “What cannot be done in your base retainer during a declared incident?”

Fit Check First: Who This Is For / Not For

Best-fit profiles: regulated, 24/7 ops, lean internal SOC, high outage cost

If you process sensitive data, operate across time zones, or run critical systems where an hour of downtime hurts revenue, reputation, or safety, a retainer often makes practical sense. Healthcare, financial services, SaaS infrastructure, and logistics-heavy organizations tend to benefit early.

Poor-fit profiles: very low-risk footprint, mature in-house IR bench, limited digital dependency

If your digital attack surface is small, your team runs tested playbooks quarterly, and your incident leadership bench is deep, you may defer a retainer and invest in tighter controls, backup recovery speed, and periodic external assessments instead.

The “not now, maybe later” middle zone and what to improve first

Most companies live in the middle: not unprepared, not truly ready. That is where buying feels tempting and timing matters. In this zone, focus first on three levers: ownership clarity, evidence readiness, and decision authority. Then revisit retainer selection with fewer blind spots and better pricing leverage.

Incident Readiness Tier Map (Quick Visual)

Ad hoc response

No tested playbook

Basic tooling

Partial ownership

Defined playbooks

Quarterly exercises

Cross-functional drills

External coordination

Measured resilience

Board-level governance

Neutral next action: Place your org on one tier honestly before evaluating vendor proposals.

Question 1: How Expensive Is Your First 24 Hours of Downtime?

Revenue loss, operational stall, and customer trust impact

Incident economics are cruelly simple. If revenue stops, costs keep breathing. Customer support volume spikes. Executive attention diverts. Contract penalties may activate. Trust damage can outlive remediation by months.

One CFO told me, “I thought cyber risk was an IT budget line. Then one outage rewrote our quarter.” That sentence should be taped to every risk register.

A simple US-friendly downtime worksheet (hourly loss bands)

Use this quick model:

- Direct hourly revenue at risk: estimated blocked transactions or services.

- Operational drag: idle teams, overtime, contractor surge.

- Customer impact multiplier: refunds, churn, SLA credits.

Then calculate: (Direct + Operational + Customer multiplier) × likely incident hours.

Mini Calculator: First-24-Hour Exposure

Input A: Estimated revenue loss per hour

Input B: Estimated operational disruption per hour

Input C: Expected high-impact hours in first day

Output: (A + B) × C = rough first-day exposure

Neutral next action: Compare this number against one year of retainer cost, then decide with finance present.

Decision trigger: when delay cost exceeds annual retainer cost

If your modeled first-day exposure is larger than an annual retainer and your internal response depth is uneven, the math becomes less philosophical. You are not buying fear. You are buying latency reduction when minutes have payroll attached.

Question 2: Can Your Team Detect and Triage Without Vendor Help?

MTTD/MTTR reality test for small and mid-market teams

Many organizations can detect suspicious signals. Fewer can triage confidently at 3 a.m. Detection without decision is a dashboard hobby. Mean-time-to-detect and mean-time-to-respond are useful only if measured from first signal to containment action, not from first Slack message to eventual meeting.

Tooling depth vs analyst depth: where most teams overestimate readiness

Security stacks can look impressive and still underperform. The common gap is not sensor count. It is analyst availability, clear escalation, and executive decision rights. Tools are instruments. Humans are orchestra conductors. Without conductors, even violins sound like traffic.

Let’s be honest… alerts aren’t response if no one owns the playbook

In one tabletop, the SOC analyst had perfect logs and no authority to isolate endpoints. Legal had authority and no access to technical context. Leadership had urgency and no incident commander. Everyone worked hard. Progress crawled.

Show me the nerdy details

Measure detection and triage readiness with three timestamps: first malicious indicator seen, triage confidence established, and containment action approved. Track median and 90th percentile over last two exercises. If the 90th percentile exceeds your business tolerance window, external surge support is worth pricing now.

Question 3: Do Your Contracts, Insurance, or Regulations Push You Toward a Retainer?

Client security addendums, cyber insurance expectations, notification timelines

Retainer decisions are often pulled by external gravity. Enterprise customer contracts may require documented incident capabilities. Cyber insurers may prefer approved panel providers or evidence of formal response readiness. Notification clocks in legal frameworks do not pause while your team compares proposals.

Legal coordination and evidence handling requirements in the US context

In the US, counsel involvement, evidence integrity, and reporting discipline can shape downstream legal exposure. Chain-of-custody handling is not glamorous, but it is protective. SEC expectations for public company cyber disclosures and FTC enforcement themes have pushed incident governance from “IT issue” to executive accountability territory.

“Must have” signs from procurement, board, and compliance reviews

When procurement asks for named incident partners, when board risk committees ask response-time questions, or when compliance audits flag unresolved response governance, that is your signal. The question shifts from “Do we like this spend?” to “Can we defend not making it?”

- Check customer contracts for incident obligations.

- Review insurance policy language before renewal crunch.

- Align legal, security, and finance on notification reality.

Apply in 60 seconds: Pull one major customer contract and highlight every clause containing “incident,” “breach,” or “notification.”

Question 4: Will You Need Forensics, Legal, and PR Coordination Fast?

Why vendor ecosystem orchestration is the hidden value

The quiet superpower of a good retainer is orchestration. During a live incident, technical response is only one stream. You may simultaneously need legal counsel, cyber insurance notices, communications drafting, regulator posture, customer updates, and board briefings. Teams that already maintain a security testing strategy usually orchestrate these streams with less friction.

I have watched excellent engineers become accidental translators between counsel and executives while malware analysis was still underway. Not their fault. Wrong role at wrong moment.

Chain of custody, executive comms, and stakeholder messaging pressure

Evidence handling and communication sequencing matter because they prevent secondary damage. A premature statement can create legal risk. A delayed statement can create trust damage. A structured retainer can give you a choreography instead of improvised footwork on wet tiles.

Open loop: the one dependency most teams discover too late

Most teams discover too late that incident decisions depend on identity governance clarity. If privileged access maps are stale, containment choices become guesswork. Guesswork during breach response is expensive theater.

Question 5: Are You Buying Surge Capacity or Outsourcing Judgment?

What to keep in-house (decision rights, business context, approvals)

Keep business-critical decisions in-house: shutdown thresholds, customer communication posture, regulatory escalation triggers, and recovery priority across revenue functions. No vendor can feel your operational heartbeat better than your own leaders.

What to externalize (forensics depth, specialized malware response)

Externalize scarce, high-skill tasks: deep forensics, reverse engineering, large-scale log correlation under time pressure, and niche cloud artifacts. You are renting specialized muscle, not renting your steering wheel.

Avoiding the “we thought they would handle that” failure mode

Write explicit responsibility maps before signing. RACI clarity avoids finger-pointing under stress. I like a blunt exercise: each function answers “Who decides? Who executes? Who informs?” for 10 common incident actions. If more than two answers are fuzzy, pause procurement and fix governance first.

Decision Card: A vs B

When A (Surge Capacity): You have clear internal leadership but need rapid specialist horsepower.

When B (Outsourcing Judgment): You expect the vendor to make business trade-offs for you.

Time/Cost trade-off: A is usually healthier and cheaper long-term. B feels convenient until accountability questions arrive.

Neutral next action: Document 5 decisions your company will never delegate, then align vendor scope around them.

Question 6: Which Retainer Structure Matches Your Risk Profile?

Hours-bank vs subscription vs hybrid models

Three common models dominate:

- Hours-bank: prepaid hours, often flexible for incident and readiness support.

- Subscription: recurring fee for defined response and advisory tiers.

- Hybrid: base coverage plus variable surge components.

Early-stage teams often like hours-banks for budgeting clarity. High-availability organizations often prefer subscription or hybrid to reduce activation friction.

SLA clauses that matter under stress (response time, escalation path, geography)

Under stress, three clauses become your oxygen tank: initial response window, named escalation path, and geographic/legal fit of responder resources. If your operations span multiple regions, timezone and jurisdiction realities can make “fast” unexpectedly slow.

Cost predictability vs flexibility trade-off by company stage

Startups may value flexibility; larger firms may value predictability and governance posture. There is no universal best model, only best fit. The wrong structure can feel affordable in Q1 and painful in Q4 when real events test assumptions.

Show me the nerdy details

In proposal comparison, normalize all bids into three numbers: annual fixed cost, estimated variable cost at 1 incident, and estimated variable cost at 2 incidents. Then map those against downtime exposure scenarios. This reveals hidden volatility that headline pricing obscures.

Question 7: Are You Ready to Operationalize It Before the Incident?

Tabletop cadence, on-call contacts, and access prerequisites

A signed contract without operational onboarding is a decorative document. You need named contacts, role backups, communication channels, privileged access procedures, and tabletop cadence. Quarterly tabletop exercises are a reasonable rhythm for many teams; high-risk environments may run more frequent scenario drills.

Evidence readiness: logs, EDR visibility, identity controls, backups

Retainer outcomes depend on observability. If log retention is short, endpoint visibility is partial, identity controls are loose, or backups are untested, responders spend early hours reconstructing blind spots. That is avoidable friction.

Curiosity gap: why “signed but untested” retainers fail during real incidents

Because humans default to familiar pathways under stress. If no one practiced declaration criteria, call trees, and decision authority, the provider’s phone number becomes an artifact instead of an accelerant.

- Run at least one activation drill before year-end.

- Validate access and data visibility in advance.

- Rehearse escalation paths with executives.

Apply in 60 seconds: Schedule one 45-minute “retainer activation rehearsal” with legal, security, and IT ops this month.

Don’t Sign Blind: Common Mistakes That Waste Retainer Spend

Buying on logo prestige instead of incident fit

Brand reputation matters, but operational compatibility matters more. A perfect provider for a global bank may be clumsy for a midsize SaaS team with lean staffing and sharp uptime sensitivity.

Ignoring exclusions, overtime terms, and regional coverage limits

The biggest budget shocks rarely come from base fee. They come from conditional terms triggered at the worst time. Read overtime language like your future sleep depends on it. It does.

Treating the retainer as a substitute for internal incident ownership

No retainer can replace accountable leadership. Vendors can enhance execution, not inherit governance. If no one internally owns incident decision quality, spend leaks in every direction.

Quote-Prep List: Gather Before Comparing Vendors

- Last 12 months of incident-like events and response timelines

- Current log retention windows and visibility gaps

- On-call ownership map across IT, security, legal, comms

- Critical systems ranked by business recovery priority

- Contract/regulatory notification obligations by category

Neutral next action: Send this packet to all finalists so proposals are comparable.

Pricing Reality: What Changes Cost in the US Market

Typical pricing drivers: industry, complexity, response window, service scope

Costs shift with attack-surface complexity, compliance pressure, response-time commitments, and included service scope. A 24/7 rapid-response commitment with forensic depth and proactive readiness support prices differently than advisory-only structures. If you are still comparing defensive control stacks, this WAF vs RASP vs CSP for startups breakdown can clarify where preventive spend should sit versus response spend.

Hidden cost multipliers: travel, after-hours escalation, specialist add-ons

Hidden multipliers include holiday response, on-site deployment needs, specialist malware analysis, and external coordination requirements. Ask explicitly which services consume retainer hours and which trigger separate statements of work.

Mistake framing: what “cheap” often costs during an active breach

Cheap retainers can be expensive when escalation paths are vague, senior responders are unavailable, or scope walls appear mid-incident. Value lives where latency drops and decisions get sharper.

Short Story: The 4:11 a.m. Spreadsheet

A risk lead once told me about their “best bargain” retainer. It looked fantastic in procurement. Then came a weekend ransomware event. Their response window sounded fast on paper, but senior escalation required separate authorization, and forensic depth sat outside base scope. By the time the right specialists were engaged, 11 extra hours had vanished, customer tickets doubled, and leadership confidence cratered. Monday morning felt like a courtroom with coffee.

They switched providers, yes, but their biggest fix was internal. They mapped decision rights, upgraded logging visibility, and rehearsed declaration criteria with legal and operations. The second incident six months later still hurt, but the team moved with calm precision. Same people, different choreography. Their final lesson was simple: buy for worst-day performance, not best-case pricing.

Next Step: Run a 30-Minute ‘Need vs No-Need’ Decision Sprint

Score your org across 7 questions (1–5 scale each)

Use the seven questions above. Score each from 1 (low pressure/low need) to 5 (high pressure/high need). Keep it brutally practical, not aspirational.

Define threshold bands: no retainer, minimal retainer, full retainer

- 7–14: No immediate retainer. Strengthen readiness basics first.

- 15–24: Minimal retainer or targeted hours-bank with readiness package.

- 25–35: Full retainer with tested activation model and executive alignment.

Book one vendor scoping call with your top 3 decision gaps ready

Do not book five calls. Book one disciplined call and test quality fast. Bring your top three unresolved gaps: response-time expectations, scope exclusions, and governance interface.

- Score honestly with cross-functional inputs.

- Select model by risk-fit, not fashion.

- Test activation before contract celebration.

Apply in 60 seconds: Put a 30-minute decision sprint on next week’s calendar with security, legal, and finance.

FAQ

What is an incident response retainer and how does it work?

It is a pre-arranged agreement with a response provider for prioritized support during incidents, often combined with readiness services. You pay for access, defined service levels, and pre-scoped capabilities so you can activate quickly under pressure.

Do small businesses really need an IR retainer?

Some do, some do not. If downtime cost is meaningful, customer obligations are strict, and internal response depth is thin, even smaller organizations can benefit. If risk exposure is low and response maturity is strong, you may defer and improve in-house readiness first.

How much does an incident response retainer cost in the US?

Pricing varies by coverage model, response SLAs, scope, and complexity. Instead of chasing a single benchmark number, compare annual fixed spend, likely variable incident costs, and what is explicitly excluded. That gives a more honest budget picture.

Is an IR retainer required for cyber insurance?

Not universally. Many insurers do not mandate a specific retainer, but they may expect evidence of incident preparedness and may recommend or require panel processes in some circumstances. Check your policy wording and renewal discussions early.

Retainer vs MDR vs vCISO: which one comes first?

They solve different problems. MDR helps monitor and detect, vCISO supports governance and strategy, and an IR retainer supports high-pressure response execution. Sequence depends on your biggest current gap, but many teams pair MDR with a targeted IR retainer as readiness matures. To sharpen that sequencing, align choices with measurable outcomes from security metrics for founders.

What SLAs should I demand in an IR retainer contract?

Demand clarity on first-response timing, escalation path, responder seniority availability, communication cadence, and conditions that alter service level. Also confirm geography and after-hours handling to avoid surprises during real incidents.

Can we use retainer hours for tabletop exercises and readiness work?

Often yes, depending on contract design. Many organizations extract better value by using part of the retainer for drills, runbook refinement, and activation testing before incidents happen.

What happens if we exceed included hours during a major incident?

Additional billing terms usually apply, often at higher rates or with separate approvals. Clarify overflow pricing, approval flows, and priority continuity now, not during a breach bridge.

How fast should a provider respond after we declare an incident?

It depends on your risk profile and business tolerance. For higher-impact environments, faster response commitments are worth negotiating. More important than headline speed is reliable escalation to the right expertise.

How do we compare two IR retainers objectively?

Normalize both into comparable categories: fixed annual cost, variable incident cost, response timelines, exclusions, staffing model, and readiness support. Then test each provider against one realistic scenario from your environment. If your scoping process still feels fuzzy, start with a structured vendor security questionnaire to reduce blind spots before final negotiation.

Conclusion: Decide Before the Sirens

Remember the opening scene at 2:07 a.m.? The goal is not to predict every fire. The goal is to avoid negotiating your fire department while smoke is already in the hallway. A good incident response retainer can be a force multiplier. A poorly matched one is expensive décor.

Here is your honest next step: in the next 15 minutes, score the seven questions, identify your top three response gaps, and schedule one scoped vendor conversation with legal and finance in the room. If your first-24-hour exposure is high and your internal triage depth is shaky, delay is usually the most expensive option on the table.

Last reviewed: 2026-02-14.