Stop Losing Deals to the Security Questionnaire Treadmill

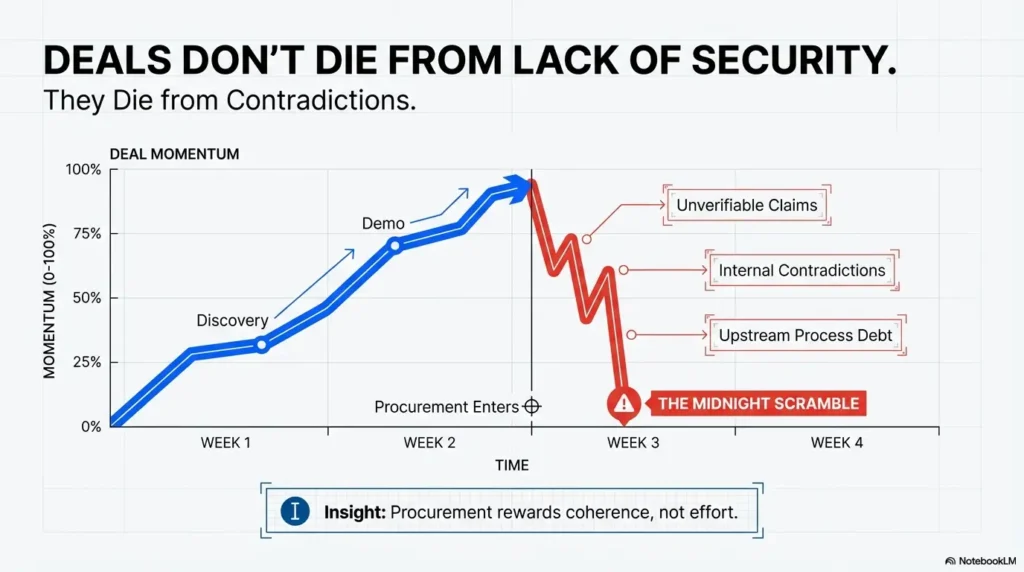

The deal doesn’t usually die in the pentest report. It dies in the questionnaire thread where three answers contradict each other and procurement quietly loses confidence. In a startup vendor security review, that’s the moment pipeline momentum turns into midnight screenshot archaeology.

The pain isn’t lack of effort. It’s fragmented ownership, overbroad claims, stale response language, and evidence that looks fine internally but fails buyer scrutiny. By the time legal joins, every vague sentence becomes a week of clarification.

Keep winging it, and you lose more than time: cycle time expands, champions burn political capital, and close dates slide into the next quarter.

This guide gives you a practical operating model to answer faster with less thrash, using scoped control language, a reusable response library, and audit-durable evidence mapped to common reference points such as SOC 2, NIST publications, and SIG Lite. You’ll learn how to reduce escalations without pretending your program is more mature than it is.

The Shift

- 📉 Less improvisation.

- 🔄 More repeatability.

- ☕ Fewer heroic Fridays.

- ✅ More predictable approvals.

Let’s break down the 15 traps—and the fixes that actually hold up under enterprise due diligence.

- Most delays come from contradictions, not missing certifications.

- Procurement escalates when wording sounds broad and unverifiable.

- A single response library cuts rework across every deal cycle.

Apply in 60 seconds: Pick one recurring question and attach one owner, one proof file, and one last-reviewed date.

First 10 Minutes: Why Deals Stall Before Security Even Starts

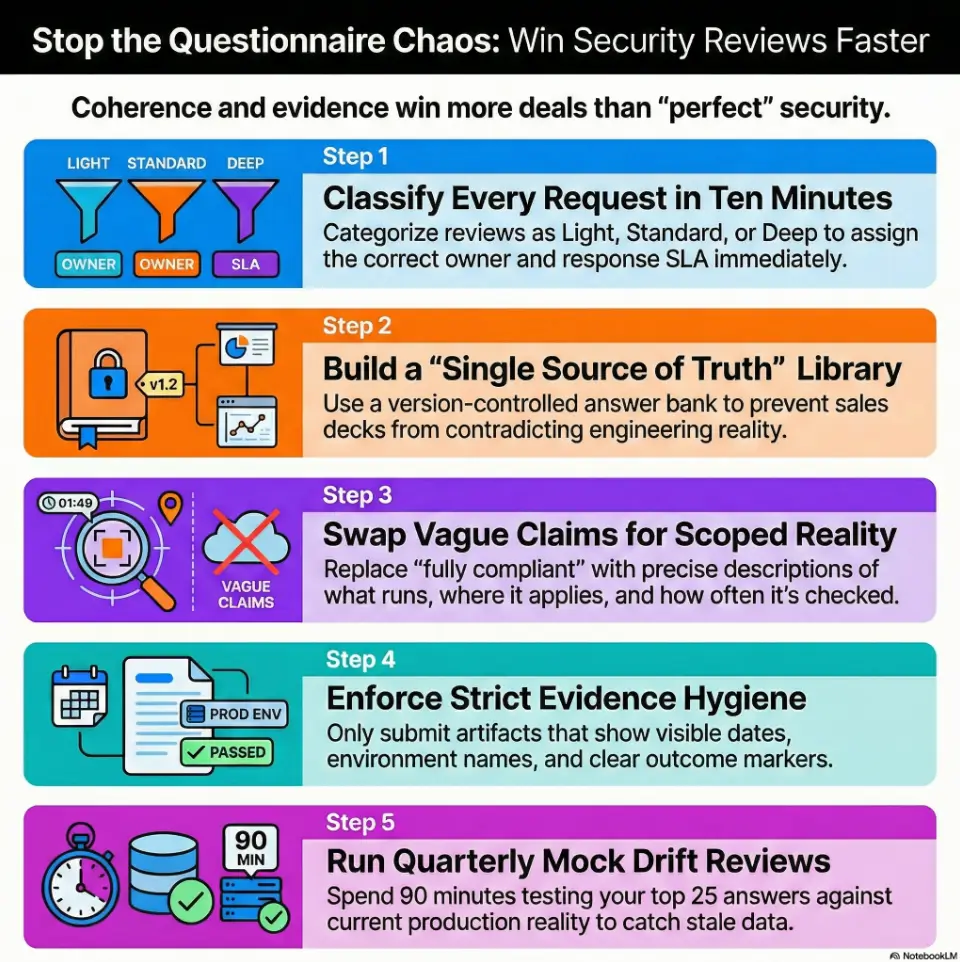

Intake triage: classify “light,” “standard,” and “deep” reviews on day one

If your team treats every questionnaire like a surprise exam, you’ll bleed time before page two. The first ten minutes should classify the review type and expected depth. A “light” review may be a short spreadsheet and one policy request. “Standard” often includes controls across access, logging, incident response, and vendor management.

“Deep” usually means legal/security/procurement will all touch the thread, with follow-up rounds and evidence validation. I learned this the hard way in a seed-stage sales cycle: we spent three days polishing answers for a customer that only needed six controls confirmed. Meanwhile, a real deep review sat untouched and slipped by nine business days.
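The day-one classification above can be sketched as a small function. This is a minimal illustration, not a standard: the `IntakeRequest` fields and the 30-question cutoff are assumptions chosen for the example, and your own thresholds will differ.

```python
from dataclasses import dataclass


@dataclass
class IntakeRequest:
    """Hypothetical intake record; field names are illustrative."""
    question_count: int        # rows in the questionnaire
    evidence_validation: bool  # buyer will verify artifacts, not just read answers
    stakeholder_teams: int     # buyer-side teams on the thread (legal, security, procurement)


def classify_review(req: IntakeRequest, light_max_questions: int = 30) -> str:
    """Return 'light', 'standard', or 'deep'. The thresholds are assumptions."""
    # Evidence validation or three buyer teams on the thread signals a deep review.
    if req.evidence_validation or req.stakeholder_teams >= 3:
        return "deep"
    # A short questionnaire with no validation round is treated as light.
    if req.question_count <= light_max_questions:
        return "light"
    return "standard"


print(classify_review(IntakeRequest(question_count=12, evidence_validation=False, stakeholder_teams=1)))
```

Running the classifier before anyone starts drafting is the point: the seed-stage mistake described above came from polishing answers before knowing the review depth.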

Buyer intent decoding: compliance checkbox vs true risk investigation

Some buyers need documentation for internal gates. Others are actively scoring risk with decision impact. You can usually tell by the question style: checkbox buyers ask broad yes/no items; risk-driven buyers ask about scope boundaries, operating cadence, and proof that controls run in production. A subtle cue: when they ask “how often” and “who approves,” they’re testing operational maturity. This is where calm, specific language wins. Not “we enforce least privilege.” Better: “Privileged access is reviewed quarterly with sign-off from Security and the Engineering manager, and revocations are tracked in ticketing.”

Let’s be honest… “urgent” usually means “we forgot security until legal got involved”

Urgent requests are often process debt upstream, not your team failing downstream. Treat urgency as a workflow signal: freeze ad-hoc debate, assign a single response owner, set response SLAs by review type, and run one daily checkpoint. Speed comes from less thrash, not heroics.

Eligibility checklist: Are you ready for intake triage?

- Do you classify incoming reviews into light/standard/deep? Yes/No

- Do you assign one owner per questionnaire? Yes/No

- Do you set a target turnaround by review type? Yes/No

Neutral next action: if you answered “No” to any item, write a one-page intake SOP before the next deal call.

Trap #1–#3: Copy-Paste Answers That Contradict Each Other

Trap 1: Different teams, different truths (sales deck vs questionnaire)

When Sales says “enterprise-grade controls” and Engineering says “in-progress hardening,” the buyer sees risk, not nuance. Contradictions don’t just trigger follow-ups; they reduce trust in everything else you send. One team’s enthusiastic phrasing can create a week of cleanup for another team. The fix is not policing tone. The fix is canonical language with approved scope and caveats.

Trap 2: Legacy answers that no longer match production reality

Old answers are sticky. They live in shared docs, slide decks, and copied emails. Six months later, the architecture has changed, but the answer bank hasn’t. I once found a sentence that mentioned a retired auth flow still pasted into active questionnaires. It looked small. It triggered five follow-up questions and one security call that no one had calendar space for.

Trap 3: “We use industry best practices” with zero substantiation

Buyers don’t reject this because it sounds arrogant. They reject it because it’s unverifiable. “Best practices” is a red-flag phrase when it floats without an operating detail. Replace vague claims with what runs, where it applies, and how often it is checked.

Fix: one canonical answer bank with version control and owner sign-off

Build a response library that includes: answer text, system scope, owner, last-reviewed date, linked evidence, and known exceptions. Keep it brutally short and operational. One paragraph per control domain beats long policy prose. Add a “do-not-overclaim” note directly beneath risky answer templates. You’ll reduce contradictions immediately. If you need a fast starting point for structure and ownership boundaries, borrow the layout of a penetration test SOW template and adapt it to govern your questionnaire response packet.

Show me the nerdy details

Use semantic versioning for your answer bank (v1.2.0 style), assign domain owners (IAM, IR, vendor risk, appsec), and set automatic review reminders every 90 days. Track delta notes: what changed, who approved, and what evidence was updated.
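One way to picture an answer bank entry is as a record with the fields listed above plus a staleness check. The sketch below is an assumption about shape, not a prescribed schema: `AnswerEntry`, `stale_entries`, and the sample values are hypothetical names for illustration, and the 90-day interval comes from the reminder cadence mentioned above.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # matches the 90-day reminder cadence


@dataclass
class AnswerEntry:
    """Hypothetical answer bank record; adapt field names to your own tooling."""
    question: str
    answer_text: str
    system_scope: str
    owner: str
    last_reviewed: date
    evidence_links: list[str] = field(default_factory=list)
    known_exceptions: list[str] = field(default_factory=list)
    version: str = "1.0.0"  # semantic version of this answer

    def is_stale(self, today: date) -> bool:
        # Stale means more than 90 days have passed since the last review.
        return today - self.last_reviewed > REVIEW_INTERVAL


def stale_entries(bank: list[AnswerEntry], today: date) -> list[AnswerEntry]:
    """Return the entries that are overdue for owner review."""
    return [entry for entry in bank if entry.is_stale(today)]


bank = [
    AnswerEntry("Is privileged access reviewed?", "Reviewed quarterly.", "production", "iam-owner", date(2025, 1, 10)),
    AnswerEntry("Is there an IR plan?", "Yes, tested by tabletop.", "production", "ir-owner", date(2025, 5, 1)),
]
for entry in stale_entries(bank, today=date(2025, 6, 1)):
    print(entry.owner, entry.question)
```

A scheduled job that runs `stale_entries` and notifies each owner is usually enough; the value is in the visible last-reviewed date, not in the tooling.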

Trap #4–#6: Over-Claiming Controls You Can’t Prove Under Audit

Trap 4: Saying “encrypted everywhere” without key-management detail

“Encrypted everywhere” feels reassuring, but savvy reviewers immediately ask: at rest where, in transit where, with which key ownership model, and for which data classes? Over-broad phrasing can unintentionally imply customer-managed keys, HSM-backed workflows, or field-level controls you may not operate. Better to be precise and narrower than broad and brittle.

Trap 5: Claiming 24/7 monitoring without staffed response model

Many startups have 24/7 alert generation, not 24/7 human response. Those are not the same operational promise. If a buyer asks about monitoring, distinguish detection coverage from response coverage. “Alerts are continuous; human response is on-call with documented escalation” is clear and defensible.

Trap 6: “Least privilege” with no review cadence evidence

Least privilege isn’t a one-time architecture decision; it’s a recurring governance behavior. Without periodic review records, buyers assume privilege creep. Show your cadence, approval model, and revocation proof. Simple evidence beats polished wording every time.

Open loop: the single sentence that triggers the most follow-up questions

That sentence is usually “fully compliant.” It sounds final, but every reviewer knows controls are dynamic. Use “implemented for in-scope systems” + “exceptions documented” + “reviewed quarterly.” That phrasing invites less skepticism and fewer escalations.

Decision card: Broad claim vs scoped claim

- Choose a broad claim when marketing copy needs simplicity (high follow-up risk).

- Choose a scoped claim when questionnaire answers require audit durability (lower risk, faster approval).

Neutral next action: rewrite your top five risky claims into scoped operational statements today.
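A quick way to find candidates for that rewrite is to scan draft answers for the over-broad phrases called out in this guide. The phrase list and the `find_overbroad_claims` helper below are illustrative assumptions; a simple substring match will miss paraphrases, so treat hits as prompts for review rather than a verdict.

```python
# Phrases this guide flags as follow-up magnets; extend with your own.
RISKY_PHRASES = (
    "fully compliant",
    "best practices",
    "encrypted everywhere",
    "24/7 monitoring",
    "enterprise-grade",
)


def find_overbroad_claims(answer: str) -> list[str]:
    """Return the risky phrases that appear in an answer (case-insensitive)."""
    lowered = answer.lower()
    return [phrase for phrase in RISKY_PHRASES if phrase in lowered]


draft = "We are fully compliant and follow industry best practices."
print(find_overbroad_claims(draft))
```

Each hit should be replaced with a scoped statement: what runs, where it applies, and how often it is checked.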

Trap #7–#9: Framework Confusion (SOC 2, ISO, NIST) That Slows Everything

Trap 7: Treating framework names as proof instead of mapped controls

Naming a framework is not the same as answering a buyer’s specific control question. SOC 2, ISO 27001, and NIST publications all help, but buyers still need control-level clarity. Think of framework names as map legends, not the map itself. If your team relies on labels alone, follow-ups multiply.

Trap 8: Sharing certificates/reports without control crosswalks

A report without a crosswalk forces the buyer to do translation work. They won’t. They’ll ask you to do it, often under tight deadlines. Build a reusable crosswalk once: buyer domain → your control statement → evidence artifact. That single artifact can save hours per review round.

Trap 9: No “equivalency” explanation for buyer-specific requirements

Every enterprise has custom language. If your control differs in mechanism but matches intent, explain equivalency clearly. Avoid defensive wording. Show intent coverage, frequency, and ownership. In one review, a buyer requested a control exactly as written in their policy manual; our team had a different implementation that was objectively strong, but we failed to explain equivalency early. Two weeks disappeared in translation.

Practical move: build a control mapping matrix once, reuse forever

Include columns for buyer question category, internal control ID, owner, evidence path, and refresh cadence. Add a confidence indicator (green/yellow/red) for response readiness. This turns every new questionnaire into assembly instead of improvisation. Teams planning evidence-heavy programs can also benchmark effort with a SOC 2 budget calculator for founders before committing headcount and tooling.
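The matrix columns described above can be sketched as a small data structure. Everything named here is hypothetical: the `MappingRow` type, the control IDs, and the evidence paths are placeholders for illustration, not a recommended numbering scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MappingRow:
    """One row of a hypothetical control mapping matrix."""
    buyer_category: str    # the buyer's wording for the domain
    control_id: str        # your internal control identifier
    owner: str
    evidence_path: str
    refresh_days: int      # how often the evidence is refreshed
    readiness: str         # "green", "yellow", or "red"
    equivalency_note: str = ""


def lookup(matrix: list[MappingRow], buyer_category: str) -> list[MappingRow]:
    """Find the internal controls mapped to a buyer question category."""
    wanted = buyer_category.lower()
    return [row for row in matrix if row.buyer_category.lower() == wanted]


def not_ready(matrix: list[MappingRow]) -> list[str]:
    """List control IDs whose response readiness is not green."""
    return [row.control_id for row in matrix if row.readiness != "green"]


matrix = [
    MappingRow("Access Control", "AC-01", "iam-owner", "evidence/access-review-q1.pdf", 90, "green"),
    MappingRow("Incident Response", "IR-02", "ir-owner", "evidence/tabletop-summary.pdf", 180, "yellow",
               equivalency_note="Tabletop exercise covers the intent of the buyer's drill requirement."),
]
print([row.control_id for row in lookup(matrix, "access control")])
print(not_ready(matrix))
```

A spreadsheet with the same columns works just as well; the structure matters more than the storage.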

- Translate buyer language into your control language.

- Attach evidence where each mapped claim is verifiable.

- Document equivalency for non-identical but sufficient controls.

Apply in 60 seconds: Add one “equivalency note” column to your current questionnaire tracker.

Trap #10–#12: Evidence Hygiene Fails That Break Buyer Confidence

Trap 10: Screenshots with missing date, scope, or system name

A screenshot without context is just a picture. Buyers need who/what/when. Include visible date, environment name, and control scope in every evidence capture. If redactions are necessary, label what was removed and why. Unlabeled redactions can look like concealment even when harmless. If your team keeps tripping on proof formatting, standardize with a screenshot naming pattern for security evidence so every artifact is sortable and review-ready.

Trap 11: Policies uploaded without approval date or owner

Undated policies read like shelfware. Mature teams show approval history and accountable ownership. If you don’t have full policy governance yet, start simple: owner, approved date, next review date. That minimal metadata already improves trust.

Trap 12: Logs and tickets that prove activity—but not effectiveness

Activity is not outcome. A ticket shows something happened; it does not prove the control was effective. Pair activity evidence with outcome markers: closure quality checks, review sign-offs, or measured response times. One of my favorite internal jokes is “we have 400 tickets and no story.” Buyers feel the same. Give them the story.

Here’s what no one tells you… evidence quality beats evidence volume

Ten precise artifacts beat fifty noisy attachments. Curate evidence like a courtroom exhibit: relevant, dated, scoped, and easy to verify.

Evidence prep list before submitting a questionnaire

- Control owner listed?

- Approval or review date visible?

- System/environment scope visible?

- Redaction labels included?

- Outcome indicator attached?

Neutral next action: run this five-point check on your next three artifacts.
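The five-point check can be expressed as a function that returns whatever an artifact is missing. The `EvidenceArtifact` fields are hypothetical and simply mirror the checklist above; this is a sketch of the idea, not a required format.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EvidenceArtifact:
    """Hypothetical metadata for one evidence file."""
    name: str
    control_owner: Optional[str] = None
    review_date: Optional[date] = None
    scope: Optional[str] = None
    has_redactions: bool = False
    redactions_labeled: bool = False
    outcome_indicator: Optional[str] = None  # e.g. a sign-off or measured response time


def missing_checks(artifact: EvidenceArtifact) -> list[str]:
    """Return the checklist items this artifact fails, in checklist order."""
    gaps = []
    if not artifact.control_owner:
        gaps.append("control owner")
    if artifact.review_date is None:
        gaps.append("approval or review date")
    if not artifact.scope:
        gaps.append("system/environment scope")
    # Redaction labels only matter when something was actually redacted.
    if artifact.has_redactions and not artifact.redactions_labeled:
        gaps.append("redaction labels")
    if not artifact.outcome_indicator:
        gaps.append("outcome indicator")
    return gaps


shot = EvidenceArtifact("access-review.png", control_owner="iam-owner", has_redactions=True)
print(missing_checks(shot))
```

An empty list means the artifact is ready to attach; anything else tells the owner exactly what to add before submission.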

Trap #13–#15: Process Gaps in Incident, Vendor, and Access Governance

Trap 13: Incident response promises without tested tabletop records

Most teams can describe incident response. Fewer can prove they rehearsed it. A tabletop summary with date, scenario, participants, findings, and improvements is powerful. It signals that your plan can survive stress. Without that, incident response language can feel theoretical.

Trap 14: Third-party risk claims without subcontractor visibility

Buyers increasingly ask where subprocessors fit into your risk model. If your answer is “we rely on trusted vendors,” expect immediate follow-ups. Keep a maintained subprocessor list, review cadence, and minimum security due diligence criteria. You don’t need a giant program to start; you need visible accountability.

Trap 15: Access offboarding that depends on “manual reminders”

Manual reminders fail when calendars get crowded. Offboarding should be event-driven with clear ownership. If full automation is not possible yet, enforce a same-day offboarding checklist with evidence of completion. Review exceptions weekly. Predictability beats perfection.

Open loop: the governance question procurement asks right before signature

It’s usually some version of: “Who is accountable if this control drifts next quarter?” Governance is not policy pages; it’s named ownership plus review cadence. Nail this answer and last-mile friction drops dramatically.

Show me the nerdy details

Track three governance metrics monthly: control review completion rate, overdue exception count, and mean closure time for governance findings. Keep thresholds simple and visible to leadership. Many teams pair this with a founder-facing dashboard of security metrics that actually drive decisions.
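The three metrics are simple enough to compute from whatever tracker you already use. The function names and input shapes below are assumptions for illustration; the sketch only shows the arithmetic behind each number.

```python
from datetime import date
from statistics import mean


def review_completion_rate(completed: int, scheduled: int) -> float:
    """Share of scheduled control reviews completed this month."""
    return completed / scheduled if scheduled else 0.0


def overdue_exception_count(open_exception_due_dates: list[date], today: date) -> int:
    """Number of open exceptions whose due date has already passed."""
    return sum(1 for due in open_exception_due_dates if due < today)


def mean_closure_days(findings: list[tuple[date, date]]) -> float:
    """Average days from opened to closed across closed governance findings."""
    if not findings:
        return 0.0
    return float(mean((closed - opened).days for opened, closed in findings))


today = date(2025, 6, 1)
print(review_completion_rate(completed=18, scheduled=20))
print(overdue_exception_count([date(2025, 5, 1), date(2025, 7, 1)], today))
print(mean_closure_days([(date(2025, 1, 1), date(2025, 1, 11)),
                         (date(2025, 2, 1), date(2025, 2, 21))]))
```

Thresholds for each metric are a leadership decision; the code deliberately leaves them out.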

Who This Is For / Not For

For: Seed-to-Series C SaaS teams selling into mid-market/enterprise

If your team is growing fast and deals increasingly involve procurement and security gates, this playbook is for you. It fits organizations where one person may wear multiple hats across security, platform, and compliance.

For: Founders, security leads, GRC owners, solution engineers

Anyone who touches questionnaire flow, evidence prep, or buyer Q&A can use this framework. It is especially useful when your security function is lean but buyer expectations are enterprise-level.

Not for: Teams needing jurisdiction-specific legal determinations

This guide helps with process design and response quality. It is not legal advice. Contractual obligations and jurisdiction-specific interpretations should go through qualified legal counsel. If you are clarifying contractual boundaries around test scope and downstream risk, review a practical explainer on pentest limitation-of-liability language.

Not for: Organizations requiring immediate forensic/incident counsel

If you are handling an active breach or legal hold scenario, switch to incident counsel and forensic responders first, then return to process optimization later.

Coverage tier map for questionnaire readiness

- Tier 1: Ad-hoc answers, no owner model.

- Tier 2: Basic answer bank, uneven evidence quality.

- Tier 3: Owner + review dates + mapped artifacts.

- Tier 4: Crosswalked controls + governance cadence.

- Tier 5: Reusable packet, low follow-up, predictable cycle time.

Neutral next action: identify your current tier and one upgrade step for this quarter.

Common Mistakes Startups Repeat (and How to Stop Repeating Them)

Mistake: answering each questionnaire from scratch every time

This feels thorough, but it burns hours and introduces inconsistency. Rebuild less; refine more. A structured library plus lightweight review beats endless reinvention.

Mistake: letting only security answer without legal/product context

Security controls intersect with contracts, data handling promises, and product behavior. Single-team answers can unintentionally overcommit. Use a three-lens check: security feasibility, legal language fit, product reality.

Mistake: ignoring “data flow” questions until late-stage review

Data flow ambiguity triggers deep review late in the process. Keep a plain-language data flow narrative ready: collection, storage, processing, retention, deletion. No diagram artistry required; clarity wins.

Mistake: no redline policy for unacceptable contract-security terms

Without redline boundaries, negotiations drift. Define non-negotiables upfront (for example, liability asymmetry, unlimited audit access, or impossible response obligations) and route exceptions through named approvers.

Short Story: The Thursday That Almost Killed the Quarter

A small SaaS team I advised had a strong product and a warm champion. Everything looked fine—until security review landed at 4:40 PM on a Thursday. Sales copied old responses, Engineering patched two lines, Legal joined late, and by Friday afternoon the buyer flagged contradictions on encryption scope, incident timelines, and subcontractor disclosure.

Nobody lied. Everyone was busy. But the packet looked stitched together in panic. Over the weekend, the team rebuilt the response set from scratch: one owner per domain, one canonical answer per recurring question, one artifact per claim. On Monday they resubmitted a shorter packet with clearer scope notes. Follow-ups dropped by more than half. The deal didn’t close instantly, but trust recovered. That week taught a blunt lesson: procurement doesn’t reward effort. It rewards coherence.

- Set boundaries before urgency arrives.

- Answer with cross-functional reality, not single-team optimism.

- Translate data flow clearly before buyers ask twice.

Apply in 60 seconds: Add Legal and Product reviewers to your questionnaire handoff checklist.

Build the “Answer Once, Reuse 50 Times” System

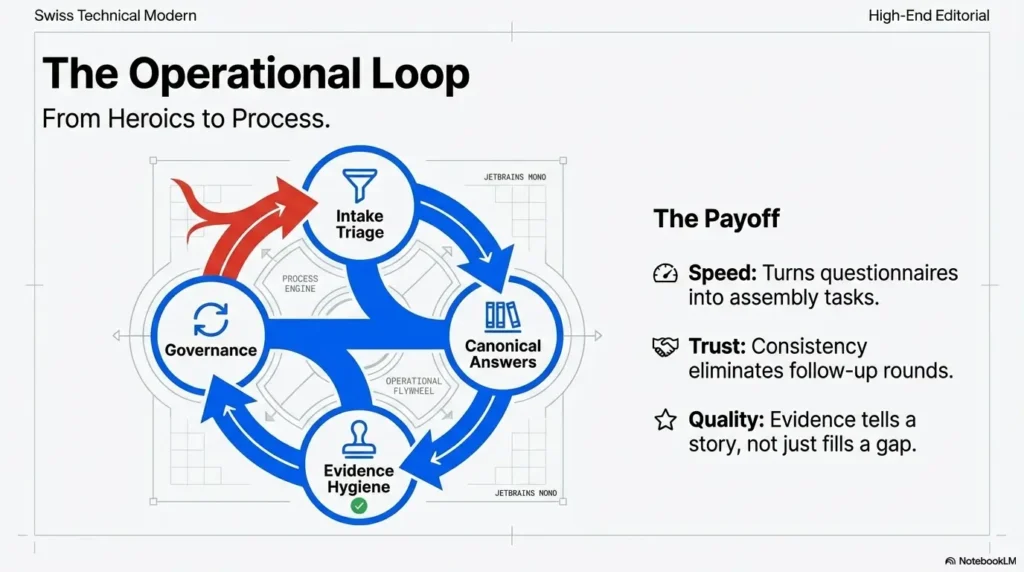

Step 1: Create a source-of-truth security response library

Keep one shared repository with controlled edit rights. For each canonical answer, include control scope, environment notes, owner, and approved fallback wording for “in-progress” controls. This reduces improvisation under deadline pressure.

Step 2: Tie each answer to evidence, owner, and review date

Answers without evidence become conversations. Answers with evidence become decisions. Add a simple metadata line under each response template: owner, evidence link, last reviewed, next review. If closure speed is slipping, codify expectations with a vulnerability remediation SLA framework so “in progress” has enforceable timing.

Step 3: Pre-map to common buyer control domains

Map once to common domains like IAM, encryption, logging, vulnerability management, incident response, business continuity, and vendor risk. This lets your team assemble responses quickly while preserving consistency.

Step 4: Run quarterly mock questionnaires for drift detection

Drift is inevitable: systems change, teams change, language drifts. A mock review every quarter catches stale answers before buyers do. Keep it lightweight—90 minutes, top 25 recurring questions, fix and publish updates by end of week.

Mini calculator: Questionnaire cycle-time savings

Inputs: questionnaires per month, average hours per questionnaire today, and target reduction %.

Example: 6 questionnaires × 7 hours × 30% reduction = 12.6 hours saved per month.
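The same arithmetic as a small function, in case you want to try your own inputs. The function name and parameter names are illustrative; the formula is the one in the example above.

```python
def hours_saved_per_month(monthly_questionnaires: int,
                          avg_hours_each: float,
                          target_reduction_pct: float) -> float:
    """Estimated hours recovered per month at a given percentage reduction."""
    return monthly_questionnaires * avg_hours_each * target_reduction_pct / 100


print(hours_saved_per_month(6, 7, 30))  # prints: 12.6
```

Multiply the result by three for a quarterly estimate, which is the figure the next action asks you to allocate.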

Neutral next action: estimate your next-quarter time recovery and assign where it goes (pipeline, hardening, or hiring).

Infographic: Startup Vendor Security Review Operating Loop

- Light / Standard / Deep classification + owner assignment in 10 minutes.

- Use scoped language, approved caveats, and versioned templates.

- Every claim has dated, scoped artifacts with clear ownership.

- Quarterly mock review to catch drift and refresh the packet.

Outcome: fewer contradictions, fewer escalations, faster procurement trust.

Show me the nerdy details

Common entities to align against in enterprise reviews include AICPA SOC reporting concepts, NIST assessment practices, and Shared Assessments SIG-based questionnaire structures. Use them as translation anchors, not as substitute proof.

FAQ

What do enterprise buyers actually look for in a vendor security questionnaire?

They look for consistency, scope clarity, and proof. Technical strength matters, but contradictions and vague claims often create more friction than missing advanced controls.

How long should a startup take to complete a standard security questionnaire?

It varies by complexity, but teams with a maintained response library and mapped evidence usually move materially faster than teams starting from scratch each time. The biggest driver is process maturity, not headcount.

Is SOC 2 enough to pass vendor security review?

SOC 2 helps, but it is rarely “enough” by itself. Buyers still ask product- and contract-specific questions, request clarifications, and expect control-to-evidence mapping.

What’s the difference between SIG Lite and a full questionnaire process?

SIG Lite is typically shorter and used for lower-risk or earlier-stage due diligence. Full questionnaires go deeper on control operation, governance, and evidence quality.

Can early-stage startups pass security review without a dedicated CISO?

Yes. Many do with clear ownership, disciplined documentation, and cross-functional collaboration across engineering, legal, and operations. Role clarity often matters more than title.

What evidence should be attached to reduce follow-up questions?

Attach artifacts that are dated, scoped, and attributable: policy metadata, access review records, incident exercise summaries, and control-operation evidence tied directly to claims in your answers.

How do we answer if a control is “in progress”?

Be direct. State current status, interim safeguards, target completion window, and accountable owner. Honest maturity signals are usually better received than over-claims.

Should legal and security respond together to questionnaire items?

For many items, yes. Security validates technical claims while legal ensures contractual language does not create unmanaged obligations.

What are red flags that make procurement escalate security review?

Common red flags include contradictory answers, broad unscoped claims, undated evidence, and unclear ownership for ongoing governance.

How often should we refresh our questionnaire response library?

Quarterly is a practical baseline, with interim updates whenever core architecture, vendors, or control ownership changes.

Next Step: One Concrete Action to Take This Week

Choose your top 25 repeated questions, map each to one owner + one proof artifact + one last-reviewed date, and publish a shared response packet by Friday.

This is where we close the loop from the opening moment—the midnight screenshot scramble. You don’t need a perfect program this week. You need a reliable operating rhythm. Start with the top 25 recurring questions, not 200. Give each answer one accountable owner, one strongest artifact, and one review date. Keep language scoped. Mark in-progress controls honestly. Then publish a shared packet and run one short rehearsal with Sales before the next live deal. For consistency in how you package findings and supporting artifacts, many teams borrow sections from a practical pentest report template and trim it to questionnaire-ready evidence blocks.

If you have fifteen minutes right now, do this: pick one high-friction domain (usually access control or incident response), replace vague claims with scoped statements, and attach evidence that a buyer can verify in under two minutes. That single step often cuts the next round of follow-ups immediately.

- Scope your claims before procurement scopes them for you.

- Map answers once, then reuse with discipline.

- Use governance cadence to keep trust from drifting between deals.

Apply in 60 seconds: Schedule a 30-minute “Top 25 Response Library” kickoff on your calendar today.

Last reviewed: 2026-02.