Beyond the Noise: Mastering a Decisive Workflow for Kioptrix and Beyond

Most Kioptrix Level sessions do not fall apart because the box is too advanced. They fall apart around minute 17, when clean enumeration quietly mutates into tab-hoarding, clue-chasing, and the kind of busy terminal activity that looks productive from a distance.

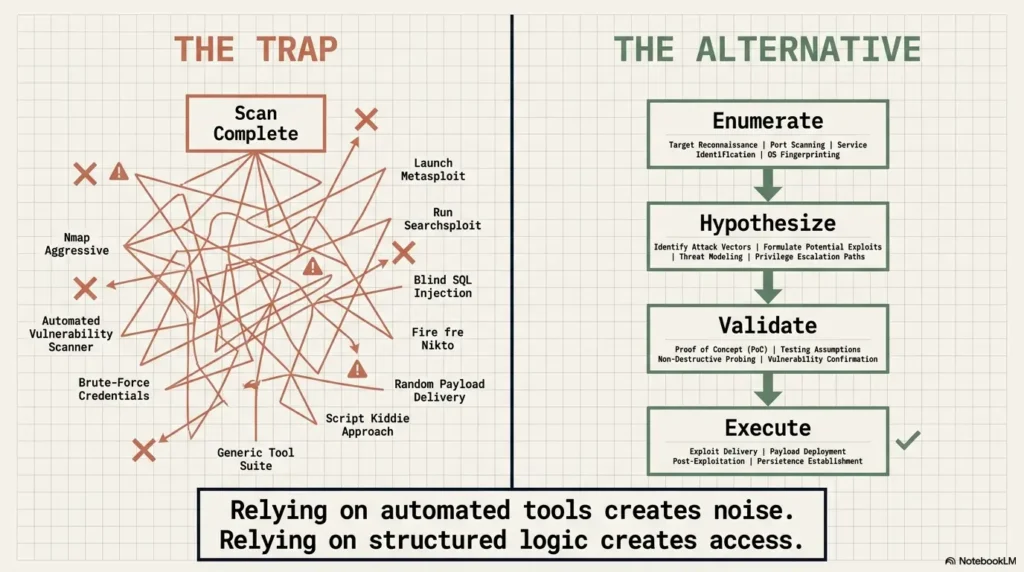

The real problem for beginners and career-pivoters isn’t a lack of exploits or talent. It’s the absence of a decision process. Without a way to rank evidence and test paths on purpose, your lab session quickly turns into static. If you keep guessing, you aren’t just losing time. You’re training yourself to confuse motion with progress.

This approach replaces “trying more things” with a disciplined workflow: Ranked Clues, Explicit Testing, and Visible Confidence Shifts.

By focusing on enumeration, hypothesis testing, and decision logging, you make vulnerable machines legible. The payoff? Clearer reasoning, better documentation, and a much stronger story to tell in interviews. Because a calmer process often beats a louder toolkit.


Fast Answer: Kioptrix Level feels harder when you study without a decision process because the lab stops feeling like a sequence and starts feeling like noise. The problem is often not a lack of tools or intelligence. It is the absence of a repeatable way to choose what to test, what to ignore, and what each clue should change in your next move.

Without a decision process: Every clue feels loud. The learner moves a lot, but the model in their head barely changes.

With a decision process: Each command has a reason. Each result changes the next move, even when progress is slow.

Kioptrix feels “hard” when the real problem is decision drift

The box is small, but your options multiply fast

Kioptrix Level is not giant, but it is noisy in the way a small room full of mirrors is noisy. The box presents enough services, enough possible paths, and enough familiar tool rituals to tempt you into action before you have a frame. That is where decision drift begins. You are no longer choosing. You are sliding.

Beginners often assume difficulty lives inside the machine. Sometimes it does. More often, especially on training boxes, difficulty blooms in the learner’s process. One open port suggests web enumeration. Another suggests version hunting. A third whispers old exploit notes from memory. Soon, three tabs become thirteen. The terminal looks busy. The mind looks crowded.

I have watched this happen in my own lab notes. A clean page begins with one target IP and one question. Twenty minutes later, the page has arrows, half-written tool outputs, and one sad little line that says, “maybe try something else?” It is almost comic. Not because the learner is foolish, but because the mind hates empty space and loves premature motion.

That is what makes a small lab feel larger than it is. Not the true attack surface alone, but the number of unranked choices you allow to stay alive at once.

Without a decision process, every clue feels equally important

This is the real tax. When you do not have a system for ranking clues, your brain assigns emotional weight instead. A flashy banner, a familiar service, an exploit you used once on a different box, a weird directory name, a suspicious version string. They all start wearing the same costume and shouting for attention.

But clues are not equal. Some are structural. Some are decorative. Some are misleading. Some are only useful after another piece of evidence lands. A version string with no confirmatory behavior is not yet a path. An open service with weak context is not yet a lead. Enumeration output is not a promise. It is a menu.

That distinction sounds small until you practice it. Then it becomes the difference between working with the box and wrestling your own impulses.

Why scattered effort creates the illusion of technical difficulty

When effort fragments, technical challenge looks bigger than it is. You run more commands, but your uncertainty does not narrow. You read more output, but your confidence does not improve. This creates a very specific feeling: “I am doing a lot, so the machine must be advanced.” Often, that is not true. You are simply paying interest on disorganization.

In help desk and sysadmin work, this pattern also appears. Tickets feel monstrous when the real problem is not the system but the lack of triage. Security labs are no different. The person who ranks evidence can look calmer not because they know more, but because they refuse to let every possibility sit at the same table.

- Small boxes can still produce too many plausible paths

- Busy work can disguise weak reasoning

- Clues need ranking before action

Apply in 60 seconds: Before your next command, write down which clue matters most and why it outranks the others.

Before the scan finishes, your workflow may already be broken

A lab gets harder the moment you confuse activity with progress

The first break in workflow often happens before enumeration is complete. The learner sees the terminal moving and assumes the session has structure. But activity is not structure. It is just movement with good marketing.

There is a tiny seduction in “doing something.” Launching another scan feels like agency. Pasting a command feels like momentum. Opening a browser tab feels like research. Yet none of those actions guarantee that your model of the target is improving. A good session is not defined by command count. It is defined by whether each action reduces uncertainty.

That is why two people can spend the same 45 minutes on Kioptrix and leave with completely different value. One has twelve screenshots and no narrative. The other has six observations, three discarded hypotheses, and one clear next test. Guess which person is actually getting stronger.

Why “try more things” is not the same as “think more clearly”

“Try more things” sounds brave. It sounds industrious. It even sounds gritty, like the kind of advice a mentor gives while leaning against a rack server with coffee gone cold. But in practice, it often means “increase input without improving judgment.”

More attempts can help when you have ranked paths and are testing them methodically. More attempts hurt when you are using volume to compensate for uncertainty. The difference is subtle but enormous. In one case, you are exploring. In the other, you are spraying a flashlight around a room because you do not want to admit you have not chosen where to look.

A learner once told me they spent an hour “being thorough.” Their notes showed seven unrelated actions and no explanation of why one followed another. That was not thoroughness. That was an anxious parade in a nice coat.

The first missing piece is usually not knowledge, but sequencing

Beginners frequently overestimate how much hidden technical knowledge they need and underestimate how much sequencing matters. Yes, knowledge helps. But order matters earlier. If you do not know what comes first, even familiar tools become confetti.

A simple example: seeing an old service version does not automatically mean “exploit now.” It may mean validate exposure, inspect related content, compare behavior, and check whether the apparent path fits the rest of the evidence. The order protects you from romance. And labs are full of romance. Flashy guesses, cinematic hopes, the dream of the shortcut. Lovely. Expensive.

| When you feel stuck | Bad default | Better move |
|---|---|---|
| Scan still running | Open random exploit pages | Write what question the scan should answer |
| One clue looks exciting | Commit emotionally | Ask what evidence would strengthen or weaken it |
| Too many options | Poke all of them | Rank one primary path and one backup path |

Neutral action: Use this table when your session starts to feel busier than clearer.

Start here first: what a decision process actually does for you

It turns the lab from a hunt into a chain of small judgments

A decision process does not make the box easier in the childish sense. It makes your interaction with the box legible. Instead of treating the session like a treasure hunt where the right answer is hidden in a bush, you treat it like a sequence of small judgments. What is exposed? What is probable? What deserves another minute? What no longer deserves one?

This sounds almost modest. Good. It should. Strong lab work is usually quieter than people expect. It is less fireworks, more bookkeeping with teeth.

That is one reason cybersecurity interviews often reward reasoning stories more than flashy tool recitations. The NICE Workforce Framework from NIST emphasizes tasks tied to analysis, investigation, documentation, and decision quality across many roles. In other words, the industry itself keeps telling learners a secret they often ignore: your value is not just that you can run commands, but that you can decide what they mean.

It helps you rank clues instead of reacting to all of them

Once you have a process, clues stop arriving as commands and start arriving as priorities. An old service version, a web page, a default directory, an error message, a login prompt, a banner. You no longer ask, “Which one is coolest?” You ask, “Which one is strongest, cheapest to test, and most connected to the rest of the box?”

That ranking step is where panic begins to thin. You are still uncertain, but now your uncertainty has furniture. It can sit somewhere. It no longer has to stampede through the room.

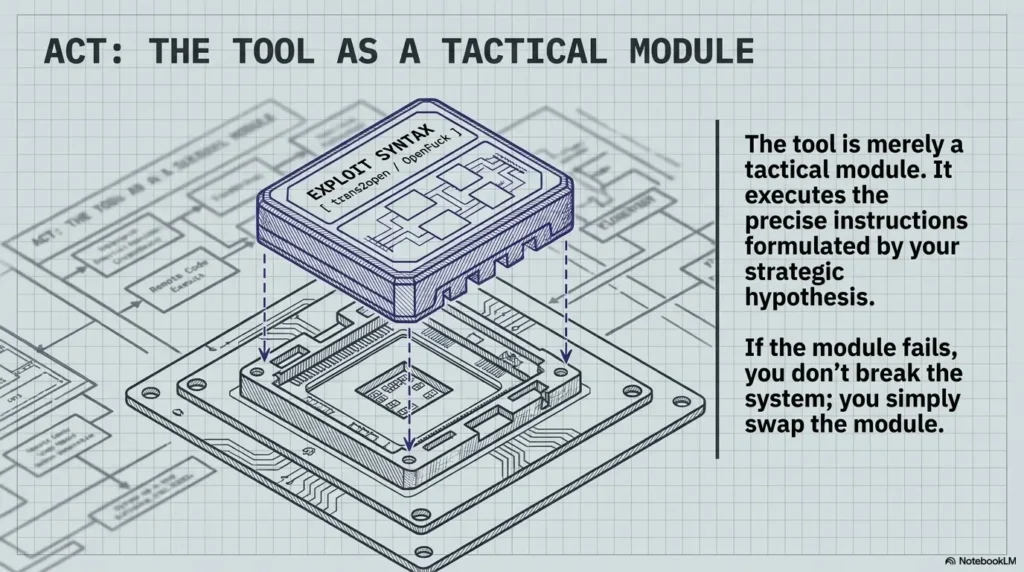

It gives each command a reason, not just a habit

The best shift a learner can make is moving from habit-driven commands to reason-driven commands. Many people inherit terminal routines like old family recipes. Scan this. Enumerate that. Run the usual wordlist. Check the usual exploit feed. Some of that is useful. But habit alone becomes brittle.

A reason-driven command has a sentence attached to it. “I am checking this because the web behavior suggests a likely misconfiguration.” Or, “I am validating this service because the version and exposure together might create a viable path.” That sentence matters. It tells you what success looks like, what failure means, and what should happen next.

Show me the nerdy details

In practice, a useful lab loop often looks like Bayesian-lite reasoning rather than tool choreography. You start with prior plausibility, collect evidence, and adjust confidence. You do not need formal math. You need a visible record of which signals strengthened a path, which weakened it, and which were merely adjacent.
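A minimal sketch of that visible record, assuming a three-step ordinal scale instead of real probabilities. The `Path` class, the `LEVELS` scale, and the three signal labels are hypothetical names chosen for illustration, not part of any tool.

```python
from dataclasses import dataclass, field

# Assumed ordinal scale; this is deliberately not formal probability math.
LEVELS = ["low", "medium", "high"]

# Assumed effect of each kind of signal on confidence.
SHIFT = {"strengthens": 1, "weakens": -1, "adjacent": 0}


@dataclass
class Path:
    name: str
    confidence: str = "medium"  # the prior: how plausible the path looks before testing
    history: list = field(default_factory=list)

    def update(self, evidence: str, effect: str) -> str:
        """Record one piece of evidence and move confidence by at most one step."""
        old = self.confidence
        index = LEVELS.index(old) + SHIFT[effect]
        index = max(0, min(len(LEVELS) - 1, index))  # clamp to the scale
        self.confidence = LEVELS[index]
        self.history.append((evidence, effect, old, self.confidence))
        return self.confidence


# Hypothetical session notes for one path.
web = Path("web application path")
web.update("version string looks old", "adjacent")
web.update("expected behavior did not appear", "weakens")
for entry in web.history:
    print(entry)
```

The history list is the point of the exercise. It shows which signals moved your confidence and which were merely nearby, so a later review can tell the two apart.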

Who this is for, and who it is not for

This is for learners who keep scanning, poking, and restarting without a clean plan

If you have ever told yourself, “I know I did something useful, I just cannot explain what changed,” this article is for you. If your notes look like a spilled drawer of commands, this is also for you. If you often reach the twenty-minute mark with six tabs open and no ranked path, welcome. Pull up a chair.

Many self-taught learners mistake this pattern for a talent problem. It is usually not. It is a process problem. And process problems are glorious because they can be redesigned.

This is for help desk, sysadmin, and beginner security learners building method over speed

People pivoting from help desk or systems work often underestimate how much transferable judgment they already have. Triage, incident notes, reproducing issues, narrowing variables, escalating based on evidence rather than hunches. That is not peripheral. That is a foundation.

Kioptrix can be especially valuable for this audience because it rewards exactly those habits when used well. You do not need to look like a movie hacker. You need to look like a person who can observe a system, form a hypothesis, test it cleanly, and explain why the next step made sense. If that sounds familiar, Kioptrix for help desk workers and Kioptrix for IT generalists both make the same case from slightly different angles.

This is not for people looking for a shortcut walkthrough instead of decision-making practice

A walkthrough can teach a route. It cannot teach your judgment unless you actively reconstruct the logic behind each move. If what you want is a shortcut to the flag, this piece will feel slower. That is intentional. Speed without explanation is a sugar rush. Fun for a minute. Then your energy falls through the floor.

- Yes: You often lose track of why you ran a command

- Yes: You restart labs when your notes become chaotic

- Yes: You want interview-ready reasoning, not only terminal muscle memory

- No: You only want a box-specific exploit sequence with no thought process

Neutral action: If you checked at least two “Yes” items, use the decision template later in this article on your next session.

Enumeration first, ego second

Why early certainty is one of the easiest ways to waste an hour

Nothing burns time like confidence that arrived too early. A learner sees a familiar service and mentally writes the ending before the middle exists. From there, every action becomes a search for confirmation. The box becomes a courtroom and the learner becomes a prosecutor with one suspect and not enough evidence.

Enumeration is supposed to delay that instinct. It is the discipline of letting the box speak before your pride does. Many learners hate this phase because it feels slower and less glamorous. But enumeration is not a warm-up. It is the floorboards. Skip them and the room still looks beautiful until your foot goes through.

I remember a lab session where I was certain a web angle was the story. The homepage looked suggestive enough to trigger all my favorite bad habits. Forty minutes later, the only thing I had exploited was my own patience. The eventual path was not hidden. It was simply quieter than my assumptions.

The best learners delay conclusions longer than beginners expect

One mark of maturity in lab work is the willingness to stay uncertain a little longer. Not forever. Not theatrically. Just long enough to gather enough context that your next choice deserves to be called a choice.

Strong learners do something beginners rarely notice: they keep their early hypotheses soft. They write possibilities in pencil, not in concrete. This makes them appear calm. In truth, they are often just less attached. And detachment is a superpower when the machine starts tossing out ambiguous signals like confetti at a wedding you did not mean to attend.

Let’s be honest… guessing feels faster right until it traps you

Guessing gives a thrilling sense of progress because it collapses uncertainty cheaply. That is why it is tempting. But cheap certainty often becomes expensive confusion. Once a learner falls in love with a path, they stop asking whether the evidence really deserves that devotion.

The goal is not to become cold or mechanical. It is to keep your curiosity from marrying the first plausible idea in the room.

- Enumeration is not delay for delay’s sake

- Soft hypotheses preserve flexibility

- Confidence should follow evidence, not charisma

Apply in 60 seconds: Label your current top path as “tentative” and write one thing that would disprove it.

The missing middle is where most learners get lost

Many people can scan and many people can exploit, but fewer can interpret

The missing middle in lab practice is interpretation. Most learners know what scanning is. Many know, at least in outline, what exploitation looks like. But between those poles sits the real work: deciding what the evidence suggests, what it does not suggest, and which paths deserve one more layer of attention.

This is where practice either becomes education or cosplay. The machine gives output. The learner must turn output into meaning. No tool can completely do that for you.

Interpretation is also what makes beginners feel “bad at labs” when they are actually bad at one narrower skill: moving from signal to decision. That is fixable. Very fixable. It just does not have the cinematic appeal of buying another course or memorizing another tool flag.

Why “What does this result mean?” matters more than “What tool comes next?”

“What tool comes next?” is not an evil question. It is just usually too early. If you ask it before interpreting the result in front of you, you are using tools as a substitute for thought sequencing. The better question is almost always, “What does this result mean in context?”

That phrase, in context, does heavy lifting. A service version alone means less than the service version plus reachable behavior. A directory listing means less than that listing plus permission clues. A login prompt means less than the prompt plus evidence of default creds, user naming patterns, or linked app behavior. Context is the bridge between fact and action.

How to move from raw output to a ranked list of plausible paths

A simple framework helps:

- What is the clue?

- What might it imply?

- How expensive is it to test?

- What result would strengthen it?

- What result would weaken it?

Notice how this turns interpretation into a small discipline rather than a mystical talent. You are not waiting for inspiration to arrive like a thunderbolt. You are building a ranked list with explicit logic. That is how confusion becomes workable.
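The five questions above can be kept as a small record per clue. This is a sketch under stated assumptions: the `ClueCard` name, its fields, and the 1 to 5 cost scale are hypothetical, and the example clue is invented rather than taken from any particular box.

```python
from dataclasses import dataclass


@dataclass
class ClueCard:
    clue: str            # What is the clue?
    implication: str     # What might it imply?
    test_cost: int       # How expensive is it to test? (assumed scale: 1 cheap .. 5 expensive)
    strengthens_if: str  # What result would strengthen it?
    weakens_if: str      # What result would weaken it?

    def is_testable(self) -> bool:
        """A clue is ready to test only when both outcomes are written down."""
        return bool(self.strengthens_if.strip()) and bool(self.weakens_if.strip())


# Hypothetical example: the weakening result has not been defined yet.
card = ClueCard(
    clue="login prompt on the web application",
    implication="authentication may be weak",
    test_cost=2,
    strengthens_if="error messages differ for valid and invalid users",
    weakens_if="",
)
print(card.is_testable())
```

A card that is not testable yet is a useful warning. It usually means you know what you hope to see but have not decided what would change your mind.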

Short Story: A learner I once coached kept saying they were “bad at exploitation.” Their notes told a different story. They were not failing at exploitation. They were failing one step earlier. Every enumeration result triggered a new branch, and none of those branches had a confidence score, a test condition, or a stopping rule. By the end of each session, they had touched five paths and understood none of them deeply. So we changed one thing.

Every clue got four labels: meaning, confidence, next test, and kill condition. The next week, they solved less quickly than they hoped, but far more clearly. More important, they could explain exactly why they moved from one path to the next. Their confidence stopped coming from whether they “popped the box” and started coming from whether they could defend their reasoning. That is when labs begin to teach rather than merely entertain. A simple Kioptrix decision tree or a structured recon log template can make this shift much easier to repeat.

Do not build the path backward from the exploit you want

Why learners often fall in love with the flashy route too early

Every learner has a favorite kind of story. For some, it is web exploitation. For others, privilege escalation. For others, some beautiful one-liner they saw in a write-up and have wanted to use ever since. The danger is not admiration. The danger is letting desire select the route before evidence does.

Backward-built paths feel smart because they are tidy. You start with the ending you want and hunt for clues that support it. It feels elegant. It is also one of the fastest ways to misread a target.

There is a peculiar embarrassment here too. Once you have emotionally invested in a flashy route, abandoning it feels like failure. So people cling. They keep testing weak branches because quitting the idea feels heavier than admitting it never deserved center stage.

Confirmation bias turns weak clues into fake confidence

Confirmation bias in labs rarely looks dramatic. It looks polite. You notice the clue that fits your hope. You interpret ambiguity as support. You ignore disconfirming behavior because it seems less exciting. Soon, you have built a castle from three bricks and enthusiasm.

That is why decision logs matter. A written process makes bias easier to spot. If you cannot explain why a clue matters beyond “it looks promising,” then the clue probably owns you more than you own it.

What changes when you let the evidence lead the route selection

When evidence leads, your path becomes less glamorous and more durable. You may choose the boring test first because it is cheaper and stronger. You may delay a sexy route because another clue better explains the machine as a whole. This can feel anticlimactic. Good. Real work often does.

The happiest surprise for many learners is that evidence-led routes are often faster in the long run. Not because they are magical, but because they produce fewer theatrical detours.

- Write your preferred exploit path in one line

- List two pieces of evidence that actually support it

- List one stronger competing path

- List one result that would make you abandon your favorite route

Neutral action: Complete this list before spending more than 10 minutes on a single attractive path.
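The checklist above can be turned into a quick gate that reports what is still missing before you commit more time. This is only a sketch: the function name `missing_before_commit` and its parameters are hypothetical, and the example values are invented.

```python
def missing_before_commit(path_summary, supporting_evidence, competing_path, abandon_if):
    """Return the checklist items that are still missing for a favorite path."""
    missing = []
    if not path_summary.strip():
        missing.append("one-line path summary")
    if len(supporting_evidence) < 2:
        missing.append("two pieces of supporting evidence")
    if not competing_path.strip():
        missing.append("one stronger competing path")
    if not abandon_if.strip():
        missing.append("one result that would make you abandon the route")
    return missing


# Hypothetical favorite path with only one supporting observation so far.
gaps = missing_before_commit(
    path_summary="exploit the old service version",
    supporting_evidence=["banner shows an old version"],
    competing_path="",
    abandon_if="service does not respond the way the version implies",
)
print(gaps)
```

An empty list does not prove the path is right. It only shows that you chose it on purpose and know what would make you leave it.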

Common mistakes that make Kioptrix feel harder than it is

Treating every open port like an equally urgent story

Open ports are invitations, not commands. New learners often treat every exposed service as a moral obligation. The result is a wandering session with no weight distribution. A better approach is to ask which service is most likely to yield leverage, which is cheapest to validate, and which aligns with the rest of the machine’s behavior.

You do not need equal attention. You need intelligent inequality.

Skipping note-taking because “I’ll remember it”

You will not remember it. Or worse, you will remember the wrong version of it. Lab memory is famously theatrical. It preserves the mood of the moment and drops the operational detail on the floor.

Notes are not a school exercise. They are a control surface for your own thinking. The absence of notes forces your brain to keep context live in working memory while also interpreting new data. That is a miserable bargain. This is exactly why a Kioptrix technical journal or the right note-taking tool for Kioptrix can quietly improve your sessions more than one more scanner ever will.

Re-running tools instead of updating your hypothesis

One of the most common stall patterns is rerunning familiar tools because they offer the comforting illusion of progress. When the session feels thin, learners return to scanning the way some people rearrange a desk instead of answering the email they fear.

There are times when rescanning is correct. But if you are rerunning tools without a changed question, you are often rehearsing instead of learning.

Mistaking dead ends for proof that you are bad at labs

A dead end is not a verdict on your talent. It is an informational event. It tells you something narrowed, something weakened, something failed to generalize. When recorded well, dead ends become part of the map. When interpreted emotionally, they become fog.

The learner’s job is not to avoid dead ends. It is to make them useful.

- Ports do not deserve equal attention

- Notes reduce cognitive drag

- Dead ends are data, not identity

Apply in 60 seconds: Review your last three actions and label each as evidence-gathering, hypothesis-testing, or comfort-seeking.

No, really, stop doing this

Do not switch strategies every ten minutes just to feel momentum

Strategy-switching feels productive because novelty wakes the brain up. But novelty is not always progress. Sometimes it is just escape in a nicer jacket. If you change direction every ten minutes, you never stay with a path long enough to learn whether it was weak or whether your testing was.

There is a difference between adaptive thinking and emotional dodging. One responds to changed evidence. The other responds to discomfort.

Do not collect commands without writing down why each one mattered

A long command list impresses beginners and bores future-you. What matters is not merely what you ran, but why you ran it, what you expected, and what changed afterward. This is the bridge between practice and narrative competence.

In interviews, nobody is moved by a recital of tools alone. But a concise explanation of why a clue changed your ranking of plausible paths? That sounds like someone awake during the work.

Here’s what no one tells you… confusion is often a sorting problem, not a talent problem

This is one of the kindest truths in technical learning. Confusion often means your material is unsorted, not that your mind is unfit. Once you sort, the fog thins. Not instantly. Not romantically. But steadily.

I have had sessions where the terminal felt like static until I paused, wrote three candidate paths, and forced myself to rank them. No new tool. No hidden trick. Just sorting. The moment felt almost annoying. You want the answer to be more glamorous than a piece of disciplined paper. Too bad. Paper wins more often than pride.

A usable decision process is smaller than most learners think

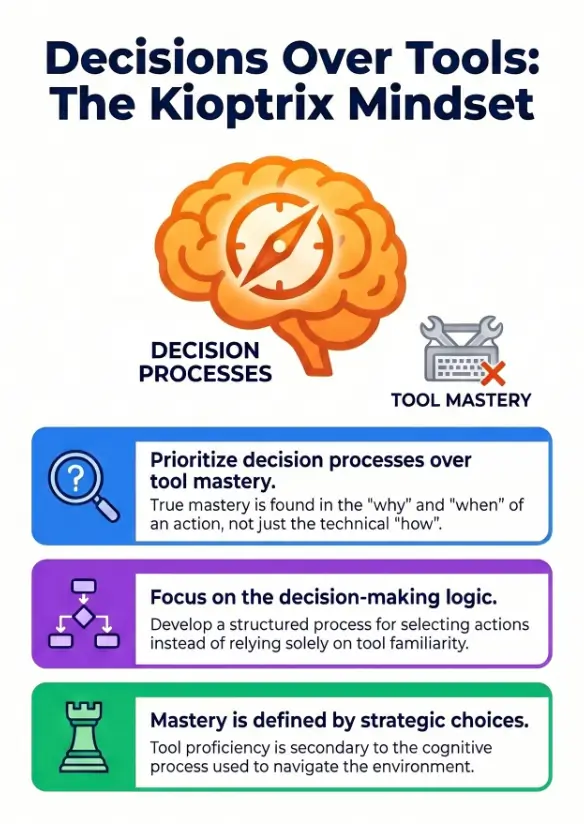

Step 1: Observe what is exposed

This first step is simple in wording and surprisingly easy to rush. Observe what is actually present before you invent the rest. Exposed services, application behavior, reachable content, error messages, authentication surfaces, naming patterns. Stay close to the machine. Do not decorate it too quickly with memories from other boxes.

Your task here is not to “solve.” It is to produce a trustworthy picture of what exists.

Step 2: Rank what is most promising

Next, rank by plausibility and cost. Which clue is strongest? Which test is cheapest? Which path best explains the evidence you already have? Which path, if disproved, would help you prune the tree fastest?

This is where many learners feel uneasy because ranking means committing. But you are not marrying the path. You are simply choosing what deserves the next candle in a dark room.

Step 3: Test one path with a clear reason

Pick one primary path and one backup path. Not four. Not nine. One and one. Then test with a sentence attached: “I am doing this because…” That sentence is your leash. It keeps you from sprinting into the reeds because a shiny error page winked at you.
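Steps 2 and 3 can be sketched together: rank the candidates, then keep exactly one primary and one backup. The `Candidate` fields, the 1 to 5 scales, and the rule that a path without a reason sentence is not eligible are assumptions made for this example, and the candidate names are hypothetical.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    strength: int  # assumed scale: 1 weak .. 5 strong
    cost: int      # assumed scale: 1 cheap .. 5 expensive
    reason: str    # the "I am doing this because..." sentence


def pick_paths(candidates):
    """Return (primary, backup): strongest first, cheaper test breaks ties."""
    with_reason = [c for c in candidates if c.reason.strip()]
    ranked = sorted(with_reason, key=lambda c: (-c.strength, c.cost))
    primary = ranked[0] if ranked else None
    backup = ranked[1] if len(ranked) > 1 else None
    return primary, backup


# Hypothetical candidates written down after enumeration.
candidates = [
    Candidate("file share", 3, 1, "exposed service with a cheap read-only check"),
    Candidate("web app", 4, 2, "reachable behavior matches the version clue"),
    Candidate("old exploit from memory", 4, 4, ""),  # no reason, so it is not eligible
    Candidate("mail banner", 2, 2, "banner only, no supporting behavior"),
]
primary, backup = pick_paths(candidates)
print(primary.name, "|", backup.name)
```

Everything outside the returned pair is parked, not deleted. If the primary path weakens, you rank again instead of reaching for whichever idea feels freshest.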

Step 4: Record what changed, then choose again

After every meaningful action, write what changed. Stronger path? Weaker path? No signal? Contradiction? New sub-question? Then choose again. That is the loop. Observe, rank, test, update.

Notice how unglamorous this is. That is part of its power. The workflow is small enough to use under stress. Elegant systems that require perfect discipline usually die the first time the terminal spits out something strange. If you want a more concrete version of this loop, a Kioptrix methodology and a practical best practice path for Kioptrix Level can help turn the loop into muscle memory.

A quick drift check uses three inputs: number of active paths, number of notes written after the last action, and number of minutes since your last ranked decision.

If active paths > 2, notes = 0, and minutes > 15, you are probably drifting rather than progressing.

Neutral action: Cut to one primary path, one backup path, and write a one-line reason for each.
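The three-input check above is small enough to write as a single function. The thresholds come straight from the rule as stated. The function and parameter names are hypothetical.

```python
def is_drifting(active_paths: int, notes_since_last_action: int,
                minutes_since_ranked_decision: int) -> bool:
    """Apply the rule: more than 2 paths, no notes, and over 15 minutes means drift."""
    return (
        active_paths > 2
        and notes_since_last_action == 0
        and minutes_since_ranked_decision > 15
    )


# Hypothetical mid-session snapshot: four live paths, no notes, 20 minutes since ranking.
if is_drifting(active_paths=4, notes_since_last_action=0, minutes_since_ranked_decision=20):
    print("Probably drifting: cut to one primary path and one backup path.")
```

All three conditions must hold at once, so the check stays quiet when you are juggling paths but still writing notes. Treat it as a nudge, not a verdict.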

Show me the nerdy details

A compact loop survives cognitive load better than a sophisticated framework with too many branches. Under pressure, the best process is the one you can still execute when uncertainty rises. In incident response, troubleshooting, and lab work, lightweight loops often outperform ornate systems because they preserve decision quality when attention is thin.

When the lab stalls, the question is not “What now?” but “What changed?”

A good process notices when evidence got stronger, weaker, or irrelevant

When learners stall, they often ask the wrong question. “What now?” sounds sensible, but it is too broad. It treats the session like a blank slate. A better question is, “What changed?” That question forces you back into sequence.

Did your favorite path get stronger? Did a test fail in a way that weakens the hypothesis? Did a clue turn out to be merely adjacent, not central? Did two pieces of evidence stop fitting together? Those are process questions. And process questions rescue you from random action.

Why stalling often comes from not updating the model in your head

The mental model is the invisible map you are carrying around. Stalling happens when the map remains frozen while the evidence moves. You keep acting as if a path is promising because it once was. You keep exploring an angle because it is familiar, not because it is current.

This is where written updates matter. They externalize model revision. They make it visible when your confidence should go down, not just up. Many learners are comfortable increasing confidence and weirdly reluctant to lower it. But reducing confidence is not defeat. It is accuracy wearing a raincoat.

What to do when all current paths look thin

Sometimes every path really does look weak. That does not mean panic. It means choose the least expensive way to improve the map. Revisit base observations. Verify assumptions. Check whether your ranking criteria were distorted by excitement. Ask whether a path looks thin because it is bad or because you tested it lazily.

Thin paths are normal. The mistake is to treat thinness as a personal insult.

- Ask what changed, not just what to do next

- Confidence should rise and fall visibly

- Weak paths can still be useful if they sharpen the map

Apply in 60 seconds: Write one sentence beginning with “This path is weaker now because…”

Skill growth happens in the decision log, not just in the terminal

The notes that matter most are choices, assumptions, and reversals

Many learners keep notes like stenographers and then wonder why the notes do not help them think. Raw output has value, but the most educational notes are decisions, assumptions, reversals, and confidence shifts. These are the bones of reasoning.

A good decision log might say:

- Observed service X and behavior Y

- Ranked web path above service path because of exposure and test cost

- Expected A; saw B instead

- Reduced confidence in path 1 from medium to low

- Promoted backup path after contradiction

That style of note ages well. It can teach you next week, not just narrate what you already forgot.
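If you prefer structured notes, the same kind of entry can be sketched as a small record. The `LogEntry` fields and the one-line format are assumptions for illustration, and the values reuse the placeholder A and B from the example log above.

```python
from dataclasses import dataclass


@dataclass
class LogEntry:
    action: str
    reason: str
    expected: str
    observed: str
    confidence_before: str
    confidence_after: str

    def reversal(self) -> bool:
        """True when the result differed from the expectation."""
        return self.expected != self.observed

    def line(self) -> str:
        """Render the entry as one readable line for the decision log."""
        shift = f"{self.confidence_before} -> {self.confidence_after}"
        flag = " [contradiction]" if self.reversal() else ""
        return f"{self.action} | because {self.reason} | confidence {shift}{flag}"


entry = LogEntry(
    action="tested path 1",
    reason="cheapest strong hypothesis",
    expected="A",
    observed="B",
    confidence_before="medium",
    confidence_after="low",
)
print(entry.line())
```

The format matters less than the habit. Every entry carries a reason, an expectation, and a confidence shift, which are the parts that raw terminal output never records for you.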

Why interview-ready stories come from reasoning, not from tool lists

When employers assess junior security talent, they are often trying to answer a simple question: can this person think under uncertainty and explain themselves without theater? A clean reasoning story does exactly that.

You can say, “I noticed these services, ranked this path first because it was the cheapest strong hypothesis, tested it, updated confidence, and pivoted when the evidence weakened.” That sounds credible because it is credible. It mirrors real work more than a breathless command recital ever will.

NIST’s NICE materials and many entry-level security role descriptions reinforce this. Analysis, communication, documentation, and investigative reasoning are not side dishes. They are the meal. That is also why building Kioptrix interview stories from your sessions matters more than collecting glamorous screenshots alone.

How better documentation makes the next lab feel less chaotic

One quiet miracle of good notes is that they reduce the chaos of future sessions. The next box still has surprises, of course. But your personal process begins to feel familiar. You stop arriving in each lab like a tourist with no map and start arriving like a mechanic with a checklist and a tolerable amount of skepticism.

That shift matters psychologically. Labs stop being tests of identity and start being places where you practice decisions. Once that happens, frustration becomes easier to metabolize. You are not failing. You are refining.

| Tier | What your notes contain | What changes |
|---|---|---|
| Tier 1 | Commands only | You can replay steps, but not explain judgment |
| Tier 2 | Commands + outputs | You remember facts better, but pivots stay fuzzy |
| Tier 3 | Outputs + reasons | You begin to defend choices clearly |
| Tier 4 | Reasons + confidence shifts | You can explain pivots and dead ends |
| Tier 5 | Reasons + shifts + kill conditions | You are training judgment, not only repetition |

Neutral action: Aim for Tier 4 before worrying about fancier tooling.
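One way to audit your own notes against the tiers above is a tiny classifier. This is one possible reading of the table, not a formal rubric: the function name, the element labels, and the rule that a tier counts only when every listed ingredient is present are all assumptions.

```python
def note_tier(contains: set) -> int:
    """Return the highest tier (1-5) whose ingredients all appear in the notes, or 0."""
    if {"reasons", "confidence shifts", "kill conditions"} <= contains:
        return 5
    if {"reasons", "confidence shifts"} <= contains:
        return 4
    if {"outputs", "reasons"} <= contains:
        return 3
    if {"commands", "outputs"} <= contains:
        return 2
    if "commands" in contains:
        return 1
    return 0


# Hypothetical review of last session's notes.
last_session = {"commands", "outputs", "reasons"}
print(note_tier(last_session))
```

Run it against an honest inventory of what your notes actually contain, not what you meant to write. The gap between the two is usually where the next improvement sits.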

FAQ

Why does Kioptrix Level feel harder than it looks?

Because small vulnerable machines can generate more plausible choices than beginners know how to rank. The difficulty is often not raw technical complexity. It is unstructured decision-making, weak note discipline, and premature commitment to one path.

Do I need more tools, or do I need a better process?

Usually a better process first. Additional tools can help later, but many stalled sessions come from poor ranking, unclear hypotheses, and lack of decision logging. A smaller, clearer workflow often improves performance faster than a bigger toolkit.

How do I know which clue matters first in a vulnerable lab?

Start with the clue that is strongest, cheapest to test, and most connected to the rest of the evidence. Ask which path would produce the most useful information even if it fails. That often reveals the best next move.

Is it normal to feel stuck after enumeration?

Yes. Enumeration gives you facts, not conclusions. The feeling of being stuck often means you are in the interpretation phase. That is normal. The fix is to rank paths, test one clearly, and write what changed.

Should I restart the box when I feel lost?

Not immediately. Restarting can erase the most educational part of the session, which is diagnosing how your reasoning drifted. Before restarting, review your notes, prune active paths, and ask what changed since your last strong hypothesis. Readers who struggle with this cycle may also find the companion pieces on Kioptrix Level patience and on a more repeatable Kioptrix practice routine useful.

How detailed should my notes be during practice?

Detailed enough to explain your decisions later. You do not need to capture every tiny terminal line. Focus on observations, hypotheses, reasons for tests, confidence changes, contradictions, and pivot points.

What is the difference between guessing and hypothesis testing?

Guessing jumps from possibility to action without defining what evidence would confirm or weaken the idea. Hypothesis testing states why a path is plausible, what result is expected, and what outcome would lower confidence.

Can a decision process help me even if I am still a beginner?

Yes. In many ways, it helps beginners the most. A simple decision loop reduces chaos, improves note quality, and makes each session easier to review. It turns practice from scattered effort into repeatable learning. If you are at that stage, the companion pieces on Kioptrix for beginners and on what to expect from your first Kioptrix lab fit naturally alongside this article.

Next step: build one page that decides your next move

Use a simple template: clue, meaning, confidence, next test

If you take only one practical step after reading this, let it be this: make a one-page decision sheet for your next Kioptrix session. Four columns are enough. Clue. Meaning. Confidence. Next test. That is it. No ornate dashboards. No productivity opera. Just one page sturdy enough to hold your thinking.

This template works because it forces interpretation into the open. A clue without meaning is just a fact. A meaning without confidence is just a vibe. A next test without a reason is just terminal weather.

Limit yourself to one primary path and one backup path

This constraint feels unfair right up until it starts helping. Limiting yourself to one primary path and one backup path keeps the session from expanding into an anxious festival of half-tested ideas. It also makes your review easier. You can later explain why path A beat path B and what evidence changed that ranking.

That is the loop this article promised to close. Kioptrix feels hard without a decision process because the box becomes a pile of ungoverned possibilities. Once you govern the possibilities, the same machine becomes more teachable. Not easy, exactly. But teachable. And teachable is where real confidence begins.
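The one-primary, one-backup constraint can also be sketched as a small ranking step. The version below is a rough illustration, not a prescribed formula: the `Path` class, the 1-to-5 scales, and the scoring rule (evidence strength plus connection to other evidence, minus test cost) are assumptions chosen for the example, loosely following the FAQ's advice to start with the clue that is strongest, cheapest to test, and most connected.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """A candidate line of investigation (hypothetical structure)."""
    name: str
    strength: int  # 1-5: how strong the supporting evidence is
    cost: int      # 1-5: how expensive the test is (time, effort)
    links: int     # 1-5: how well it connects to the other evidence


def score(path):
    # Assumed weighting: reward strength and connection, penalize cost.
    return path.strength + path.links - path.cost


def pick_primary_and_backup(paths):
    """Rank paths by score; keep two active and park the rest."""
    ranked = sorted(paths, key=score, reverse=True)
    primary = ranked[0] if ranked else None
    backup = ranked[1] if len(ranked) > 1 else None
    parked = ranked[2:]
    return primary, backup, parked


if __name__ == "__main__":
    # Placeholder names and numbers, for illustration only.
    candidates = [
        Path("path A", strength=4, cost=2, links=3),
        Path("path B", strength=3, cost=1, links=2),
        Path("path C", strength=5, cost=5, links=1),
    ]
    primary, backup, parked = pick_primary_and_backup(candidates)
    print("primary:", primary.name)
    print("backup:", backup.name)
    print("parked:", [p.name for p in parked])
```

The numbers matter less than the act of writing them down. Parked paths are not deleted, so you can later explain why path A beat path B and what evidence would have changed that ranking.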

After each command, write one sentence explaining what changed

This is the smallest habit with the highest return. After each meaningful command, write one sentence beginning with one of these:

- This strengthened the path because…

- This weakened the path because…

- This did not change much because…

- This introduced a better path because…

That sentence converts the terminal from spectacle into training.
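For note-takers who like a little structure, the four sentence starters above can be turned into a tiny logging helper. This is a minimal sketch under stated assumptions: the `Shift` enum and `log_line` function are hypothetical names, and the command and reason in the usage example are placeholders.

```python
from enum import Enum


class Shift(Enum):
    """The four sentence starters, one per kind of confidence change."""
    STRENGTHENED = "This strengthened the path because"
    WEAKENED = "This weakened the path because"
    UNCHANGED = "This did not change much because"
    NEW_PATH = "This introduced a better path because"


def log_line(command, shift, reason):
    """Build one log sentence; refuse to log a command without a reason."""
    reason = reason.strip().rstrip(".")
    if not reason:
        raise ValueError("a log line needs a reason")
    return f"`{command}` -> {shift.value} {reason}."


if __name__ == "__main__":
    # Placeholder command and reason, for illustration only.
    print(log_line("<command you ran>", Shift.WEAKENED,
                   "the output did not match what the hypothesis predicted"))
```

Rejecting an empty reason is deliberate. It keeps the log from sliding back into a bare list of commands.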

For readers building toward interviews or role pivots, this is also where your future stories come from. Platforms like Hack The Box Academy, TryHackMe, and older community write-ups can teach technique, but your employable edge will still come from the quality of your reasoning. The OSCP exam guide and related community discussions have long stressed methodology, note discipline, and calm evidence handling over random heroics. The field keeps saying the same thing in different accents: think cleanly, document clearly, and let the evidence steer. If you want that habit to survive beyond one good session, a weekly Kioptrix habit, an honest self-assessment routine, or a broader Kioptrix learning path can keep the process from evaporating after the weekend.

- Build a one-page decision sheet

- Keep one primary path and one backup

- Log what changed after each meaningful step

Apply in 60 seconds: Create four headings on a blank page: clue, meaning, confidence, next test.

So here is the honest next move, small enough to do within 15 minutes. Open a blank note. Write the four columns. Launch your next lab. Do not try to be brilliant. Try to be sequential. If you do that, Kioptrix will stop feeling like a wall of static and start sounding more like a conversation you can finally follow.

Last reviewed: 2026-04.