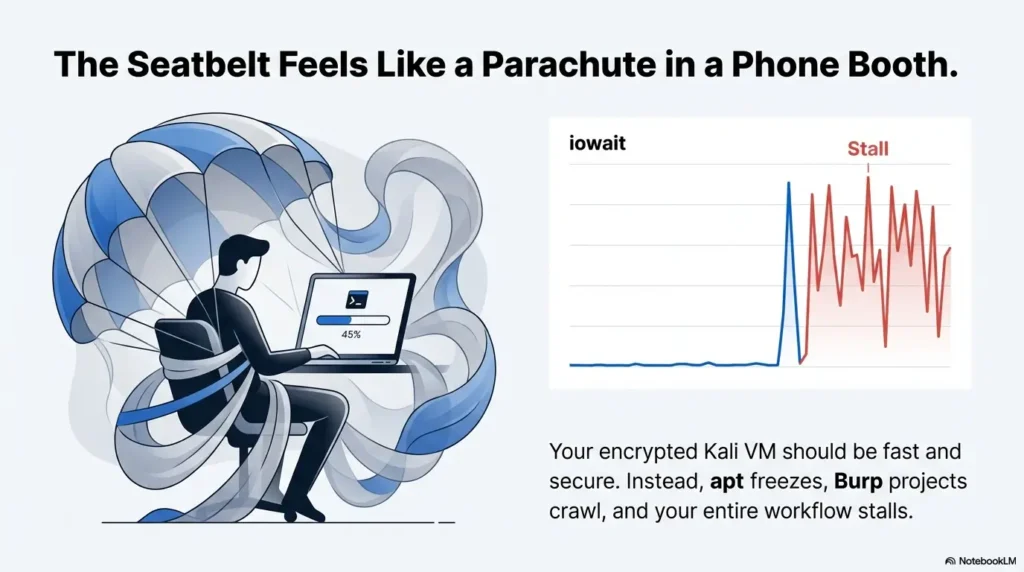

Boot takes forever, apt looks frozen, and Burp projects open like they’re being unpacked over dial-up—yet everyone’s first “fix” is the wrong one: disable LUKS.

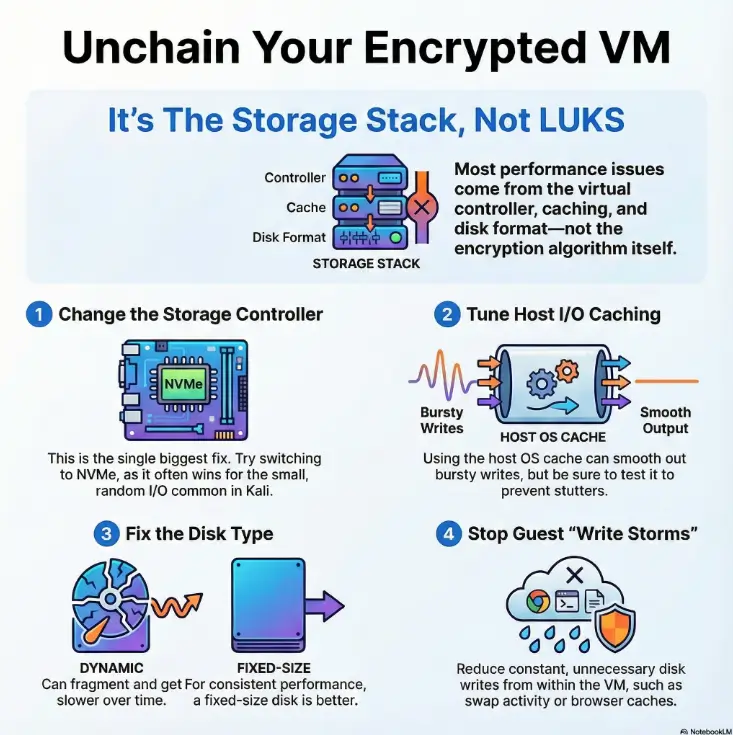

When a Kali encrypted VM is slow on VirtualBox, the culprit is usually disk I/O latency in the storage stack (controller choice, host I/O cache behavior, dynamic-disk growth, and constant small writes), not encryption doing some dramatic slowdown all by itself. Keep guessing and you’ll keep paying in wasted weekends, corrupted upgrades, and a VM that never feels trustworthy again.

This post gets you back to a responsive encrypted Kali by proving where the bottleneck lives, changing the biggest levers first (NVMe vs SATA/SCSI, caching, disk strategy), and retesting with quick iostat/fio receipts so you can roll forward confidently or roll back cleanly.

Quick definition: iowait is the time your VM’s CPU spends waiting on disk—your system feels “slow” not because it’s busy computing, but because it’s stuck in line behind storage.

A slow Kali encrypted VM on VirtualBox is usually a storage stack problem—not “LUKS is too slow.” Fix it by validating VirtualBox’s storage controller choice (NVMe often wins), correcting host I/O cache behavior, avoiding dynamic-disk fragmentation, and reducing write storms (swap/journaling/browser caches). Benchmark before/after with quick I/O checks so you keep LUKS and regain responsive installs, updates, and daily tool workflows.


Slow I/O proof: isolate disk from “Kali feels heavy”

Before we touch a single VirtualBox checkbox, we need to answer one uncomfortable question: Is it really disk I/O? Because I’ve watched smart people spend an entire Saturday “optimizing encryption,” only to discover their host SSD was 98% full and the VM was choking on write amplification. Ask me how I know. (Spoiler: it involved coffee, regret, and an apt progress bar that didn’t move for 12 minutes.)

Quick triage: boot time vs apt vs “typing lag”

- Boot slow + unlock prompt slow → could be PBKDF/unlock cost, could be disk. We’ll separate those in the next section.

- apt update/apt upgrade slow → often storage controller + caching + small random writes. If you’re also hitting classic update friction like Kali “packages have been kept back” after an upgrade, solve that separately so you’re not timing a broken upgrade path.

- UI stutters while disk is busy → classic high iowait (disk queue backpressure).

- Everything slow including mouse → sometimes host resource pressure (host paging, thermal throttling).

- Pick one real workload (e.g., apt upgrade or opening a Burp project).

- Measure it once before changes, once after.

- Only change one lever at a time.

Apply in 60 seconds: Run uptime and look for high load with sluggish UI—then confirm with iostat.
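One way to keep the before/after comparison honest is to time the workload the same way on every run. The sketch below is only an illustration: the function name `timed_run` and the log file location are hypothetical, not something Kali or VirtualBox ships.

```shell
#!/bin/sh
# Hypothetical helper: time one workload and append the result to a log,
# so "before" and "after" runs are recorded in the same format.
LOG="${LOG:-$HOME/io-baseline.log}"   # assumed log location; change freely

timed_run() {
    label=$1; shift
    start=$(date +%s)
    "$@"                               # run the real workload
    rc=$?
    end=$(date +%s)
    # One line per run: UTC timestamp, label, elapsed seconds, exit status
    printf '%s\t%s\t%ss\texit=%s\n' \
        "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$label" "$((end - start))" "$rc" >> "$LOG"
    return "$rc"
}
```

A run could look like `timed_run upgrade-before sudo apt-get -y upgrade`, followed later by the same command under an `upgrade-after` label. Whole-second resolution is coarse, but the pain described here is measured in minutes, so it is probably enough.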

Minimal benchmarks that map to real pain (iostat, iotop, one fio job)

Inside the Kali VM, run these while you reproduce the slowness (like apt upgrade or unpacking a big wordlist). You’re watching for disk saturation and queueing.

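    sudo apt-get update
    sudo apt-get install -y sysstat iotop fio

    # 1) Is the VM waiting on disk?
    iostat -xz 1

    # 2) Who is writing?
    sudo iotop -oPa

    # 3) One simple test (small random reads/writes are what kill VMs).
    #    --ioengine=libaio makes --iodepth=32 effective; fio's default engine is synchronous.
    #    If /tmp is a tmpfs on your install, point --filename at a path on the encrypted disk.
    fio --name=randrw --filename=/tmp/fio.test --size=1G --direct=1 --ioengine=libaio --rw=randrw --bs=4k --iodepth=32 --numjobs=1 --runtime=30 --time_based --group_reporting
    rm -f /tmp/fio.test

What you’re looking for: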

- %util near 100% with high await (per-request latency; recent sysstat versions split it into r_await and w_await) → disk queue is the bottleneck.

- High iowait and “everything stutters” → storage path needs love.

- One process constantly writing (browser caches, logging, swap) → fix the write storm later.
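If staring at scrolling iostat columns gets old, a small filter can flag the saturated devices for you. This is a rough sketch: `flag_busy_devices` is a made-up name, the 90% default threshold is an arbitrary assumption, and the columns are looked up by header name because their order differs between sysstat versions.

```shell
#!/bin/sh
# Hypothetical filter: read `iostat -x` text on stdin and print devices whose
# %util is at or above a threshold (first argument, default 90).
flag_busy_devices() {
    awk -v limit="${1:-90}" '
        $1 ~ /^Device/ {                      # header row: remember column positions
            for (i = 1; i <= NF; i++) col[$i] = i
            next
        }
        ("%util" in col) && NF >= col["%util"] && $(col["%util"]) + 0 >= limit {
            w = ("w_await" in col) ? $(col["w_await"]) : "n/a"
            printf "%s util=%s%% w_await=%s ms\n", $1, $(col["%util"]), w
        }
    '
}
```

Something like `LC_ALL=C iostat -xz 1 5 | flag_busy_devices 90` would feed it five reports. Keep in mind that the first iostat report is an average since boot, so the later lines are the ones that describe the stutter you are reproducing.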

Let’s be blunt: if the host disk is the limiter, no VM tweak saves you

If your host drive is nearly full, on a slow external HDD, or the host is swapping hard, VirtualBox can only do so much. The VM’s encryption becomes the scapegoat because it’s visible. The host’s disk pressure is the invisible bouncer.
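A quick host-side check can rule this out before any VM tuning. The sketch assumes a Linux or macOS host shell, the default "VirtualBox VMs" folder, and an arbitrary 90% warning threshold; `disk_used_pct` is a hypothetical helper name.

```shell
#!/bin/sh
# Run on the HOST, not inside the guest.
# Hypothetical helper: print the used-space percentage of the filesystem
# that holds a given path (e.g. the folder containing the VM's disk image).
disk_used_pct() {
    df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

VM_DIR="${VM_DIR:-$HOME/VirtualBox VMs}"   # assumed default VM folder
[ -d "$VM_DIR" ] || VM_DIR=.               # fall back to the current directory
used=$(disk_used_pct "$VM_DIR")

# 90 is an arbitrary warning threshold, not a VirtualBox limit
if [ "${used:-0}" -ge 90 ] 2>/dev/null; then
    echo "Host volume is ${used}% full: fix this before tuning the VM"
else
    echo "Host volume is ${used}% full"
fi
```

If the number is uncomfortably high, freeing space or moving the VM to a healthier volume comes before every other lever in this post.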

Show me the nerdy details

High latency in a VM often comes from queueing: small random writes pile up, the host filesystem flushes, and the hypervisor serializes more than you expect. That’s why “more CPU cores” doesn’t fix it when %util/await says the disk path is the choke point.

The hidden fork: unlock-time vs runtime performance (stop blaming LUKS)

Encryption has two “costs,” and mixing them up is how people end up turning off security for zero speed gain. So let’s separate them like grown-ups.

Unlock cost (PBKDF) vs throughput cost (dm-crypt at runtime)

- Unlock cost is the “how long does it take to accept my passphrase?” moment.

- Runtime cost is “how fast can the VM read/write blocks all day?”

In practice, many “slow encrypted VM” complaints are actually runtime I/O latency caused by the VM storage stack—not the encryption algorithm doing dramatic villain laughs.

Why PBKDF tweaks change boot prompt time, not apt upgrade time

If you’ve ever thought, “Maybe I should lower the key derivation cost,” you’re not alone. I once did exactly that—then realized my apt slowness didn’t budge because the bottleneck was a VirtualBox controller choice I made on day one and never revisited. Lesson learned: unlock-time tuning is not disk tuning.

Curiosity gap: the one metric that reveals “LUKS isn’t your problem”

Watch iowait during a slow moment. If CPU isn’t pegged but the system is waiting on disk, your best speed wins come from the hypervisor storage path (controller/caching/disk format), not encryption parameters.
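If you want that metric as a single number, it can be derived from two readings of /proc/stat inside the guest. A minimal sketch, assuming the standard Linux field order on the aggregate cpu line; `iowait_pct` and the 2-second default interval are illustrative choices.

```shell
#!/bin/sh
# Hypothetical helper: share of CPU time spent in iowait between two readings
# of the aggregate "cpu" line in /proc/stat.
# Field order on that line: cpu user nice system idle iowait irq softirq steal ...
iowait_pct() {
    # $1 = first "cpu ..." line, $2 = second "cpu ..." line
    printf '%s\n%s\n' "$1" "$2" | awk '
        { total = 0
          for (i = 2; i <= NF && i <= 9; i++) total += $i   # user..steal
          t[NR] = total; w[NR] = $6 }                        # $6 = iowait
        END { d = t[2] - t[1]
              if (d <= 0) print "0.0"
              else printf "%.1f\n", 100 * (w[2] - w[1]) / d }'
}

if [ -r /proc/stat ]; then
    a=$(grep '^cpu ' /proc/stat)
    sleep "${INTERVAL:-2}"
    b=$(grep '^cpu ' /proc/stat)
    echo "iowait over the interval: $(iowait_pct "$a" "$b")%"
fi
```

Run it while the slow task is in progress. A large value alongside a mostly idle CPU points at the storage path rather than at encryption parameters.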

Controller choice first: NVMe vs SATA vs SCSI in VirtualBox (the biggest lever)

If you only change one thing, change this first. The storage controller is where “meh” becomes “why is this suddenly fast?”—especially for the small random I/O that Kali workflows generate (package installs, browser cache churn, Burp project writes, sqlite DBs).

What VirtualBox controllers really change (queueing, latency, interrupts)

Controllers aren’t just labels. They affect how requests are queued, how many can be in flight, and how the guest OS schedules I/O. That’s why two VMs on the same host disk can feel totally different. (And yes—if you ever got blocked by a VirtualBox storage misconfiguration like the Kali “No default controller” VirtualBox fix, that’s the “day one” issue that can poison every benchmark after it.)

When NVMe wins—and when it’s just noise

- NVMe often wins when your pain is lots of small random I/O (common in apt and tooling).

- SATA can be fine for simpler workloads or when the host/storage is the real limiter.

- SCSI (varies) can be useful but isn’t a guaranteed performance jackpot.

- Small random I/O pain → try NVMe first.

- Pure throughput pain → host disk and caching may dominate.

- Always benchmark before/after—don’t trust feelings.

Apply in 60 seconds: Note your current controller in VirtualBox settings, then plan one controlled switch and retest.
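For anyone who prefers the command line to the settings dialog, a controlled switch might look like the sketch below. Everything named here is a placeholder or an assumption: the VM name, the disk path, the existing controller being called "SATA", and the disk sitting on port 0. The script only prints the commands unless you set DRY_RUN=0. Before trying it for real, back up the VM folder, and check that the guest refers to its disks by UUID in /etc/crypttab and /etc/fstab, because the device name inside Kali is likely to change from /dev/sda to /dev/nvme0n1. Whether your VirtualBox version can boot from NVMe (and whether it needs EFI or an extension pack for that) is something to verify rather than assume.

```shell
#!/bin/sh
# Run on the HOST with the VM powered off. All names below are placeholders.
VM="${VM:-kali-encrypted}"            # hypothetical VM name
DISK="${DISK:-/path/to/kali.vdi}"     # hypothetical disk image path
OLD_CTL="${OLD_CTL:-SATA}"            # assumed name of the current controller

# Print each command; only execute it when DRY_RUN=0 is set explicitly.
run() {
    echo "+ $*"
    [ "${DRY_RUN:-1}" = 1 ] || "$@"
}

# 1) Record the current controller names and types
run VBoxManage showvminfo "$VM" --machinereadable

# 2) Add an NVMe controller alongside the existing one
run VBoxManage storagectl "$VM" --name NVMe --add pcie --controller NVMe --portcount 1 --bootable on

# 3) Move the disk: detach it from the old controller, attach it to NVMe
run VBoxManage storageattach "$VM" --storagectl "$OLD_CTL" --port 0 --device 0 --medium none
run VBoxManage storageattach "$VM" --storagectl NVMe --port 0 --device 0 --type hdd --medium "$DISK"
```

Rolling back is the same two storageattach calls in the opposite direction, which is part of why this counts as one lever: it is easy to undo and easy to benchmark in isolation.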

Curiosity gap: why the “fastest” controller can feel worse on your host

Here’s the twist: sometimes a controller switch exposes a host-side issue—like a slow external drive, host power-saving, or heavy host background indexing. The VM didn’t “get worse.” It just stopped masking the problem.

The caching paradox: the checkbox that helps—and the checkbox that hurts

Caching is where VirtualBox performance tuning becomes… emotional. Enable the wrong cache behavior and you can get stutters, flush storms, or the sensation that the VM “hangs” whenever it writes a lot. Enable the right behavior and suddenly your encrypted disk feels normal again.

“Use Host I/O Cache”: what it does in plain English

This setting influences whether the host OS page cache is used to buffer the VM’s disk operations. The host cache can smooth out bursty writes and reduce latency spikes—especially when the guest is doing many small writes.
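The same checkbox can be flipped from the host shell, which makes it easier to record exactly what changed between benchmarks. A small sketch with placeholder names: the VM name and controller name are assumptions, and `set_host_io_cache` is a hypothetical wrapper that only prints the command unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Run on the HOST with the VM powered off. Names are placeholders.
VM="${VM:-kali-encrypted}"    # hypothetical VM name
CTL="${CTL:-SATA}"            # assumed controller name; use the one your VM reports

# Print the command; only execute it when DRY_RUN=0 is set explicitly.
set_host_io_cache() {
    case "$1" in
        on|off) ;;
        *) echo "usage: set_host_io_cache on|off" >&2; return 2 ;;
    esac
    echo "+ VBoxManage storagectl $VM --name $CTL --hostiocache $1"
    [ "${DRY_RUN:-1}" = 1 ] || VBoxManage storagectl "$VM" --name "$CTL" --hostiocache "$1"
}

set_host_io_cache on
```

Whichever direction you flip it, rerun the same workload afterwards. The setting is per controller, so a VM with two controllers has two values to keep track of.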

Cache-flush storms: why “safer writes” can become “stutter city”

Some configurations force frequent flushes that translate into visible UI pauses. The VM isn’t “crashing.” It’s waiting. And waiting. And waiting. If you’ve ever watched your terminal stop responding mid-command while disk activity spikes, that’s the vibe.

Here’s what no one tells you… caching tweaks only matter after controller + disk format are sane

If you’re on a painful controller/disk combo, caching adjustments can feel like rearranging furniture in a burning building. Fix the big levers first, then use caching to stabilize the last 20%.

Show me the nerdy details

Cache behavior affects write ordering and flush frequency. High flush frequency can amplify the worst part of encryption-in-a-VM: small writes turning into expensive sync points. That’s why stutter can show up even when average throughput looks “okay.”

Disk type trap: dynamic growth + fragmentation that makes next month slower

This is the classic story arc: your encrypted Kali VM is fine in week one, tolerable in week three, and by week six you’re negotiating with your laptop like it’s a moody coworker. Dynamic disks are convenient—until they aren’t.

Dynamic vs fixed-size: where the performance tax hides

Dynamically allocated virtual disks grow as you write data. That growth can fragment over time depending on host filesystem behavior and where the VM file lives. The result: higher latency during real-world mixed workloads. You feel it as “random slowdowns,” especially when the VM writes lots of small files (package installs are basically a small-file festival).
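To find out which kind of disk you have, and to try a fixed-size copy without touching the original, something like this sketch could work. The paths are placeholders and the commands are only printed unless DRY_RUN=0 is set. A fixed-size clone needs the disk's full virtual size free on the host, so this is not a fix for a host volume that is already crowded.

```shell
#!/bin/sh
# Run on the HOST with the VM powered off. Paths are placeholders.
SRC="${SRC:-/path/to/kali.vdi}"           # hypothetical dynamically allocated disk
DST="${DST:-/path/to/kali-fixed.vdi}"     # hypothetical fixed-size copy

# Print each command; only execute it when DRY_RUN=0 is set explicitly.
run() {
    echo "+ $*"
    [ "${DRY_RUN:-1}" = 1 ] || "$@"
}

# 1) Inspect the disk; look for the line describing the format variant
run VBoxManage showmediuminfo disk "$SRC"

# 2) Clone into a fixed-size image; the original stays untouched as a fallback
run VBoxManage clonemedium disk "$SRC" "$DST" --variant Fixed
```

The clone is a block-level copy, so the LUKS container inside it is unchanged. After attaching the new image in place of the old one, rerun the baseline before deleting anything.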

VDI vs VMDK: portability vs performance reality

Use what fits your ecosystem, but understand the trade: formats optimized for portability aren’t always optimized for consistent low-latency I/O on your specific host. If your VM is a daily driver, “stable performance” usually beats “easy to move later.”

Curiosity gap: why your VM disk grows fine but performance shrinks monthly

Because growth is not free. Every “expand a little” moment can add overhead, especially if your host disk is under pressure. If you’re seeing worsening performance over time without changing encryption, disk growth/fragmentation is a prime suspect.

Short Story: The day my “secure VM” became unusable

I built a Kali VM with full-disk encryption, felt very responsible, and promised myself I’d keep it clean. Then the VM became my dumping ground: captures, wordlists, browser profiles, Burp projects, random scripts named “final2.sh,” the usual. For a while, it was fine, until it wasn’t. One morning, apt upgrade turned into a slow-motion film.

The UI stuttered when I typed, as if the keyboard needed encouragement. I blamed LUKS. I blamed Kali. I blamed the universe. The fix was humbling: the disk was dynamically allocated and had grown in a messy, fragmented way on a host drive that was also crowded. After moving the VM to a healthier host volume and switching to a more stable disk strategy, the same encrypted VM felt normal again. Security stayed. Suffering left.

Guest write storms: swap, browser caches, and “death by a thousand syncs”

Encrypted storage plus virtual disks can handle a lot—until the guest starts writing constantly for no good reason. This is where “it feels laggy while doing nothing” often lives.

Swap strategy in VMs (including when zram helps)

If your VM is swapping, disk latency becomes your new personality trait. In many Kali VM setups, a small amount of memory pressure creates disproportionate pain because swap writes are frequent and disruptive. Depending on your workload, using a compressed RAM swap approach (like zram) can reduce disk writes. The rule is simple: reduce disk writes when the disk is your bottleneck.
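Before reaching for zram, it helps to confirm that swap is actually in use. A minimal check inside the guest, reading /proc/meminfo; `swap_used_mib` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: report swap in use (MiB) from /proc/meminfo-style
# text on stdin. The kernel reports SwapTotal and SwapFree in kB.
swap_used_mib() {
    awk '/^SwapTotal:/ { t = $2 }
         /^SwapFree:/  { f = $2 }
         END { printf "%d\n", (t - f) / 1024 }'
}

if [ -r /proc/meminfo ]; then
    echo "swap in use: $(swap_used_mib < /proc/meminfo) MiB"
fi
```

A nonzero number only says swap has been used at some point. To see whether the VM is swapping right now, watch the si/so columns of `vmstat 1` during the slow task.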

Filesystem write-reduction basics (noatime, log settings—only what’s safe)

Some mounts and background services generate extra writes. Be conservative: you’re tuning for stability. Small, safe steps like reducing metadata writes (where appropriate) can help, but don’t turn your system into a science fair project.
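As a conservative first step, check what the root filesystem is already doing before changing anything. Linux has defaulted to relatime for a long time, which already avoids most access-time writes, so noatime tends to be a small refinement rather than a rescue. The helper name `atime_mode` below is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: classify access-time behaviour from a comma-separated
# mount-options string such as "rw,relatime,errors=remount-ro".
atime_mode() {
    case ",$1," in
        *,noatime,*)     echo noatime ;;
        *,relatime,*)    echo relatime ;;
        *,strictatime,*) echo strictatime ;;
        *)               echo default ;;
    esac
}

if command -v findmnt >/dev/null 2>&1; then
    echo "/ uses: $(atime_mode "$(findmnt -no OPTIONS /)")"
fi
```

If it already reports relatime or noatime, metadata writes from access times are unlikely to be your write storm, and iotop is the better place to keep looking.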

Burp/ZAP + wordlists: where real pentest workflows create I/O spikes

Burp and ZAP projects, browser profiles, and large wordlists can create bursts of small writes. If you’re running these on an encrypted VM disk that’s already queueing, you’ll feel it as stutter. If your workflow is browser-heavy, it also helps to keep your tooling predictable—things like a clean Burp Suite WebSocket workflow and a stable interception setup (especially on ARM) like fixing “Burp browser not available” on Kali ARM64 reduce the “mystery writes” you accidentally blame on encryption.

I keep one personal rule now: don’t benchmark on an “idle” VM you never use. Benchmark on the workflow you actually run.

- If swap is active, performance becomes unpredictable.

- Browser/tool caches can quietly dominate writes.

- Reduce churn first; then the “big levers” shine.

Apply in 60 seconds: Run iotop -oPa during a stutter and identify the top writer.

CPU crypto acceleration check: confirm you’re not running “encryption in slow motion”

Sometimes the VM is slow because encryption is running without hardware acceleration, or because virtualization settings prevent the guest from using what your CPU already has. It’s like owning a fast car but driving with the parking brake politely engaged.

Verify AES instructions + dm-crypt performance signal

    # Check if AES instructions are visible inside the guest
    # (-w matches the whole "aes" flag, not substrings such as "vaes")
    grep -m1 -ow 'aes' /proc/cpuinfo || echo "AES flag not found"

    # Quick cryptographic benchmark signal (shows relative capabilities, not your disk path)
    sudo cryptsetup benchmark

If AES flags are missing (or performance is unexpectedly low), it doesn’t automatically mean “bad CPU.” It can mean “guest isn’t seeing the right features.”
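The benchmark prints a long table, and the rows that usually matter for a default LUKS setup are the aes-xts ones. A small filter can pull them out so you can save them next to your I/O numbers. This is a sketch that assumes the usual column layout (algorithm, key size, encryption speed, decryption speed); `aes_xts_speeds` is a made-up name.

```shell
#!/bin/sh
# Hypothetical filter: extract aes-xts rows from `cryptsetup benchmark`
# text on stdin. Assumed row layout:
#   aes-xts   512b   <enc> MiB/s   <dec> MiB/s
aes_xts_speeds() {
    awk '$1 == "aes-xts" {
        printf "%s key: enc %s %s, dec %s %s\n", $2, $3, $4, $5, $6
    }'
}
```

Typical use would be `sudo cryptsetup benchmark | aes_xts_speeds`. To confirm which cipher your volume really uses, `sudo cryptsetup status <mapping-name>` prints it, where the mapping name is whatever appears under /dev/mapper on your install. If the in-memory figures are far above what fio measured on disk, encryption is not the limiting factor.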

VirtualBox settings that indirectly throttle encryption throughput

In VirtualBox, CPU and virtualization options affect how efficiently the guest runs crypto and manages I/O. Make sure hardware virtualization is enabled in BIOS/UEFI and that VirtualBox is configured to use it. If you’re on a laptop, also consider host power profiles—yes, really. I once fixed a “Kali encryption slowdown” by changing a host power setting that was downclocking under sustained load. Not glamorous, but effective.
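On the host, the VM's virtualization settings can be read from the machine-readable VM info instead of clicking through dialogs. The sketch below is a generic key lookup; the key names mentioned afterwards (hwvirtex, nestedpaging) are what I would expect to see in that output, so treat them as assumptions and check them against your own VirtualBox version.

```shell
#!/bin/sh
# Hypothetical filter: look up one key in `VBoxManage showvminfo --machinereadable`
# text on stdin. Lines in that output look like: key="value"
vbox_setting() {
    awk -F= -v key="$1" '
        $1 == key { gsub(/"/, "", $2); print $2; found = 1 }
        END { if (!found) print "not-reported" }'
}
```

For example, `VBoxManage showvminfo "kali-encrypted" --machinereadable | vbox_setting nestedpaging` (with your own VM name) should print on or off if the key exists. A result of "not-reported" means the key name differs on your version, not that the feature is disabled.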

The stealth bottleneck: when “encryption is slow” is really “CPU features aren’t exposed”

If your CPU is pegged during disk activity and iowait isn’t the star of the show, crypto acceleration and virtualization settings move up the priority list. This is also where switching controllers won’t magically help—because your disk isn’t the bottleneck anymore.

Show me the nerdy details

dm-crypt performance is influenced by CPU instruction availability and scheduling. In a VM, overhead and missing CPU flags can turn “fine on bare metal” into “why is this so loud and slow?” The solution is often enabling the right virtualization features and confirming the guest sees modern CPU instructions.

“Don’t do this” fixes: the mistakes that waste weekends (or risk data)

This is the part where I save you from my past self. The past self who thought, “I’ll just tweak a few things,” and then had no idea which tweak did what. The past self who changed encryption settings hoping for faster package installs. We don’t do that anymore.

Mistake: Throwing RAM/cores at an I/O bottleneck

If disk queueing is the pain, more cores don’t fix the queue. They just create more work to wait on. Add resources only after the storage path is sane.

Mistake: Changing LUKS settings hoping for faster apt

Unlock parameters affect unlock time. Your daily disk responsiveness usually depends more on controller choice, caching behavior, disk format, and write churn.

Mistake: Editing five knobs at once (benchmark theater)

Change one major variable, retest the same task, log the result. It’s not slower; it’s calmer. And calm wins.

Who this is for / not for (save the right people time)

For you if: VirtualBox + Kali + LUKS + daily tool workloads

- You run Kali as a daily VM, not a once-a-month lab.

- Your VM is encrypted (LUKS/LUKS2, often with encrypted LVM).

- Your pain shows up in apt, browsers, tool databases, or projects, especially if your setup looks like a real toolkit (see essential Kali tools you actually use and how they behave under disk pressure).

Not for you if: the host disk is near-full, failing, or thermally throttled

If your host is under disk pressure, you’ll fight symptoms forever. Fix the host foundation first. (I learned this after trying to “optimize” a VM sitting on a nearly full external drive. The VM did not respect my optimism.)

Not for you if: you can change hypervisors and want the fastest path

If you’re willing to switch hypervisors, you may get different performance characteristics—sometimes dramatically. If you’re weighing options, compare VirtualBox vs VMware vs Proxmox for a pentest lab and, if you’re on Mac, the real-world differences in VMware Player vs Workstation vs Fusion. But if you’re committed to VirtualBox (or need it), this guide stays inside that reality.

FAQ

Why is my Kali encrypted VM slow on VirtualBox but fine on bare metal?

Because the VM adds a storage translation layer: guest filesystem → dm-crypt → virtual disk → controller emulation → host filesystem → host disk. If any link is misconfigured (controller/caching/dynamic disk churn), latency spikes show up far more than on bare metal.

Does LUKS encryption slow down a VM, or just the unlock step?

Both can have costs, but they’re different. Unlock-time (PBKDF) affects how long it takes to unlock at boot. Runtime performance depends on dm-crypt plus the VM storage path. Many “slow VM” cases are storage stack issues, not encryption settings.

Is NVMe always faster than SATA for a Linux guest in VirtualBox?

Not always. NVMe often helps with small random I/O, but the host disk, caching behavior, and overall system pressure can dominate. The right answer is “try one controlled switch and benchmark.”

Should I enable “Use Host I/O Cache” for an encrypted VM?

It depends on your host storage and workload. Host caching can reduce latency spikes for bursty writes, but misconfiguration can also create stutters. Use the benchmark-first approach: baseline, change, retest.

Why does apt upgrade freeze my UI or make the VM stutter?

apt creates many small writes (package downloads, unpacking, metadata updates). If the disk queue is saturated, UI responsiveness suffers. It’s often a controller/caching/disk-format issue, sometimes combined with swap activity.

Do dynamic disks get slower over time in VirtualBox?

They can. As dynamically allocated disks grow, fragmentation and host filesystem behavior can increase latency—especially on busy or nearly full host volumes. If performance worsened gradually, this is a prime suspect.

Can TRIM/discard work with LUKS on a virtual disk?

TRIM/discard behavior is nuanced with encryption. In general, it’s possible for the guest to signal freed blocks, but whether it helps (and how it’s handled) depends on the whole stack. If you care about data-leak characteristics, be cautious and prioritize performance wins that don’t change discard behavior.

What’s the fastest benchmark to confirm disk I/O is the bottleneck?

Run iostat -xz 1 while reproducing the slowdown and look for high latency/queueing, then confirm with a short fio run targeting small random I/O. Repeat the same test after each change.

Is it safe to change encryption settings on an existing Kali install?

Some changes are safe; some are not. Treat encryption parameter changes as higher-risk than VirtualBox storage tweaks. If you’re not fully confident, keep LUKS settings stable and focus on the VM storage path first.

What’s a realistic “good enough” disk speed for pentest workflows?

“Good enough” means the VM feels responsive under your real workload: package operations don’t lock your UI, tools open without long stalls, and disk latency doesn’t spike into stutter territory. Your best target is consistency, not bragging rights. (And if your day-to-day stack includes proxy tooling, a clean network path matters too—e.g., a Proxychains DNS leak fix prevents “performance debugging” from turning into “why is everything routing weird?”)

Next step: the 15-minute “keep LUKS, fix I/O” action plan

This is the “do it now” section. Not someday. Not after your fifth cup of coffee. In the next 15 minutes, you can go from confused and annoyed to measured and in control.

Measure: one benchmark + one real task (before)

- Pick one real task (e.g., timed apt upgrade or opening a Burp project).

- Run iostat -xz 1 during the task and note latency/queueing.

- Run one short fio test (30 seconds). Save the output.

Change in order: controller → caching → disk type → guest write storm

- Controller: Evaluate NVMe vs your current controller (one change at a time).

- Caching: Adjust host I/O cache behavior only after controller is settled.

- Disk strategy: If performance decayed over time, consider a more stable disk approach.

- Write storms: Reduce swap and background churn if iowait/stutter persists.

Re-test: same benchmark + same task (after), record the delta

Same test. Same duration. Same workload. The only difference should be your one change. This is how you build confidence without gambling with your VM.
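The delta itself is simple arithmetic: time saved divided by the original time. A tiny helper keeps it consistent across runs; `pct_saved` is a hypothetical name, and both arguments just need to share a unit.

```shell
#!/bin/sh
# Hypothetical helper: percentage saved going from a "before" time to an
# "after" time (same unit, e.g. seconds). Negative means it got slower.
pct_saved() {
    awk -v b="$1" -v a="$2" 'BEGIN {
        if (b <= 0) { print "n/a"; exit }
        printf "%.1f\n", 100 * (b - a) / b
    }'
}
```

For instance, `pct_saved 120 84` prints 30.0: an upgrade that took 120 seconds before and 84 seconds after is 30% faster.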


Neutral next step: Log the number in your notes so you can compare future tweaks.

Conclusion: keep LUKS, get your VM back

Let’s close the loop from the top: you don’t need to “pay” for encryption with a miserable VM. Most of the time, the real villain is boring—controller choice, caching behavior, disk strategy, and write churn. The win is also boring, which is the best kind of win: measure → change one lever → retest.

If you do only one thing in the next 15 minutes, do this: pick one real workload (like apt upgrade or opening a Burp project), baseline it, then make one storage-stack change and retest. When you can say, “This change saved me 30%,” you stop guessing—and your VM stops feeling like it’s dragging a piano. And if this VM is part of a larger practice routine, anchoring the rest of your workflow—like a Zsh setup for pentesters and a consistent lab environment (see Kali Linux lab infrastructure mastery)—keeps “performance debugging” from becoming your new full-time hobby.

Last reviewed: 2025-12.