Ship Fast, Stay Secure: The One-Hour MVP Threat Model

Most startup teams don’t need a heavyweight threat program to avoid their first security fire—they need one focused hour before launch. This MVP-stage threat modeling approach turns security from vague worry into a practical, one-page decision tool your team can run every sprint.

The real pain isn’t a lack of knowledge; it’s shipping pressure and fuzzy ownership. In early SaaS builds, this gap is where auth abuse and data leakage quietly slip into production. Delaying security means the bill arrives later as incident cleanup and lost customer trust during your critical growth window.

Identify top abuse paths fast, score risk with a lightweight method, and ship with:

- 3 Concrete Controls

- 3 Owners

- 3 Due Dates

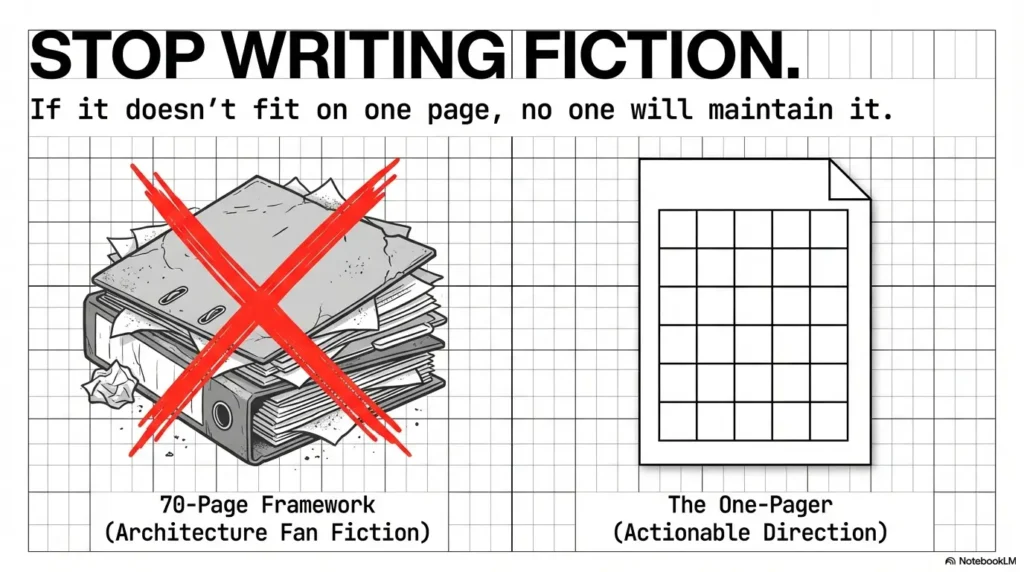

No 70-page framework. No checkbox theater. Just high-risk paths, effective mitigations, and a repeatable launch gate built for real MVP execution: crown-jewel assets, API exposure, and session hardening.

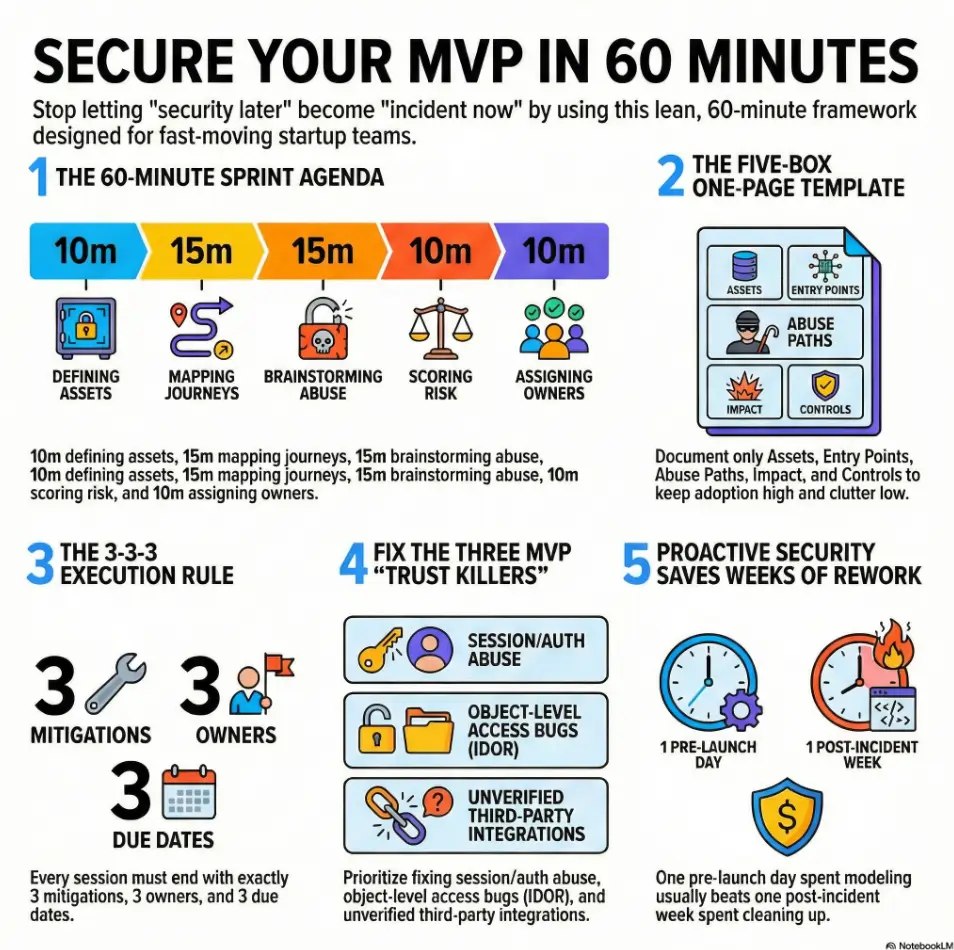

MVP-stage threat modeling can be done in 60 minutes with a one-page template: define your top user flows, list assets, identify likely threats (by abuse path), assign quick risk ratings, and pick 3 “must-do now” controls before launch. Keep it lightweight, repeat every sprint, and focus on preventing the most expensive early failures: auth abuse, data leakage, and insecure defaults.

Table of Contents

Why 60 Minutes Works at MVP Stage

The startup reality: speed beats perfection

I’ve never seen an MVP team fail because they lacked a fourth risk scoring dimension. I have seen teams fail because nobody owned login abuse until users started posting screenshots on X. A 60-minute format works because it respects reality: tiny teams, moving target requirements, and product pressure that does not care about your “someday security roadmap.”

In practice, short sessions force decisions. You identify the highest-risk path, pick the smallest effective control, and ship with eyes open. That’s the point. Not perfection. Direction.

The hidden cost of “we’ll secure it later”

“Later” sounds cheap. It almost never is. Fixing auth logic after launch can involve session invalidation, support tickets, trust repair emails, and emergency patch cycles that consume a full sprint. One founder once told me, “we saved two days by skipping the model and lost three weeks cleaning it up.” That ratio is brutally common.

What “good enough for launch” actually means

Good enough does not mean “no known risk.” It means:

- Your crown-jewel assets are explicitly named.

- Your top abuse paths have concrete mitigations.

- Every unresolved high-risk item has an owner and date.

NIST frames risk assessment as a decision-support mechanism, not a paperwork ritual, which aligns perfectly with this MVP approach.

- Time-box to 60 minutes.

- Prioritize top 3 risks, not all risks.

- Assign owners before ending the meeting.

Apply in 60 seconds: Put a recurring 60-minute threat-model block in your sprint calendar now.

Use This One-Page Model Before You Write More Code

What goes on the page (and what doesn’t)

One page. Five boxes. No architecture fan fiction. If your team needs to scroll, you’ve already lost adoption.

Include only what drives decisions this sprint. Exclude legacy trivia, hypothetical nation-state scenarios, and “future design sketches” without code paths.

The 5 boxes: Assets, Entry Points, Abuse Paths, Impact, Controls

Use this one-page template exactly:

One-Page Threat Model (MVP)

- Assets (Crown Jewels)

User PII, auth tokens, billing events, admin actions, source secrets. - Entry Points

Signup/login, public APIs, file upload, webhooks, admin panel, third-party callbacks. - Abuse Paths

“Attacker does X via Y to cause Z.” Keep each path to one sentence. - Impact

User harm, trust damage, outage, cost spike, contract risk. - Controls (Now / Next / Later)

Now = pre-launch must-do; Next = sprint+1; Later = documented debt.

Let’s be honest… if it doesn’t fit on one page, no one will maintain it

A quick memory: I once helped a team replace a beautiful 28-slide “threat deck” with one page on a whiteboard. Meeting time dropped from 2 hours to 45 minutes. Fix closure rate doubled in two sprints. Nothing magical happened. We just removed performative complexity.

Output in 60 minutes: 3 mitigations, 3 owners, 3 due dates.

Show me the nerdy details

The model uses a constrained inventory-and-abuse method: enumerate assets and externally reachable paths, then map concrete misuse chains. This is intentionally closer to attack-path analysis than full architectural decomposition, because MVP teams need high signal density per minute.

Who This Is For (and Not For)

Best fit: pre-seed to Series A teams shipping fast

If your team is 2–25 people and you release weekly, this is your lane. You need a repeatable, low-ceremony security rhythm that fits product reality.

Not a fit: regulated workloads needing formal audits

If you’re in heavily regulated healthcare, core fintech infrastructure, or government workloads, this method can be your fast pre-filter—but not your entire compliance strategy. You’ll likely need formal control mapping and deeper evidence collection. If your roadmap includes compliance milestones, align this sprint ritual with a realistic SOC 2 budget planning baseline early so security work doesn’t become a surprise line item.

Solo founder vs. small team: how ownership changes

Solo founder: you wear PM + engineer + incident commander hats. Keep control count brutally small. Small team: split ownership by domain (API, frontend, cloud config). The model works in both cases; the ownership map is what changes.

- One owner per top risk.

- One due date per mitigation.

- One review cadence per sprint.

Apply in 60 seconds: Add an owner field to every security ticket template.

The 60-Minute Sprint: Minute-by-Minute Agenda

0–10 min: define crown-jewel assets

Ask: “If this leaks, what hurts users fastest?” Usually: session tokens, PII, billing integrity, privileged actions. Keep asset list to 5–7 items max.

10–25 min: map top 3 user journeys

Pick journeys that move revenue or trust: onboarding, checkout, admin change flows. Don’t map every click. Map where attackers can change identity, permissions, or money.

25–40 min: brainstorm realistic attacker moves

Phrase each abuse path like this: “Attacker submits manipulated object ID on /api/orders/{id} to read another tenant’s invoice.” Keep it behavior-first and specific.

40–50 min: score risk fast (likelihood × impact)

Use 1–3 scale to keep momentum. If likelihood=3 and impact=3, it’s a pre-launch block unless there is a temporary compensating control.

50–60 min: choose top 3 mitigations and owners

Pick the smallest controls that close the biggest holes: token expiry tuning, object-level authorization checks, webhook signature validation, sane rate limits. As mitigations land, pair this with clear vulnerability remediation SLA targets so fixes don’t drift into “someday.”

Mini Calculator: Fast Risk Score

Input 1: Likelihood (1–3)

Input 2: Impact (1–3)

Output: Score = Likelihood × Impact

Interpretation: 1–2 = monitor, 3–4 = schedule next sprint, 6–9 = pre-launch action.

Neutral next action: Compute scores for your top five abuse paths and rank them.

When teams ask whether this “counts” as real risk assessment, point them to the principle: structured risk decisions with documented rationale. That is exactly the muscle you’re building.

Start With Abuse Paths, Not Framework Jargon

“How could this be misused?” beats “Which model are we using?”

I love good frameworks, but jargon can become procrastination in a nice blazer. Teams shipping MVPs need attack-path clarity, not taxonomy debates.

Use one standing prompt in meetings: “How would I break this in under 10 minutes if I were angry and bored?” It cuts through ceremony fast.

STRIDE-lite for MVPs (without the ceremony)

Borrow STRIDE logic lightly:

- Spoofing → auth/session abuse

- Tampering → parameter manipulation, webhook forgery

- Repudiation → missing audit trail for sensitive actions

- Information disclosure → IDOR/object leaks

- Denial of service → unbounded requests/cost blowups

- Elevation of privilege → role boundary bypass

OWASP’s API Security Top 10 keeps this concrete for modern SaaS teams, especially around broken object-level authorization and broken authentication. For teams building or reviewing endpoint-heavy products, this pairs well with a practical web exploitation essentials checklist mindset that emphasizes testable abuse cases over theory.

Curiosity loop: the one endpoint everyone forgets to threat-model

Webhooks and admin “support endpoints.” Almost every team models login and checkout. Fewer teams model support tooling with the same rigor. That blind spot is where “internal only” turns into “incident report.”

Show me the nerdy details

Abuse-path-first sessions produce better prioritization because paths already encode exploit preconditions and blast radius. Framework labels can be added afterward for documentation if needed, but path-first ordering improves fix velocity.

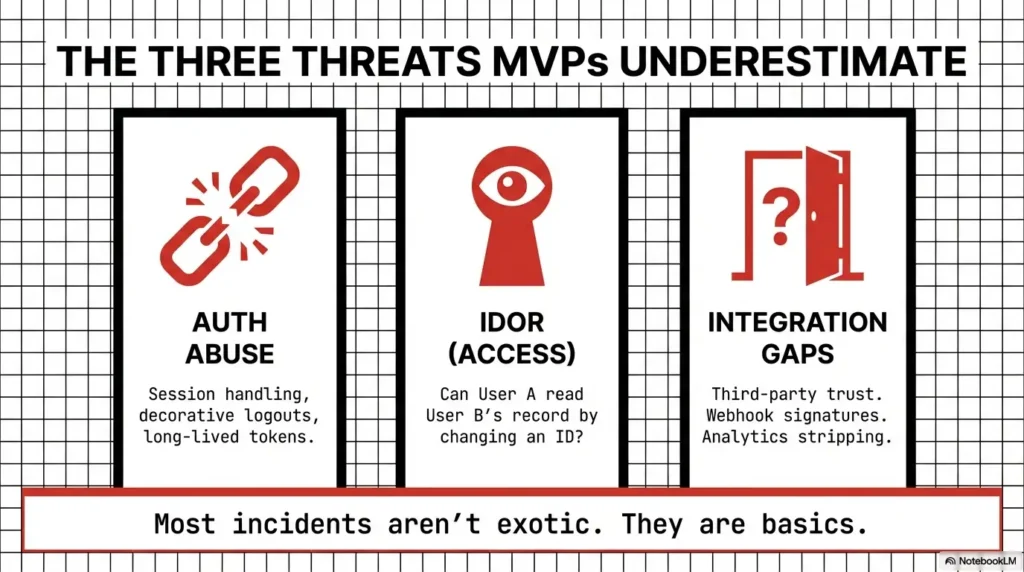

The Three Threats Most MVPs Underestimate

Session and auth token mishandling

Classic patterns: long-lived tokens, weak invalidation on password reset, inconsistent refresh token revocation, and insecure defaults in auth middleware. I once saw a team discover “logout” was decorative—sessions stayed valid across devices for days.

Object-level access bugs (IDOR-style exposure)

When IDs are guessable or authorization checks happen only at UI level, one crafted request can cross tenant boundaries. OWASP lists this class at the top for a reason; it is frequent, exploitable, and trust-destroying.

Third-party integration trust gaps

Every integration is a transitive trust decision: payment providers, analytics, CRM syncs, customer support widgets, AI tools. If webhook signatures aren’t validated, or scopes are overbroad, you’ve imported risk at high speed.

Here’s what no one tells you… your analytics script can widen your attack surface

Analytics and tag manager tools are useful, but they can introduce sensitive data exposure paths, performance regressions, and dependency risk. Keep events minimal, strip secrets, and audit what gets sent from client and server.

Eligibility Checklist: Ready for MVP Launch?

- Yes/No: Token lifetime and revocation policy documented

- Yes/No: Object-level authorization tested on top 3 APIs

- Yes/No: Webhook signatures verified and replay-protected

- Yes/No: Sensitive events redacted in analytics payloads

Neutral next action: Any “No” becomes a ticket with owner and due date before release.

- Harden sessions first.

- Test object-level access explicitly.

- Treat third-party callbacks as untrusted input.

Apply in 60 seconds: Add one negative test: “Can user A read user B’s record by ID?”

Common Mistakes That Create Expensive Rework

Mistake #1: modeling architecture, not user behavior

If your model starts with boxes and arrows but no concrete user journeys, your controls drift into abstraction. Threats happen in behavior, not diagrams.

Mistake #2: treating “internal tools” as trusted by default

Internal tools are often under-tested, over-permissioned, and reachable through VPN assumptions that age badly. Model them as high-impact surfaces.

Mistake #3: “we’ll add rate limiting after launch”

This sentence has started more emergency on-call nights than caffeine. Unrestricted resource consumption is both reliability and cost risk. OWASP calls this out directly for APIs.

Mistake #4: no owner, no deadline, no fix

Security debt without ownership is just a hope document. A mitigation without a date is a wish. A wish is not a control.

Decision Card: Fix Now vs Defer

Fix Now if exploit path is public-facing, easy to automate, and impacts identity/data.

Defer only if blast radius is limited, compensating control exists, and debt is documented with a due sprint.

Time/Cost Trade-off: One pre-launch day usually beats one post-incident week.

Neutral next action: Tag each finding “Fix Now” or “Defer with compensating control.”

Don’t Do This: Security Theater at MVP Speed

Copy-paste checklists with zero product context

A generic list can’t tell you that your public invite flow plus weak role checks equals tenant takeover. Context matters more than checkbox volume.

Risk scoring so complex no one uses it

If scoring requires a mini course, it will be skipped by sprint three. Keep it simple, and rerun often. One practical approach is to track only a handful of leading indicators in a founder-facing dashboard; if you need a model, use security metrics for founders that tie directly to release decisions.

“Pass/fail” thinking instead of top-risk reduction

Security is not an exam you pass once. It’s a queue you manage continuously. Reduce the worst risks first, then keep moving.

Short Story: The launch that almost became a postmortem

A four-person B2B SaaS team I worked with had two days to launch a pilot. They had crisp onboarding, slick analytics, and one hidden problem: admin role changes were trusted on the client. During our 60-minute threat sprint, an engineer casually asked, “What if someone intercepts this request and flips role_id?” Silence. Then panic.

We added one server-side authorization check, one audit log for role changes, and one alert on unusual admin activity. Total engineering time: about 3.5 hours. Three weeks later, an enterprise prospect asked a hard question about admin abuse controls during security review. They answered confidently, passed the review, and closed the pilot. No fireworks. Just one boring, practical check that protected trust when it mattered most. That’s the whole game at MVP stage: tiny controls, outsized consequences.

Show me the nerdy details

Security-by-design guidance emphasizes shifting responsibility from end users to software makers and reducing exploitable defaults. For MVP teams, this translates to safer defaults in auth, permissions, and configuration before broad exposure.

Turn Findings Into a Pre-Launch Security Gate

Define a minimum security bar for release

Your gate can be tiny and effective:

- No unresolved high-risk auth or authorization findings.

- Critical logs enabled for sensitive actions.

- Top three abuse paths have implemented controls.

Convert each risk into a ticket with acceptance criteria

Bad ticket: “Improve auth security.” Good ticket: “Refresh token invalidates on password change; integration test fails if old token still accepted.” Precision reduces debate and rework.

What to defer safely (and how to document that debt)

Deferral is fine when explicit. Record: risk statement, compensating control, owner, due sprint, and trigger for re-evaluation (traffic threshold, enterprise customer, new integration).

Open loop: which one unresolved risk can kill trust fastest?

Ask this before every release. If the answer is “cross-tenant data exposure,” your next engineering hour already has a job.

Quote-Prep List: Gather Before Buyer Security Review

- Most recent one-page threat model (dated)

- Top 3 mitigations implemented + PR links

- Auth/session policy summary

- Access control test evidence for key APIs

- Incident response owner + escalation path

Neutral next action: Store these five artifacts in one shared folder before outbound enterprise calls.

When procurement gets involved, preparing responses in advance with a concise vendor security questionnaire playbook can turn long security back-and-forth into a faster, cleaner close cycle.

Next Step: Do This in the Next 24 Hours

Book one 60-minute threat-model sprint on your top onboarding flow

Pick one journey that touches identity and data. Invite exactly the people who can change code this week: one engineer, one PM/founder, one person who knows infra. Keep the room small; results grow.

Leave the session with exactly 3 mitigations, 3 owners, 3 due dates

This is your execution contract. Not 14 “nice ideas.” Three decisions that reduce real risk before users feel it first.

For practical, widely adopted API risk language your team can align on fast, OWASP’s API Security project is a strong reference baseline.

As you operationalize this, connect technical fixes to business commitments with a lightweight penetration testing SOW template, and ensure legal language around scope and outcomes is clear using a practical guide to pentest limitation of liability clauses.

And when launch timing gets tight, a simple pre-release checklist inspired by an OSCP-style VM lockdown checklist can help teams verify hardening basics before they go public.

FAQ

Do US startups need threat modeling before SOC 2?

It may not be explicitly mandated in that exact phrase, but buyers and auditors care whether you identify and manage security risk systematically. A lightweight threat model provides concrete evidence that risk decisions are intentional, not accidental.

How is this different from a penetration test?

Threat modeling is proactive design-time risk identification. Pen testing is point-in-time validation against running systems. Think of threat modeling as choosing where to reinforce the door, and pen testing as paying someone to try kicking it in. If you need a buyer-friendly explanation for non-technical stakeholders, this overview of penetration testing vs vulnerability scanning helps set clean expectations.

Can we do this without a dedicated security engineer?

Yes. Most MVP teams do. The key is a repeatable format, narrow scope, and clear ownership. Security specialists help, but consistency matters more than titles at this stage.

How often should MVP teams repeat the one-page model?

At minimum once per sprint or whenever one of these changes: auth flow, data model, major integration, or public exposure level. A monthly cadence is a floor, not a ceiling.

What’s the minimum evidence to show investors or enterprise buyers?

A dated one-page model, top risks with owners, mitigations implemented, and proof of retest. Keep it concise and current. Stale evidence hurts credibility more than short evidence.

Is this enough for HIPAA- or fintech-adjacent products?

Usually not by itself. Use this as your operational baseline, then layer formal control frameworks, legal/compliance review, and deeper assurance processes as required by your domain.

Which roles should join the 60-minute session?

One decision-maker (founder/PM), one engineer owning the target flow, and one engineer with backend/infra visibility. Optional: designer or support lead if they influence account recovery and admin operations.

Should we block launch if one high-risk item remains?

If it impacts identity, authorization boundaries, or cross-tenant data exposure, yes—block or add a strong compensating control with explicit time-bound remediation. Trust is easier to keep than to recover.

How do we prioritize security when roadmap pressure is high?

Use risk-to-effort ranking: choose controls with high risk reduction per engineering hour. The best early controls are boring, testable, and hard to bypass.

What tool should we use: doc, board, or spreadsheet?

Use whatever your team updates weekly. A plain doc is often enough. If you track many findings, add a board. If you need scoring history, use a spreadsheet. Tool choice is secondary to cadence and ownership.

Final word: The curiosity loop from the opening was simple: can a fast team protect trust without heavyweight ceremony? Yes—if you commit to one page, one hour, and one habit: convert risk into owned action before launch. You don’t need a perfect model. You need a living one.

In the next 15 minutes, do one thing: open your calendar, schedule a 60-minute threat-model sprint for your highest-value onboarding flow, and pre-create three empty tickets labeled Owner, Due date, and Acceptance test. Your future self on incident day will quietly thank you.

Last reviewed: 2026-02.