The Startup-Proof Pen Test Statement of Work (SOW)

A penetration test can be “done” and still leave you exposed—not because the technical findings failed, but because the contractual guardrails weren’t there.

This template is built for the moment every startup hits: one extra endpoint, one vague rule, or a report filled with screenshots but zero answers. If your scope, Rules of Engagement (ROE), and evidence handling aren’t locked down, you risk downtime, authorization disputes, and audit-unfriendly deliverables right before a major launch.

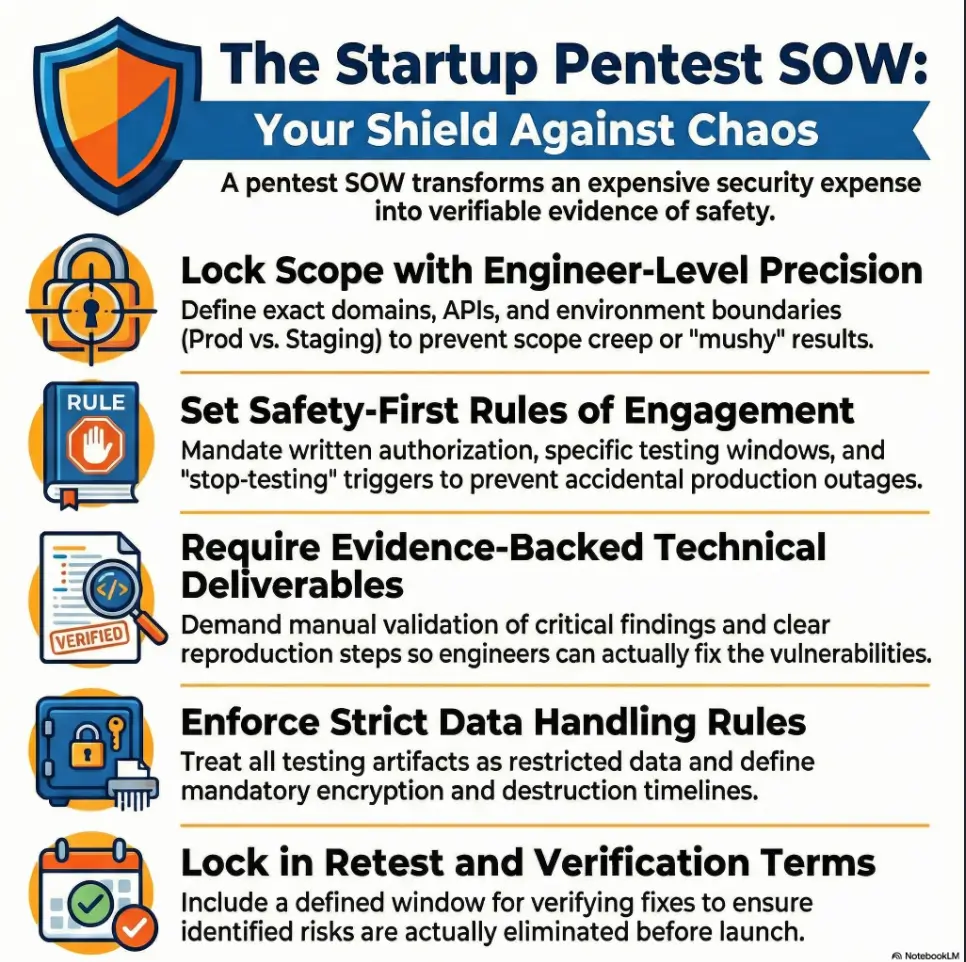

A Pen Test SOW defines exactly what is tested, the methodology used, what “done” looks like, and how sensitive artifacts are handled. It prevents scope creep, protects production operations, and creates defensible proof for SOC 2, customers, and insurers.

This template provides a 12-clause spine to lock your methodology, escalation paths, retest terms, and liability boundaries—enabling you to negotiate fast without gambling with your production environment. (If you want a practical “what the deliverable should look like” reference, compare your vendor’s output against a pentest report template so you can spot scan-and-screenshot vibes early.)

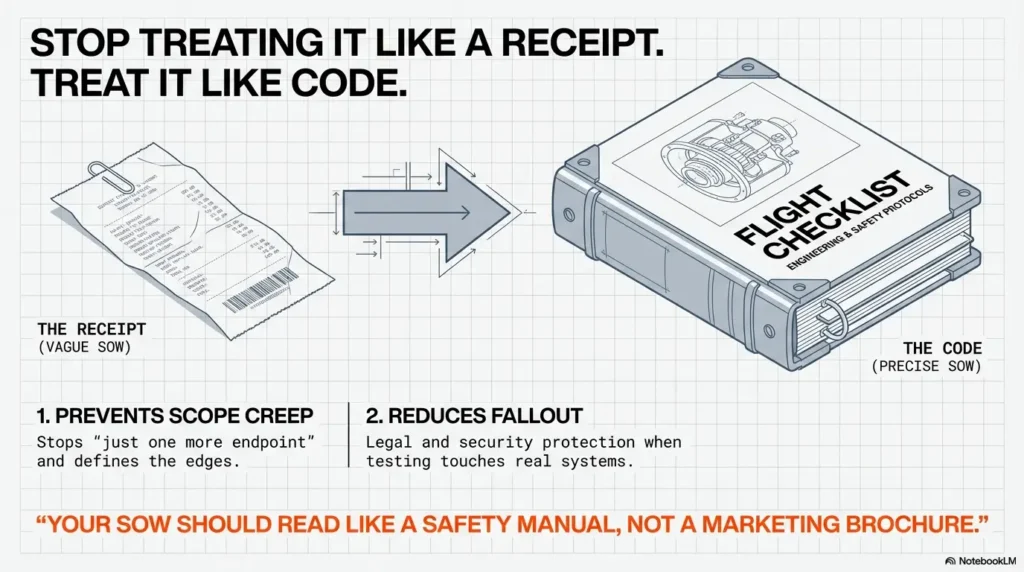

A strong Pen Test SOW protects you twice: it prevents scope creep and reduces legal/security fallout when testing touches real systems. At minimum, your SOW should lock scope, rules of engagement, timelines, deliverables, evidence handling, access, confidentiality, liability, pricing, retest terms, ownership, and comms/escalation. If any clause feels “obvious,” that’s exactly where startups get burned—quietly, expensively, and right before a launch.


Safety / Disclaimer (read before you copy-paste)

This is general information, not legal advice. A penetration test can create real operational and legal risk: downtime, data exposure, and “who authorized what” disputes. Have counsel review your final SOW—especially the language for authorization, liability limits, indemnity, confidentiality, and data handling. Also: if your vendor asks for broad access to identity (SSO/IAM) or cloud control planes, treat that as a board-level decision, not a hallway yes.

- Operational safety: unclear rules can cause accidental load, lockouts, or cascading alerts.

- Legal safety: vague authorization can turn legitimate testing into a messy argument later.

- Evidence safety: screenshots, packet captures, and exports can be more sensitive than the vulnerabilities.

- Explicit written authorization is non-optional.

- Define what “stop testing” looks like before anyone starts.

- Treat testing artifacts as restricted data by default.

Apply in 60 seconds: Add a one-sentence “Authorization + Window” line you can point to during an incident.

Who this is for (and who it isn’t)

If you’re a US-based startup buying a pentest for SOC 2 readiness, ISO 27001 prep, a customer security review, cyber insurance, or a board request—this is for you. You want a vendor-ready SOW skeleton that you can negotiate in one meeting, with enough precision that engineers don’t roll their eyes and counsel doesn’t panic.

For you if…

- You need clarity on what testers can touch (and what they absolutely can’t).

- You’re worried about scope creep disguised as “just one more endpoint.”

- You want a report you can actually fix from—not just file away (use a professional pentest report format as a baseline for what “fixable” looks like).

Not for you if…

- You need a full Master Services Agreement rewrite (that’s a separate document with its own negotiation).

- You’re doing bug bounty only (different rules of engagement).

- You’re in an active incident (you want IR + forensics, not a standard pentest).

A quick scene (composite, but painfully common): the founder buys a “pentest,” the vendor runs tools against the login page, the report arrives two days later, and the enterprise buyer asks one question: “Did you test authorization beyond the UI?” The room goes quiet. The SOW never required it—so nobody did it. That’s not a security failure; it’s a purchasing failure.

Scope lock: what’s in-bounds (and what’s not)

Scope is where most pentests become either magic or mush. Your job is to describe targets like an engineer, not a poet. The vendor’s job is to tell you what depth is realistic inside your timeline and budget. The SOW is where those meet—with edges, exclusions, and a shared definition of “coverage.”

Define targets like an engineer

- Asset list: domains, subdomains, IP ranges, apps, APIs, mobile packages, cloud accounts/tenants, admin panels.

- Environment clarity: production vs staging vs sandbox (and which is allowed).

- Auth boundaries: which roles will be tested (e.g., user, manager, admin) and what “admin” actually means.

The scope edges most startups forget

- Third-party dependencies: Auth providers, payment processors, messaging, CI/CD, ticketing, analytics, CDN/WAF.

- Shadow surfaces: legacy endpoints, “old” mobile builds, forgotten subdomains, internal dashboards.

- Tenant boundaries: multi-tenant systems need explicit “no-cross-tenant” guardrails and test accounts.
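One way to keep the asset list and exclusions from drifting into interpretation is to write them down in a form that can be checked mechanically. The sketch below is only an illustration: the `Scope` structure, the `is_in_scope` helper, and every hostname and IP range in it are hypothetical, and it assumes explicit exclusions always win over inclusions.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class Scope:
    """Hypothetical in-memory form of the SOW's asset list and exclusions."""
    domains: set = field(default_factory=set)           # exact hostnames in scope
    wildcard_domains: set = field(default_factory=set)  # parents whose subdomains are in scope
    networks: list = field(default_factory=list)        # ipaddress networks in scope
    excluded: set = field(default_factory=set)          # explicit exclusions (hosts or IPs)


def is_in_scope(target: str, scope: Scope) -> bool:
    """Return True only if the target is listed and not explicitly excluded."""
    target = target.lower().rstrip(".")
    if target in scope.excluded:
        return False  # assumption: exclusions always override inclusions
    try:
        addr = ipaddress.ip_address(target)
    except ValueError:
        addr = None  # not an IP literal, so treat it as a hostname
    if addr is not None:
        return any(addr in net for net in scope.networks)
    if target in scope.domains:
        return True
    return any(target.endswith("." + parent) for parent in scope.wildcard_domains)


# Example values use documentation-reserved names and ranges, not real assets.
scope = Scope(
    domains={"app.example.com"},
    wildcard_domains={"api.example.com"},
    networks=[ipaddress.ip_network("203.0.113.0/24")],
    excluded={"legacy.api.example.com", "203.0.113.10"},
)
```

Anything the vendor discovers mid-test that fails this check is a change-order conversation, not a silent addition.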

The one sentence that decides whether you get value

Add a short Test Objectives paragraph. Not “run OWASP Top 10.” Instead: “Validate that a low-privilege user cannot access another tenant’s invoices,” or “Attempt privilege escalation from support tools to production admin.” Objectives force the vendor to prioritize real attack paths, not just a checklist of activities. (If you’ve ever watched someone burn hours chasing the wrong path, codify your “don’t spiral” principle—this is basically the contract version of the OSCP rabbit hole rule.)

- Yes/No: Do you have a clean asset inventory (domains, apps, APIs, cloud tenants) you can share today?

- Yes/No: Can you provide at least 2–3 test roles (user/admin) within 48 hours?

- Yes/No: Do you know whether prod is allowed, and under what window?

- Yes/No: Do you have an on-call contact during testing hours?

Neutral next step: If you answered “No” to any item, bake the missing piece into the SOW prerequisites.

Rules of Engagement: the clause that keeps your pager quiet

Rules of Engagement (ROE) is where you stop pretending the test is “just a scan.” This is the part that prevents accidental outages and awkward legal conversations. If ROE is vague, your ops team becomes the incident response team—without consent, without context, and usually without sleep.

Written authorization (don’t rely on email vibes)

- Explicit permission to test identified assets during defined windows, signed by an authorized company representative.

- Named points of contact (primary + backup) with phone/Signal number for urgent escalation.

- Testing window with timezone, plus “quiet hours” if you have sensitive batch jobs.

Safety rails that prevent accidental outages

- No destructive payloads and no stress testing unless it’s a separate engagement with its own controls.

- Rate limits for API calls and login attempts to avoid lockouts and WAF meltdowns (pair this with a defined discovery cadence so you don’t get “scan noise” misread as signal—very similar to how you avoid false positives in service detection when you’re validating what’s real).

- Opt-in only: phishing, social engineering, physical testing, and anything involving staff.
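To make “rate limits for login attempts” concrete, it can help to picture what a compliant test harness would have to do. The sketch below is an assumption about how such a limit might be enforced, not a description of any vendor’s tooling: `AttemptBudget` is a hypothetical helper that caps attempts per account and enforces a minimum gap between any two attempts.

```python
import time


class AttemptBudget:
    """Hypothetical limiter: a per-account attempt cap plus a global minimum interval."""

    def __init__(self, max_attempts_per_account, min_interval_s,
                 clock=time.monotonic, sleep=time.sleep):
        self.max_attempts = max_attempts_per_account
        self.min_interval_s = min_interval_s
        self._clock = clock      # injectable so the limiter can be tested without waiting
        self._sleep = sleep
        self._used = {}          # account -> attempts already made
        self._last = None        # time of the most recent attempt, if any

    def acquire(self, account):
        """Return False if the account's cap is spent; otherwise wait if needed and return True."""
        used = self._used.get(account, 0)
        if used >= self.max_attempts:
            return False
        if self._last is not None:
            wait = self.min_interval_s - (self._clock() - self._last)
            if wait > 0:
                self._sleep(wait)
        self._last = self._clock()
        self._used[account] = used + 1
        return True
```

The two numbers passed to the constructor are exactly the values the ROE should state in writing, ideally set below your identity provider’s lockout threshold.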

Let’s be honest: if your ROE says “reasonable efforts” and nothing else, you’re betting your uptime on two strangers having the same definition of “reasonable.”

Short Story: The “harmless” brute-force

A startup once allowed “credential testing” with no rate limit language. The vendor’s tooling was polite in their lab, less polite in the real world. Their SSO provider flagged anomalies, temporarily throttled logins, and support tickets began arriving like rain: “I can’t access my dashboard.” The testers, meanwhile, saw the throttling as a security control worth noting and continued to probe around it. Nobody was malicious; everyone was busy.

The problem was the SOW: it didn’t define lockout thresholds, it didn’t set stop-testing triggers, and it didn’t require immediate escalation when authentication instability appeared. The fix took 30 minutes—after three hours of confusion. Later, the founder said the quiet part out loud: “I thought ROE was boilerplate.” It wasn’t boilerplate. It was the steering wheel. (If you want a clean way to enforce “timeboxed aggression” without collateral damage, borrow the mindset behind timeboxing Hydra—the technique is less important than the boundary.)

Methodology + standards: how you avoid scan-and-screenshot reports

“We follow best practices” is not a methodology. In a good SOW, methodology is verifiable: what gets tested, how depth is proven, and how findings are validated without causing harm. OWASP’s Web Security Testing Guide (WSTG) is widely used as a practical framework for web and API testing, and the Penetration Testing Execution Standard (PTES) is commonly referenced for end-to-end penetration testing phases. For planning and conducting technical testing, NIST SP 800-115 (Technical Guide to Information Security Testing and Assessment) covers structured security testing and assessment processes. Your SOW doesn’t need to worship any one document; it needs to make the vendor’s approach inspectable.

Require a recognized approach (and make it measurable)

- State the approach (web/app/API/mobile/cloud) and what “coverage” means in that approach.

- Require manual validation for any high/critical finding (no tool-only severity claims).

- Define expectations for authenticated vs unauthenticated testing.

API-specific depth guarantees

- Auth flows: tokens, refresh logic, session invalidation, impersonation risks.

- Authorization: object-level access control, role checks, tenant isolation.

- Abuse cases: rate limits, replay, mass assignment, data filtering, export endpoints.
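The authorization bullet is the one most often skipped, so it may help to see what a safe object-level check looks like in miniature. This is a sketch under stated assumptions: `find_cross_tenant_leaks` and `fake_fetch` are hypothetical, the invoice IDs are made up, and a real test would replace `fake_fetch` with an actual HTTP request made from a test account in tenant A against objects owned by a test tenant B.

```python
from typing import Callable, Iterable, List


def find_cross_tenant_leaks(fetch: Callable[[str, str], int],
                            token: str,
                            foreign_object_ids: Iterable[str]) -> List[str]:
    """Return IDs of other-tenant objects the given token can read.

    `fetch(token, object_id)` is assumed to return an HTTP status code.
    Only the status is inspected, so no response data is collected.
    """
    leaks = []
    for object_id in foreign_object_ids:
        status = fetch(token, object_id)
        if 200 <= status < 300:  # any success on a foreign object is a finding
            leaks.append(object_id)
    return leaks


def fake_fetch(token: str, invoice_id: str) -> int:
    # Stand-in for an HTTP GET; pretends one invoice lacks an ownership check.
    return 200 if invoice_id == "inv-2002" else 403


leaks = find_cross_tenant_leaks(fake_fetch, "tenant-a-user-token",
                                ["inv-2001", "inv-2002"])
print(leaks)  # ['inv-2002']
```

Checking only the status code is a deliberate choice here: it demonstrates unauthorized access without pulling another tenant’s data into the evidence folder.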

Show me the nerdy details

Ask for a short “validation rubric” in the SOW: for each severity level, how the vendor proves exploitability safely. Example: for auth issues, require a minimal PoC that demonstrates unauthorized access without dumping full datasets. For injection findings, require evidence of control (e.g., time delay or error-based confirmation) instead of destructive payloads. This pushes the work toward verification and away from noisy scanning. (And yes, “evidence that proves without dumping data” is the same muscle you build when you’re training yourself to capture a clean proof screenshot—minimal, accurate, defensible.)

- Demand manual validation for high/critical findings.

- Define authenticated testing expectations up front.

- Make “coverage” a written deliverable, not an assumption.

Apply in 60 seconds: Add a single line: “High/Critical findings must include safe proof of exploitability.”

Access + test accounts: the clause that decides timeline reality

Here’s the part everyone underestimates: access. Not because it’s technically hard, but because it lives at the intersection of security, operations, and “who owns this system.” Access is where timelines quietly die. If you want a pentest to finish on time, the SOW must describe who provides what, by when, through which channel.

What you provide (and when)

- Tester IP allowlisting, VPN instructions, and any required device posture rules.

- SSO test users, API keys, and RBAC roles (define what each role can do).

- Staging datasets (preferably synthetic) if prod data is sensitive.

- Approved secure channel for secrets (never plain email; specify the method you use).

What they provide

- Tester IP ranges, expected user agents, and tooling constraints (so your SOC doesn’t panic).

- A pre-flight checklist that confirms prerequisites before day 1 (if you’re a templates person, you’ll recognize the power of a single source of truth—same reason people love an initial access checklist in practice).

- A list of “red flags” that should trigger escalation (lockouts, latency spikes, unexpected data exposure).

Here’s what no one tells you: “Waiting for access” is the #1 hidden cost in pentests. Your SOW is where you make that cost visible.

Deliverables: define “done” in writing

Deliverables are where you protect yourself from two common failures: the report that’s too fluffy for engineers, and the report that’s too technical to help leadership make decisions. Your SOW should force a two-layer output: a risk story for humans, and reproduction evidence for builders.

Minimum deliverable package (startup-friendly, buyer-proof)

- Executive summary: top risks, business impact, and what to fix first.

- Technical report: per finding—affected asset, reproduction steps, evidence, impact, and remediation guidance.

- Coverage confirmation: what was tested, what depth was achieved, and what was excluded (with reasons).

Evidence requirements (so engineers can actually fix)

- Request/response samples (redacted), screenshots, and minimal PoCs within safety rules.

- Severity rationale: why it’s high/medium/low (don’t accept “because the scanner said so”).

- Clear remediation guidance (not just “patch it” or “validate input”).
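For the “request/response samples (redacted)” requirement, a small amount of automation goes a long way. The sketch below shows one possible approach: the `redact` helper and both patterns are assumptions for illustration, they cover only bearer tokens and email addresses, and a real redaction pass would need rules for whatever sensitive fields your application actually returns.

```python
import re

# Illustrative patterns only; extend them for your own data.
BEARER = re.compile(r"(authorization:\s*bearer\s+)\S+", re.IGNORECASE)
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(sample: str) -> str:
    """Mask bearer tokens and email addresses in a captured request/response."""
    sample = BEARER.sub(r"\1[REDACTED]", sample)
    return EMAIL.sub("[REDACTED_EMAIL]", sample)


raw = (
    "GET /invoices/42 HTTP/1.1\n"
    "Authorization: Bearer abc.def.ghi\n"
    "\n"
    '{"owner": "jane@example.com"}'
)
print(redact(raw))
```

The redacted sample still shows the endpoint, the header that mattered, and the shape of the response, which is what an engineer needs to reproduce the finding.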

Retest timing rule of thumb: two inputs matter, (1) how many findings you expect to fix and (2) how many engineers can work in parallel. If you fix 5–10 findings with 1–2 engineers, plan 1–2 weeks before a meaningful retest window. If you fix 10–25 findings with 3+ engineers, plan 3–5 business days for fixes plus 2–3 days for retest coordination.

Neutral next step: Put your expected retest window into the SOW so “verification” doesn’t become a forgotten afterthought.

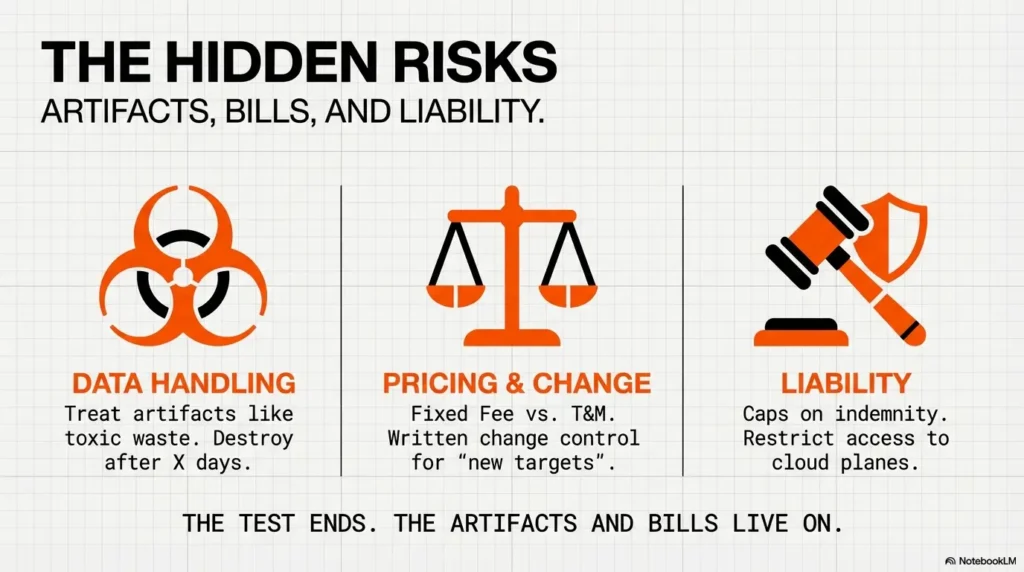

Data handling + confidentiality: your real risk is the artifacts

The test is temporary. The artifacts can live forever—unless you make them stop. Packet captures, screenshots of admin panels, exported CSVs, and proof-of-concept snippets are often more sensitive than the vulnerability itself. This clause should read like a careful checklist for evidence: where it lives, who can access it, how it’s encrypted, and when it’s destroyed.

Data classification + storage rules

- Define “security testing data” as confidential + restricted by default.

- State encryption requirements for storage and transfer.

- Set retention: e.g., “Artifacts destroyed within X days after final report,” unless you request otherwise.
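The retention sentence only works if someone can compute the date and check it. As a rough sketch, assuming retention is counted in calendar days from the final report date: the function names, artifact names, and the 30-day figure below are placeholders, not recommendations.

```python
from datetime import date, timedelta


def destruction_deadline(final_report_date: date, retention_days: int) -> date:
    """Last day on which the vendor may still hold testing artifacts."""
    return final_report_date + timedelta(days=retention_days)


def overdue_artifacts(artifacts: dict, final_report_date: date,
                      retention_days: int, today: date) -> list:
    """Names of artifacts not yet confirmed destroyed once the deadline has passed.

    `artifacts` maps an artifact name to True when destruction is confirmed.
    """
    if today <= destruction_deadline(final_report_date, retention_days):
        return []
    return sorted(name for name, destroyed in artifacts.items() if not destroyed)


# Placeholder inventory for illustration.
artifacts = {"pcap-archive": True, "admin-screenshots": False, "csv-exports": False}
print(destruction_deadline(date(2026, 2, 1), 30))  # 2026-03-03
```

Asking the vendor for a destruction confirmation per artifact type gives you something concrete to put in the `artifacts` map.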

Minimize sensitive data by design

- Prohibit retaining customer PII unless explicitly authorized and minimized.

- Prefer redaction: show just enough evidence to reproduce safely.

- Subcontractors: require written approval and the same controls.

Composite moment: the vendor uploads a draft report to a personal drive “just to share faster.” Nobody panics—until procurement asks where the artifacts live. Your SOW prevents this with one sentence: approved storage only.

Timeline, comms, and escalation: fewer surprises, more learning

Startups move fast, which means ambiguity becomes turbulence. A good comms clause turns the pentest into a short, controlled collaboration instead of a two-week blackout followed by a PDF drop. You want a cadence that preserves momentum and catches critical issues early—without turning the test into a daily status theater.

Cadence that keeps momentum

- Kickoff call (30–60 minutes): confirm scope, ROE, access, and test objectives.

- Mid-test preview: early signal on critical/high findings (even if details are still being validated).

- Closeout: walk-through of findings, prioritization, and retest plan.

Escalation triggers (write them down)

- Critical findings: notify within a defined window (e.g., within 24 hours of confirmation).

- Stop-testing conditions: service instability, unexpected data exposure, or customer-impact risk.

- “Who picks up the phone” during testing hours (primary + backup).
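Stop-testing conditions are easiest to honor when they are numbers rather than adjectives. The following is a minimal sketch of that idea; `StopThresholds`, `stop_reasons`, and every threshold value are hypothetical and would need to come from your own baseline metrics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StopThresholds:
    # Hypothetical numbers; the real values belong in your ROE.
    max_error_rate: float = 0.05
    max_p95_latency_ms: float = 1500.0
    max_account_lockouts: int = 3


def stop_reasons(error_rate, p95_latency_ms, account_lockouts,
                 unexpected_data_exposure, thresholds=StopThresholds()):
    """Return the stop-testing conditions currently met (empty list means continue)."""
    reasons = []
    if error_rate > thresholds.max_error_rate:
        reasons.append("service instability: error rate")
    if p95_latency_ms > thresholds.max_p95_latency_ms:
        reasons.append("service instability: latency")
    if account_lockouts > thresholds.max_account_lockouts:
        reasons.append("customer-impact risk: account lockouts")
    if unexpected_data_exposure:
        reasons.append("unexpected data exposure")
    return reasons
```

A non-empty result is the cue to pause testing and call the primary or backup contact, in that order.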

Choose staging if…

- Uptime risk is unacceptable.

- You can mirror prod config reliably.

- You need aggressive testing depth.

Choose production if…

- Staging diverges from prod meaningfully.

- You can constrain ROE tightly (windows + rate limits).

- You need proof against real configs and data paths.

Neutral next step: Put your choice (staging vs prod) into the SOW header so nobody “assumes prod” on day 3.

Price, payment, and change control: scope creep’s natural predator

Pricing is not just money; it’s behavior. The wrong pricing model quietly encourages the wrong work. Fixed-fee without change control invites silent disappointment (“we didn’t test that”). Time-and-materials without guardrails invites anxiety (“how many hours is this going to take?”). Your SOW should make scope changes explicit, fast, and boring.

Pricing model clarity

- Fixed fee: best when scope is tight and prerequisites are solid.

- Time-and-materials: best when scope is uncertain (early-stage systems) but needs caps and reporting.

- Per-asset pricing: useful when you can count assets cleanly (apps/APIs) and define “asset” precisely.

Change control that doesn’t kill speed

- Written change order triggers: “new targets,” “new environments,” “new test type (phishing),” “expanded retest.”

- Rate card or unit price for additions (so negotiating doesn’t stall engineering).

- Clarify what’s included: retest hours, extra targets, re-reporting effort.

Actual pricing varies wildly by scope, depth, and vendor. Instead of guessing numbers, use a structure that makes quotes comparable. (If you’re benchmarking, it helps to understand the difference between penetration testing vs vulnerability scanning so you don’t pay pentest prices for scan-only output.)

| Line item | Unit | Notes to require |
|---|---|---|
| Base pentest | Fixed fee or hours | Define assets + depth + methodology |
| Out-of-scope additions | Per asset or hourly | Change order required, no “silent add-ons” |
| Retest | Included hours or fixed fee | Define window + what counts as “retest” |
| Rush delivery | % uplift or fixed add-on | Tie to timeline, not vague urgency |

Neutral next step: Copy this table into your SOW as the quote format you want every vendor to use.
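If you collect quotes in that structure, comparing them can be close to mechanical. A small sketch of the idea follows; the `quote_gaps` helper, the dictionary layout, and the sample vendor data are all made up for illustration.

```python
# The four line items from the quote table above.
REQUIRED_LINE_ITEMS = ("Base pentest", "Out-of-scope additions", "Retest", "Rush delivery")


def quote_gaps(quote: dict) -> list:
    """Return required line items that a quote omits or leaves without a unit."""
    gaps = []
    for item in REQUIRED_LINE_ITEMS:
        entry = quote.get(item)
        if not entry or not entry.get("unit"):
            gaps.append(item)
    return gaps


# Hypothetical vendor response.
vendor_a = {
    "Base pentest": {"unit": "fixed fee", "notes": "2 web apps, 1 API, authenticated"},
    "Out-of-scope additions": {"unit": "hourly", "notes": "change order required"},
    "Retest": {"unit": "", "notes": "to be discussed"},
}
print(quote_gaps(vendor_a))  # ['Retest', 'Rush delivery']
```

Every gap is a question to send back before you compare prices, since a missing retest line usually resurfaces later as a separate negotiation.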

The 12 clauses checklist (copy-ready SOW spine)

Think of this as your SOW table of contents—your “spine.” You can expand each clause into a paragraph or two, but even in short form, these twelve items keep the engagement legible under stress. And yes, the legal stuff belongs here too. Avoiding it doesn’t remove it; it just moves it into the worst possible moment.

- Scope & in-scope assets (plus explicit exclusions)

- Rules of Engagement (authorization, windows, safety rails)

- Methodology/Standards (what “real testing” means)

- Access & prerequisites (accounts, VPN, allowlists, environments)

- Deliverables (report types, evidence, severity model)

- Timeline & milestones (kickoff → testing → draft → final)

- Communication & escalation (critical findings + stop conditions)

- Data handling & retention (minimization, storage, destruction dates)

- Confidentiality & subcontractors (who touches what)

- Pricing, payment, and change control (scope creep control)

- Liability / indemnity / limitations (caps, carve-outs, responsibility boundaries)

- Ownership & usage rights (report IP, sharing with customers/auditors)

Where startups get sneakily burned

- “Implicit” authorization that doesn’t survive procurement scrutiny.

- Undefined exclusions (“we assumed mobile was in scope”).

- Retest terms omitted—so verification becomes a separate negotiation.

What to send vendors with your quote request

- Asset inventory (domains, apps, APIs, cloud tenants) + environment (prod/staging).

- Test objectives (3–6 bullets that describe what “value” looks like).

- Test accounts/roles and how you’ll provision them.

- ROE: windows, rate limits, stop-testing triggers, escalation contacts.

- Required report format + retest expectations (your vendor should be able to show they can produce a clean, reproducible pentest report rather than a screenshot dump).

Neutral next step: Email this list to vendors and require responses in the same structure for easy comparison.

Common mistakes (don’t do this)

Most pentest disappointments are predictable. They happen when the buyer assumes the vendor will “do the right thing,” and the vendor assumes the buyer knows what they bought. The SOW is where you remove assumptions and replace them with verbs.

Mistake #1: Buying a “pentest” that’s really a vulnerability scan

- No manual validation.

- No authenticated testing.

- No proof of exploitability (or proof is reckless and unsafe).

Mistake #2: Letting “prod” happen by accident

- No environment clarity.

- No rate limits.

- No stop-testing clause.

Mistake #3: Forgetting retest and verification

- Fixes ship, but nobody confirms the risk is actually gone.

- Customer review asks for verification evidence you can’t produce quickly.

Mistake #4: Ignoring authorization language

If anything is questioned later—by a customer, an insurer, or an internal audit—you want a single page that says who authorized testing, what was tested, and when. Not for drama. For clarity. (This is also where good evidence hygiene matters; even small process decisions—like a consistent screenshot naming pattern—can be the difference between “audit-ready” and “we’ll get back to you.”)

When to seek legal/security help

Sometimes “move fast” is a liability. Pull in counsel and security leadership early if your testing scope touches regulated data, payment systems, identity infrastructure, or production control planes. The goal isn’t to slow down—it’s to avoid a slow-motion mess later.

Talk to counsel before signing if you have…

- Regulated data (HIPAA/PHI, GLBA, FERPA), payment scope, or government contracts.

- Unusually broad access requests or vague authorization language.

- Liability/indemnity terms that cap responsibility in ways that don’t match the risk.

Pull in security leadership if…

- You’re testing production, cloud control planes, or identity systems (SSO/IAM).

- You need a red team / adversary simulation (different engagement, different ROE).

FAQ

What’s the difference between a pentest SOW and an MSA?

The SOW defines what work happens: scope, ROE, deliverables, timeline, and pricing for this engagement. The MSA defines how the relationship works: legal boilerplate like indemnity, liability caps, dispute resolution, and general terms. Startups often negotiate the SOW faster—but the MSA is where many “gotchas” hide, so have counsel scan both.

What should a pentest SOW include for SOC 2 readiness?

Focus on audit-proof clarity: scoped assets, documented methodology, evidence handling, and a final report that includes severity rationale and remediation guidance. SOC 2 reviewers typically want proof you did meaningful testing and handled findings responsibly, not just that you bought a PDF.

How do we define scope for API pentesting without missing endpoints?

In the SOW, require an asset inventory plus “discovery rules” (how subdomains/endpoints found during testing are handled). Provide an API spec when possible, define which versions are in scope, and include test objectives that force authorization testing (tenant isolation, role boundaries, object-level access control). (On the discovery side, even one small toggle can change your coverage: if your vendor is scanning hosts, ask whether they’re using host discovery appropriately—e.g., when to use -Pn vs -sn in Nmap—so “we didn’t see it” doesn’t become the excuse.)

Should a pentest be done in production or staging?

Staging is safer when it mirrors prod reliably and you want aggressive depth. Production is justified when staging diverges, when the real risk lives in prod-only configuration, or when you need proof tied to real controls. If prod is allowed, your ROE must include tight windows, rate limits, and explicit stop-testing triggers.

What are “rules of engagement” in a penetration test contract?

ROE is the written “how to test safely” agreement: authorization, windows, allowable techniques, prohibited actions, rate limits, escalation contacts, and stop conditions. It’s the clause that turns testing from improvisation into controlled work.

Do pentesters need written authorization, and what should it say?

Yes. Written authorization should identify the vendor, the in-scope assets, the testing window, and the company signatory with authority to grant permission. It should also name escalation contacts and clarify that testing is limited to the defined scope.

How long should a pentest take for a SaaS startup?

It depends on asset count and depth (auth testing takes longer than surface scanning). Your SOW should separate phases: kickoff, testing window, draft report, remediation window, and retest. The more clearly you provide access and test accounts, the less time you lose to coordination. (Time is a real risk surface; if you want an ultra-practical way to plan human energy around security work, adapt an OSCP-style time management plan to your internal remediation window.)

What’s a retest, and should it be included in the SOW?

A retest verifies fixes. Include it whenever your pentest is tied to a customer requirement, compliance milestone, or board commitment. The SOW should define what counts as “retest” (verification of fixed findings vs expanded new scope) and specify the timing window.

Who owns the pentest report and can we share it with customers?

Ownership and usage rights are negotiable. Many startups need to share a report (or a redacted version) with enterprise customers or auditors. Put this in writing: who owns the report IP, whether you can share it externally, and what redactions are required. (If you’re doing this as part of an internal roadmap, it’s worth thinking about “what comes next” after a baseline pentest—especially if your team is building security depth. A guide on what certification to pursue after OSCP can help you map skills to business needs without randomly collecting badges.)

How do we handle PII or customer data during a pentest?

The SOW should require minimization: avoid collecting PII when possible, redact evidence, and treat artifacts as restricted data. Define storage/encryption requirements and enforce retention/deletion timelines. If regulated data is involved, involve counsel and security leadership early.

Conclusion + next step

Remember the hook: the “pentest” that arrives as a PDF and leaves you with nothing but anxiety? That outcome isn’t fate. It’s a contract that never forced clarity. When you lock scope, ROE, evidence handling, and retest terms, you get what you actually wanted all along: confidence you can defend—to customers, auditors, and your own future self at 2 a.m.

- Define scope edges so you don’t pay for ambiguity.

- Make ROE explicit so ops doesn’t suffer.

- Lock retest terms so you can prove closure.

Apply in 60 seconds: Copy the 12 clauses list into your SOW and fill in just three things: assets, ROE window, and deliverables.

Your 15-minute next step: paste the “12 clauses checklist” into a doc, then add (1) your asset inventory, (2) a one-page ROE (windows + stop conditions), and (3) your report expectations. Send it to two vendors and require them to quote using your structure. You’ll learn more from those two responses than from a week of anxious Googling. (If you’re training your own team to execute tighter, faster security work, it helps to have a repeatable note system—something like an OSCP-style pentesting note-taking system—so the institutional memory survives beyond one PDF.)

Last reviewed: 2026-01-30