The Calm Path to Vulnerability Disclosure

A bug report is either a quiet knock on your door or a flare shot over Twitter, and the difference is often one boring file in one predictable place. If you’re shipping a US SaaS product, a clear Vulnerability Disclosure Policy (VDP) and a standards-aligned security.txt stop security reports from getting lost in support tickets or swallowed by a form that hates URLs.

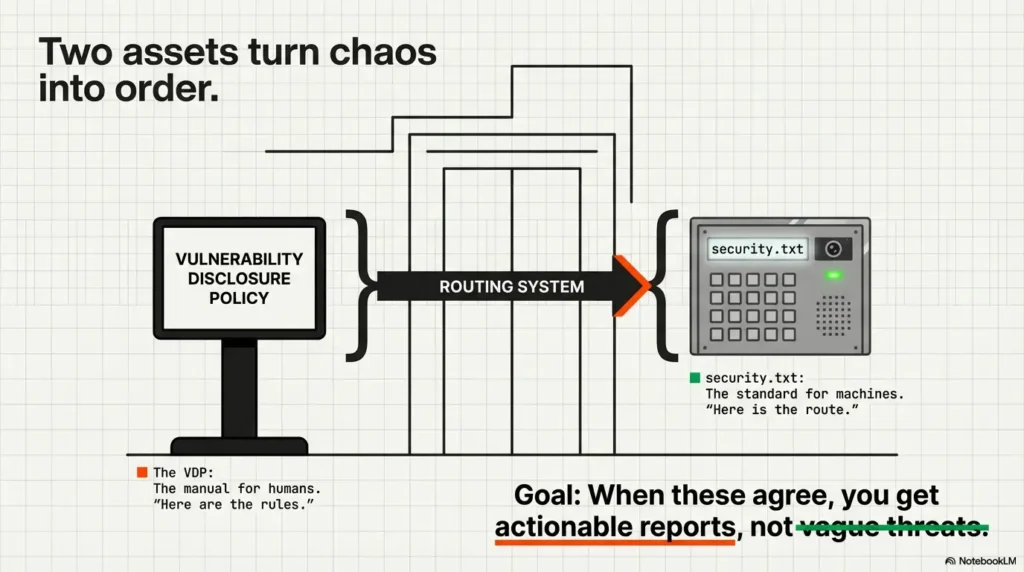

VDP: A public, human-readable page explaining how to report issues, what’s in scope, and what “good-faith” testing means.

security.txt: The machine-readable pointer at /.well-known/security.txt that routes researchers to your contact info.

This post gets you to “ship it today” clarity: public locations, plain-English safe harbor, and copy-ready templates you can publish this afternoon. We’re moving past the “contact support” era to make your reporting door obvious, measurable, and boringly reliable.

- No hero promises

- No vague scope

- No accidental legal trapdoors

Publish your VDP at one stable public URL and your security.txt at https://YOURDOMAIN/.well-known/security.txt (the standardized location defined by the IETF). Keep wording crisp: define scope, safe harbor, how to report, what you won’t accept, response timelines, and coordinated disclosure expectations. Use security.txt to point to your VDP URL and a monitored contact channel so reports land in the right inbox fast.


1) Ship it today: The minimum viable VDP + security.txt

Two assets, two jobs: VDP page (humans) vs security.txt (machines)

Think of your VDP as the lobby sign and your security.txt as the door buzzer. Humans read the VDP. Tools and scanners fetch security.txt automatically. When these two agree, you get fewer “hey I found something???” emails and more reports with steps, impact, and the right target.

I learned this the annoying way: years ago, we had a “Contact Support” link and no VDP. A researcher did the reasonable thing, filed a ticket, and the ticket got auto-closed as “billing-related.” That bug then went on a tiny tour of the internet. Nobody was malicious. Our process was.

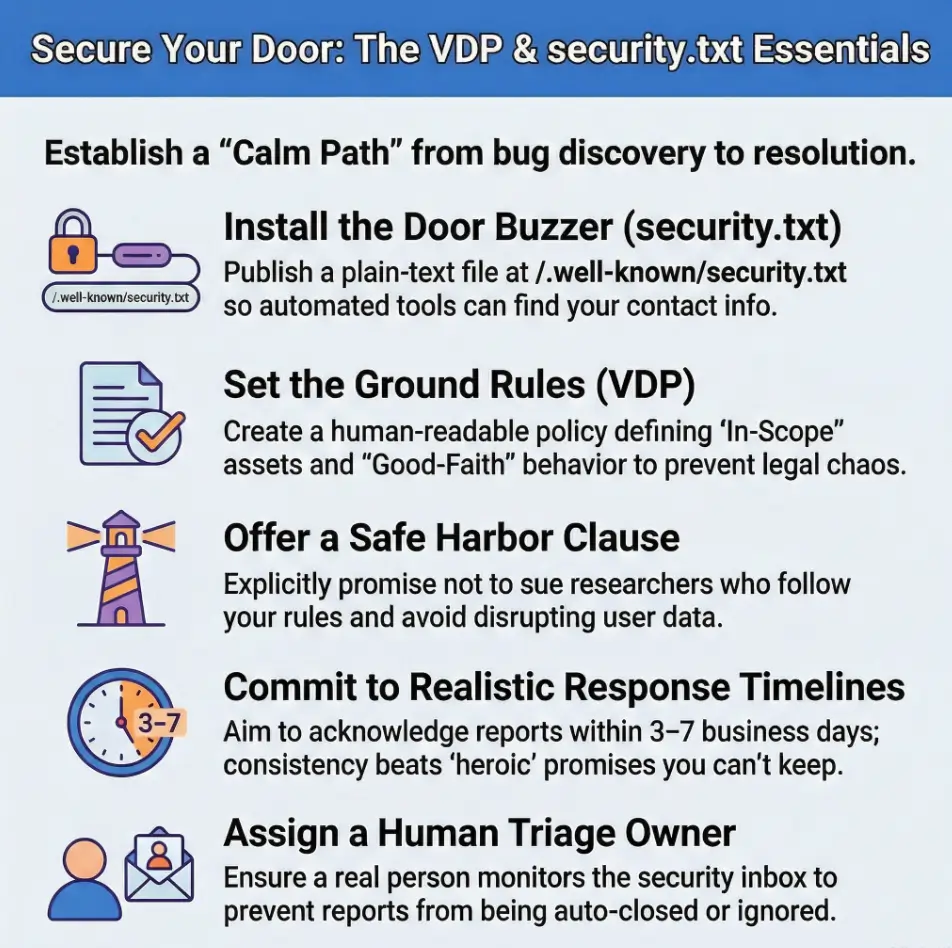

Your “MVP” checklist: contact, scope, safe harbor, timelines, disclosure rules

- Contact: a monitored channel (email, form, or platform), plus optional PGP key

- Scope: what’s in and what’s out (domains, apps, APIs, test envs)

- Safe harbor: what you authorize in good faith and what’s prohibited

- Timelines: realistic acknowledgement and update cadence

- Disclosure: coordinated disclosure expectations, without chest-thumping promises

- Make it easy to contact you fast

- Make scope measurable, not poetic

- Make safe harbor specific enough for counsel to sleep

Apply in 60 seconds: Create a shared inbox label called “SECURITY-REPORTS” and route security@ into it with alerts.

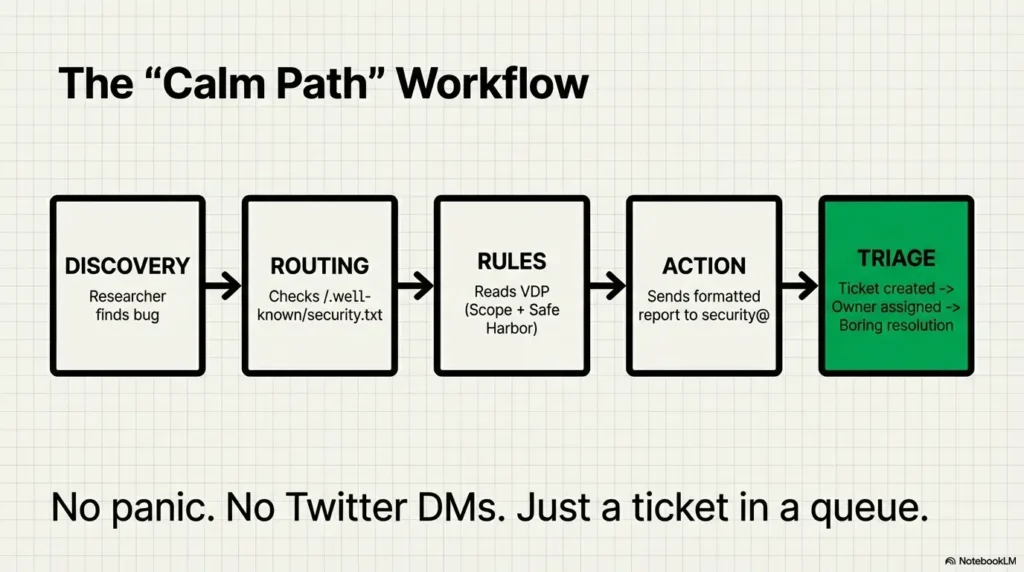

The hidden win: fewer Twitter DMs, more actionable reports

A public, standard location reduces chaos. Researchers (and their tooling) can quickly find: “Who do I contact, and what are the rules?” When that answer is obvious, people behave better. Not always, but more often. That’s worth a small afternoon of work.

2) Public location first: Where security.txt must live (and why)

The canonical path: /.well-known/security.txt (standard)

The IETF standard for security.txt (RFC 9116) defines a “well-known” location: https://YOURDOMAIN/.well-known/security.txt. That predictability is the whole point. Tools don’t want to guess your URL structure. They want to fetch one file and move on.

Root fallback: /security.txt as legacy compatibility, not the star

Some older guidance and validators still check /security.txt. If you want belt-and-suspenders compatibility, you can serve both. But treat the well-known location as canonical. (If you only do one, do the standardized one.)

If both exist: which one “wins” (spoiler: well-known)

RFC 9116 settles this: if a security.txt file exists in both locations, the one under /.well-known/ must be used, and scanners and modern tooling follow that rule. Your best move is to keep content identical in both places if you publish both, and avoid confusing redirects or mismatched contacts.
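To make the precedence rule concrete, here is a minimal sketch of how a checker might order the two locations and flag mismatched copies. The function names are hypothetical, and the `found` mapping stands in for whatever your own fetch step returned; nothing here performs network requests.

```python
from typing import Dict, List, Optional


def security_txt_candidates(domain: str) -> List[str]:
    """Return the URLs to try for a domain, canonical path first."""
    host = domain.strip().lower().rstrip("/")
    return [
        f"https://{host}/.well-known/security.txt",  # canonical location (RFC 9116)
        f"https://{host}/security.txt",              # legacy fallback
    ]


def choose_authoritative(domain: str, found: Dict[str, str]) -> Optional[str]:
    """Pick the URL to trust from {url: body} pairs that were actually served.

    The well-known copy wins whenever it exists.
    """
    for url in security_txt_candidates(domain):
        if url in found:
            return url
    return None


def copies_match(found: Dict[str, str]) -> bool:
    """True when every served copy has identical content (ignoring edge whitespace)."""
    bodies = {body.strip() for body in found.values()}
    return len(bodies) <= 1
```

If `copies_match` returns False, treat that as a publishing bug: fix the legacy copy or replace it with a redirect to the well-known path.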

Small operator note: don’t let marketing own these URLs. I once watched a VDP link get “rebranded” into a campaign landing page with a cookie banner that blocked the content. The researcher wasn’t impressed. Neither was the incident postmortem.

3) Wording that works: A VDP structure readers won’t misinterpret

Open with intent: “We welcome good-faith reports” (and define “good-faith”)

Start with what you want: reports that help you fix issues. Then define “good faith” in operational terms. If you don’t define it, people will. And they will screenshot your vagueness like it owes them money.

Good-faith usually means: you avoid privacy violations, you minimize disruption, you don’t extort, you report promptly, and you follow scope rules.

Define scope like a map: domains, apps, APIs, repos, and exclusions

Scope is where most “legal chaos” is born. Write it like a deployment inventory. If it’s in scope, list it. If it’s out of scope, list it. If you don’t know, say how a researcher can ask.

- In scope: app.yourdomain.com, api.yourdomain.com, iOS/Android app, public docs site

- Explicitly out of scope: third-party hosted marketing site builder pages, employee personal devices, physical offices

- Conditional scope: staging environments (only if explicitly listed, and only if non-production data)
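One way to keep scope measurable is to hold it as data that both the VDP page and your triage tooling read. The sketch below is illustrative only: the host sets are placeholders (the staging and www hostnames are assumptions, not from any real inventory), and anything unlisted falls through to "ask-first" rather than being silently treated as authorized.

```python
from urllib.parse import urlsplit

# Placeholder inventory; replace with your real asset list.
IN_SCOPE = {"app.yourdomain.com", "api.yourdomain.com"}
CONDITIONAL = {"staging.yourdomain.com"}   # hypothetical: only with non-production data
OUT_OF_SCOPE = {"www.yourdomain.com"}      # hypothetical: third-party hosted marketing site


def classify_target(url: str) -> str:
    """Classify a reported URL by exact hostname match against the scope lists."""
    host = (urlsplit(url).hostname or "").lower()
    if host in IN_SCOPE:
        return "in-scope"
    if host in CONDITIONAL:
        return "conditional"
    if host in OUT_OF_SCOPE:
        return "out-of-scope"
    # Unknown hosts (partner integrations, look-alike subdomains) need a human answer.
    return "ask-first"
```

Exact matching is a deliberate choice here: a wildcard such as "anything under yourdomain.com" is how partner subdomains end up accidentally authorized.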

Promise what you can keep: acknowledgements, updates, and resolution windows

Resist the temptation to write heroic SLAs you can’t maintain. A simple, honest cadence beats a fast lie. Example: “We aim to acknowledge within X business days and provide status updates every Y days for in-scope reports.” That’s realistic and sets expectations without handcuffing you. If you want a clean way to align “policy promises” with “operational reality,” borrow the structure from a vulnerability remediation SLA and keep your timelines measurable.

- Define scope with assets, not vibes

- Define “good faith” with behaviors

- Define timelines you can actually meet

Apply in 60 seconds: Replace any “we will” promises with “we aim to,” unless you truly mean “will.”

4) Safe harbor clarity: The paragraph that prevents panic (and lawyers)

What safe harbor usually covers: authorized testing boundaries + good-faith expectations

Safe harbor is your “we won’t punish the helpful people” clause, within boundaries. In the US, many organizations adapt language aligned with government guidance on coordinated vulnerability disclosure and vulnerability disclosure policies, including CISA’s VDP template. The goal: encourage responsible reports while clearly prohibiting harm. If your team is still formalizing how security work fits into the business, pairing this with a lightweight security testing strategy keeps policy, testing, and triage from drifting apart.

What you still prohibit: extortion, social engineering, privacy violations, disruption

This is where you get specific. You can welcome research while still banning the stuff that wrecks lives or uptime:

- Extortion or ransom demands

- Social engineering of employees or contractors

- Accessing, modifying, or exfiltrating user data beyond what’s necessary to prove impact

- Denial-of-service testing or heavy automated scanning that degrades service

“Here’s what no one tells you…” safe harbor fails when scope is fuzzy (make it measurable)

If scope is vague, safe harbor becomes a misunderstanding factory. A researcher thinks they’re “helping” on anything under your brand. Your counsel reads it as “we authorized weird stuff.” Make the boundary measurable: domains, IP ranges (if you must), apps, and exact behaviors that are OK versus not OK.

Short Story: The Bug Report That Almost Turned Into a Lawsuit

We once had a partner integration that lived on a separate subdomain. It looked like ours. It wasn’t ours. A researcher found an auth issue and, trying to be helpful, tested it across multiple accounts. Their email started polite, then got anxious: “Your policy says you authorize good-faith research.” Our legal team saw “authorization,” our ops team saw “unknown access,” and everyone’s blood pressure got a pay raise.

The researcher wasn’t trying to cause harm. They were trying to prove impact. But our scope section didn’t list the integration subdomain, and our safe harbor didn’t state limits on data access. We resolved it, but the emotional damage was real. The fix wasn’t a better lawyer. The fix was a better sentence: list what you own, list what you don’t, and clearly state boundaries on data access and disruption.

5) Template pack: Copy-ready VDP clauses (modern, plain-English)

Below are copy-ready clauses you can paste into a VDP page. Adjust specifics (domains, timelines, contact) and have counsel review if your org is regulated or you have strict contractual obligations. The goal is clarity, not intimidation. If you’re also reviewing your legal posture around third-party testing, it’s worth skimming penetration test limitation of liability language patterns so your VDP doesn’t accidentally promise what your contracts don’t support.

Intake clause: what to include in a report (steps, impact, PoC, affected assets)

Reporting a vulnerability

Please include enough detail for us to reproduce and verify the issue:

- Product/feature and affected URL, endpoint, or app version

- Steps to reproduce (numbered steps preferred)

- Expected vs actual behavior

- Impact description (what could an attacker achieve?)

- Any proof-of-concept code or screenshots (redact sensitive data)

If you’re unsure whether something is in scope, contact us first and we’ll guide you.

Coordinated disclosure clause: timelines, credit, and publication expectations (without overpromising)

Coordinated disclosure

We support coordinated vulnerability disclosure. If your report is in scope and submitted in good faith, we aim to acknowledge receipt within [X business days] and provide status updates at least every [Y days] until resolution or closure. We may request additional information to validate impact.

If you would like public credit, tell us your preferred name/handle. We may list acknowledgements at our discretion after the issue is resolved.

Non-commitment clause: “we may not respond to out-of-scope” without sounding hostile

Out of scope

To keep response times fair for everyone, we may not respond to reports that are clearly out of scope, lack reproducible steps, or involve prohibited testing (e.g., denial-of-service, social engineering, or unnecessary access to user data).

Operator note: write like a calm adult. Threatening language doesn’t “scare off bad actors.” It mostly scares off the helpful people and leaves you with the worst kind of silence.

6) security.txt fields: What to include (and what to avoid)

Core fields you’ll actually use: Contact, Policy, Acknowledgments, Encryption, Expires

The RFC defines how security.txt should be structured and which fields can appear. Most SaaS teams only need a handful:

- Contact: email (mailto:security@yourdomain.com) or a secure form URL

- Policy: your VDP URL (canonical)

- Acknowledgments: optional URL for credits

- Encryption: optional URL to a PGP key

- Expires: required by RFC 9116 (as is Contact); a date and time after which the file should be treated as stale, so you’re forced to review and keep it fresh

Link strategy: point to one canonical VDP URL, keep it stable

Choose one simple URL, like /vdp or /security, and keep it stable even if your site redesigns. If you must move it, use a clean redirect that doesn’t break tooling (no login walls, no infinite redirects, no “choose cookies to view policy”).

Avoid “broken windows”: expired dates, dead inboxes, and vague contact routes

Nothing says “we don’t actually want reports” like an expired Expires date or a mailbox that bounces. Treat this like an on-call number: it should be boringly reliable. If you’re building the rest of your baseline program, pairing this with a simple security metrics for founders dashboard helps you prove the VDP isn’t just “policy theater.”

Show me the nerdy details

Formatting basics: security.txt is a plain text file using a simple “Field: value” format, one field per line. Many fields accept URLs (including mailto:). Tools often fetch /.well-known/security.txt automatically, parse fields, and follow the Policy link for the human-readable VDP. Keep lines clean, avoid HTML, and validate that your server returns it over HTTPS with a normal 200 OK response and a text/plain content type.
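As a rough illustration of the “Field: value” format, the sketch below parses a file body into fields and reports which of the two fields RFC 9116 requires (Contact and Expires) are missing. It is a simplified reader, not a full validator: it ignores PGP signature wrappers and does not check field values. The function names are hypothetical.

```python
from typing import Dict, List


def parse_security_txt(text: str) -> Dict[str, List[str]]:
    """Parse 'Field: value' lines into {lowercased field name: [values]}."""
    fields: Dict[str, List[str]] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        # Split on the first colon only, so 'mailto:' and 'https:' values survive.
        name, sep, value = line.partition(":")
        if not sep:
            continue  # not a field line
        fields.setdefault(name.strip().lower(), []).append(value.strip())
    return fields


def missing_required(fields: Dict[str, List[str]]) -> List[str]:
    """Return the required field names that are absent."""
    return [name for name in ("contact", "expires") if name not in fields]
```

Field names are lowercased on the way in because the format treats them case-insensitively; values are kept as written.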

Example security.txt (copy-ready)

Contact: mailto:security@YOURDOMAIN.com
Policy: https://YOURDOMAIN.com/vdp
Acknowledgments: https://YOURDOMAIN.com/security/acknowledgments
Encryption: https://YOURDOMAIN.com/.well-known/pgp-key.txt
Expires: 2026-08-31T23:59:59Z
Preferred-Languages: en

Neutral action: After publishing, fetch the file from a clean browser session and confirm the content matches exactly.

7) Common mistakes: The fastest ways to create security debt in public

Mistake: publishing a VDP that invites “anything anywhere anytime” (unbounded scope)

If your scope reads like “all our stuff forever,” you just authorized chaos. Your researchers will interpret it broadly. Your legal team will interpret it narrowly. Your ops team will interpret it at 2:07 a.m. during an incident. Nobody wins. A practical hedge is to align the VDP scope language with how you already scope security work in security testing strategy docs, so policy and practice don’t quietly contradict each other.

Mistake: using legal threats instead of safe harbor language (researchers bounce)

A VDP that starts with “Unauthorized access is prohibited and will be prosecuted” may be technically true, but it’s strategically self-sabotage. Safe harbor language exists so good-faith researchers know you won’t treat them like criminals for being helpful within clear boundaries.

Mistake: redirects that break tooling or land on generic HTML (validators fail)

Keep /.well-known/security.txt a plain text file. Avoid “smart” routing that transforms it into an HTML page or a marketing redirect. Tools expect a predictable, parseable response. Your future self also expects that.

Personal scar: I once chased a “missing security.txt” alert that turned out to be a CDN rule rewriting unknown paths to /index.html. So the file existed… as a homepage. It was the most technically correct wrong thing I’ve ever seen.
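A check like the following would have caught that CDN rewrite. It is a sketch that inspects a response you have already fetched (status code, Content-Type header, body) and lists anything that suggests the “file” is really an HTML page. The function name and the specific heuristics are assumptions for illustration.

```python
from typing import List


def security_txt_problems(status: int, content_type: str, body: str) -> List[str]:
    """Return human-readable problems; an empty list means the response looks sane."""
    problems: List[str] = []
    if status != 200:
        problems.append(f"expected 200 OK, got {status}")
    # Ignore parameters such as '; charset=utf-8' when comparing the media type.
    media_type = content_type.split(";")[0].strip().lower()
    if media_type != "text/plain":
        problems.append(f"expected text/plain, got {media_type or 'no content type'}")
    head = body.lstrip()[:200].lower()
    if head.startswith("<!doctype") or head.startswith("<html"):
        problems.append("body looks like an HTML page (rewrite or redirect?)")
    if "contact:" not in body.lower():
        problems.append("no Contact field found")
    return problems
```

Run it against both the well-known path and any legacy path after every CDN or routing change, not only at launch.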

8) Don’t do this: Anti-pattern wording that triggers chaos

“We will respond in 24 hours” (unless you truly will)

If you can’t guarantee it on a holiday weekend with two people sick and a board meeting on Monday, don’t promise it. Write a target you can meet. Consistency beats speed theater.

“We pay bounties” (if you don’t) and “No public disclosure ever” (unrealistic)

Don’t imply money unless you have a real program. If you want a VDP without payouts, say so plainly. Also, “No disclosure ever” reads like you’re trying to bury problems. Coordinated disclosure is about timing and safety, not permanent silence.

“Let’s be honest…” vague promises become screenshots in someone’s thread

Anything vague will be interpreted in the least charitable way at the worst possible time. Replace “promptly,” “reasonable,” and “quickly” with a measured cadence, and keep your scope and prohibitions crisp. If you want a simple way to operationalize “measured cadence,” mirror the same cadence logic you’d use when you read a penetration test report: timelines, owners, and concrete next actions beat vibes.

- Promise targets, not heroics

- Ban harmful testing explicitly

- Make ambiguity rare and boring

Apply in 60 seconds: Search your draft for “reasonable,” “prompt,” and “as soon as” and replace each with a concrete cadence or remove it.
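If your draft lives in a text file, that search is easy to script. The sketch below flags the vague terms named above with their line numbers; the word list is a starting assumption and will need tuning for your own drafts.

```python
import re
from typing import List, Tuple

# Starting list of vague terms; extend it to match your own bad habits.
VAGUE = re.compile(
    r"\b(reasonabl[ey]|prompt(?:ly)?|quickly|as soon as)\b",
    re.IGNORECASE,
)


def find_vague_terms(draft: str) -> List[Tuple[int, str]]:
    """Return (line number, matched term) pairs, in reading order."""
    hits: List[Tuple[int, str]] = []
    for lineno, line in enumerate(draft.splitlines(), start=1):
        for match in VAGUE.finditer(line):
            hits.append((lineno, match.group(0).lower()))
    return hits
```

A flagged word is a prompt to decide, not an automatic error: either replace it with a number and a unit, or delete the sentence.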

9) Who this is for / not for: Fit check before you publish

For: SaaS, startups, agencies, public websites, APIs, and app teams

If you operate anything on the public internet, you benefit. Even if your “product” is mostly behind login, your perimeter still has marketing pages, docs, auth flows, APIs, and integration endpoints. Bugs don’t care about your org chart. If your surface area includes auth and identity flows, consider pairing the VDP with your core identity narrative, especially SAML SSO for SaaS and how you handle enterprise trust signals.

Not for: organizations needing export-controlled reporting workflows or formal bug bounty ops (yet)

If you handle export-controlled systems, certain government contracts, or strict regulated workflows, you may need a more formal intake mechanism and legal review. You can still publish a VDP, but you’ll want guardrails and potentially a coordinated intake platform.

When you should upgrade: adding a platform, triage SLAs, or third-party coordination

If you start receiving higher volume or higher severity reports, or your enterprise customers ask “what’s your vulnerability disclosure process?”, that’s a sign to upgrade: formal triage, severity taxonomy, and a tighter on-call rotation. For many teams, the “upgrade moment” is also when you formalize incident posture, for example by adding an incident response retainer so you’re not improvising under pressure.

Eligibility checklist

- Do you run a public web property or API? Yes/No → If yes, publish VDP + security.txt.

- Do you have a monitored security contact? Yes/No → If no, set up security@ first.

- Can you acknowledge reports within 3–7 business days? Yes/No → If no, set expectations explicitly.

- Do you know your in-scope assets? Yes/No → If no, start with your top 3 domains/apps.

- Do you prohibit disruption and privacy violations? Yes/No → If no, add it now.

Neutral action: Circle the first “No” and fix only that this week.

10) Rollout mechanics: Where to link your VDP so humans actually find it

Footer link, /security or /vdp URL, and a short “Report a vulnerability” CTA

Put it where people look when they’re hunting for policy pages: the footer. Make the link text explicit. “Security” is fine; “Report a vulnerability” is even better. Keep it outside login walls.

Support + Trust Center alignment: don’t bury it behind login

If you have a Trust Center, link the VDP there too. If you have a support portal, add a small “Security issue?” callout that routes to the VDP and the security inbox. The fastest way to lose a good report is to force a researcher to create an account, confirm an email, and then fill out a form that rejects URLs.

Internal routing: ticketing + on-call + severity tags (make triage boring, fast)

Make triage boring. Boring is good. Boring means it works. Route inbound reports to a ticketing queue with severity tags and owners. Decide who reads it first, who can pull engineers in, and who writes back to the reporter. If your team is small, the fastest “boring win” is to standardize the queue and then invest in baseline enablement, like 1 hour a month security training so engineers know what “good” looks like when a report lands.

Decision card: when to pick which setup

If you’re small (0–2 security people): Use security@ + ticketing queue + simple VDP. Time: set up in 1–3 hours. Trade-off: more manual triage.

If you’re growing (high volume or enterprise pressure): Add an intake platform or structured form + triage workflow. Time: days to weeks. Trade-off: better routing, less spam, clearer audit trail.

Neutral action: Pick the smallest option that you can keep consistent for 90 days.

11) FAQ

What is a Vulnerability Disclosure Policy (VDP)?

A VDP is a public page that explains how people should report security vulnerabilities to you, what’s in scope, what behaviors are authorized in good faith, what’s prohibited, and what timeline they can expect. It’s a process contract, written for humans.

Where should security.txt be located on a website?

The standardized location is /.well-known/security.txt on your domain. Some teams also serve /security.txt for legacy compatibility, but the well-known path is the modern, standards-aligned target.

What should a security.txt file contain?

RFC 9116 requires two fields: Contact (a monitored route) and Expires (a date and time after which the file should be treated as stale). Add a Policy URL pointing to your VDP, plus optional links for encryption keys and acknowledgements.

Do I need safe harbor language in my VDP?

If you want good-faith researchers to report privately instead of going public, safe harbor language helps. It clarifies what you authorize within scope and what you won’t pursue legally when the researcher follows the rules. It also clarifies prohibited behaviors (extortion, disruption, privacy violations).

What should be in scope vs out of scope for VDP testing?

In scope should be your owned and operated assets: specific domains, apps, APIs, and repositories. Out of scope commonly includes denial-of-service testing, social engineering, physical security, third-party systems you don’t control, and any access to user data beyond what’s necessary to prove impact.

Can researchers publicly disclose before a fix is available?

Set expectations for coordinated disclosure: ask for a reasonable window while you investigate and fix, and commit to status updates. Avoid demanding “never disclose” forever. You want alignment on timing and safety, not a permanence clause that nobody respects.

Should we offer bug bounties or just a VDP?

A VDP alone is enough to start. Bug bounties are a separate commitment (budget, triage capacity, rules). If you can’t reliably triage, paying for more inbound reports can overwhelm you. Start with VDP + routing; add bounty later if it matches your maturity and goals.

How fast should we acknowledge a vulnerability report?

Choose a target you can meet consistently. Many teams pick a few business days for acknowledgement and a weekly update cadence for in-scope reports. The best timeline is the one you won’t quietly abandon in month two.

How do we handle reports about third-party dependencies?

Say how you handle coordinated disclosure for third-party components: you’ll validate the report, coordinate with the vendor or maintainer when appropriate, and communicate status updates. If you’re part of a larger ecosystem, referencing coordinated vulnerability disclosure practices helps set expectations.

How do we avoid getting spam and low-quality submissions?

Use tight scope, clear “what to include” requirements, and a single canonical contact route. Consider a structured form or platform if volume is high. Most importantly, publish what you will not accept: disruption, social engineering, and vague “something seems off” reports with no reproduction steps.

12) Next step: One concrete action you can do in 30 minutes

Publish /vdp (or /security) and add /.well-known/security.txt pointing to it

If you do nothing else today, do this: publish one stable URL for your VDP and make security.txt point to it. The standard exists so researchers don’t have to hunt. Help them help you.

Add a monitored alias (e.g., security@) + triage owner

Name an owner. Not a team. A human. (Teams are wonderful, but teams can also be invisible at 6:40 p.m. on Friday.) Make sure at least two people can access the inbox and ticket queue.

Set Expires so you’re forced to review, not forget

Expires is a small act of humility: “We will revisit this.” Put a calendar reminder when the date approaches. The best security policies are the ones that stay alive.
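The calendar reminder can also be a scheduled check. This sketch computes how many whole days remain before the Expires timestamp and whether a review is due; the 30-day warning window and the function names are assumptions, not part of the standard.

```python
from datetime import datetime, timezone


def days_until_expiry(expires: str, now: datetime) -> int:
    """Whole days from 'now' until the Expires timestamp (negative once expired)."""
    # Older Python versions reject a trailing 'Z', so normalise it to an offset.
    parsed = datetime.fromisoformat(expires.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        # Assumption: treat timestamps without an offset as UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return (parsed - now).days


def needs_review(expires: str, now: datetime, warn_days: int = 30) -> bool:
    """True when the file has expired or will expire within the warning window."""
    return days_until_expiry(expires, now) < warn_days
```

Pass `datetime.now(timezone.utc)` as `now` in a real job; taking it as a parameter keeps the check easy to test.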

Mini calculator

Inputs: (1) expected reports/week, (2) average triage minutes/report, (3) available triage hours/week

Output: If (reports × minutes) > (hours × 60), you must tighten scope or add triage capacity before promising faster timelines.
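The same comparison as a few lines of code, in case you want it in a planning notebook. The function name and the example numbers are illustrative only.

```python
from typing import Dict, Union


def triage_load(
    reports_per_week: float,
    minutes_per_report: float,
    hours_per_week: float,
) -> Dict[str, Union[float, bool]]:
    """Compare weekly triage demand with weekly triage capacity, both in minutes."""
    demand = reports_per_week * minutes_per_report
    capacity = hours_per_week * 60
    return {
        "demand_minutes": demand,
        "capacity_minutes": capacity,
        "overloaded": demand > capacity,  # True: tighten scope or add capacity first
    }
```

For example, 10 reports a week at 45 minutes each is 450 minutes of demand; with 6 triage hours (360 minutes) available, the result is overloaded, so faster timelines would be a promise you can’t keep.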

Neutral action: Pick conservative inputs and set timelines based on the result, not optimism.

Infographic: The “Calm Path” from Bug to Fix

- Looks for /.well-known/security.txt

- Contact + Policy link (VDP)

- Scope + safe harbor + prohibitions

- Ticket + owner + updates

- Resolution, then credit (optional)

Goal: fewer misunderstandings, faster verification, and a paper trail you’ll be glad you have later.


Conclusion

Remember the question from the top: will the next researcher report calmly or post angrily? You can’t control people, but you can control the door you build. A VDP with clear scope and safe harbor, plus a standards-aligned /.well-known/security.txt, turns “random inbound chaos” into a boring, fixable queue. And boring, in security, is a compliment.

Your next step, in the next 30 minutes: publish a simple /vdp page, create /.well-known/security.txt that points to it, and make sure a real human owns the inbox. That’s how you trade legal chaos for operational calm. If you want the rest of your foundations to match this calm, lock down the basics that tend to leak into incidents, like startup secrets management and cloud misconfigurations, so the reports you get are rarer, cleaner, and easier to close.

Last reviewed: 2026-02