The Dangerous Reality of Penetration Test Reports

The most dangerous line in a penetration test report is not “Critical.” It’s “Medium” paired with a screenshot that quietly proves an attacker path.

If you’re a founder, you didn’t pay for a PDF so you could debate CVSS scores at midnight. You paid to surface the handful of findings that can freeze procurement, trigger breach clauses, or turn “minor” into multi-tenant chaos. The problem is that the report reads like tool output, while buyers read it like a trust audit.

Keep guessing, and you lose time twice: once in engineering churn, and again in enterprise security review purgatory.

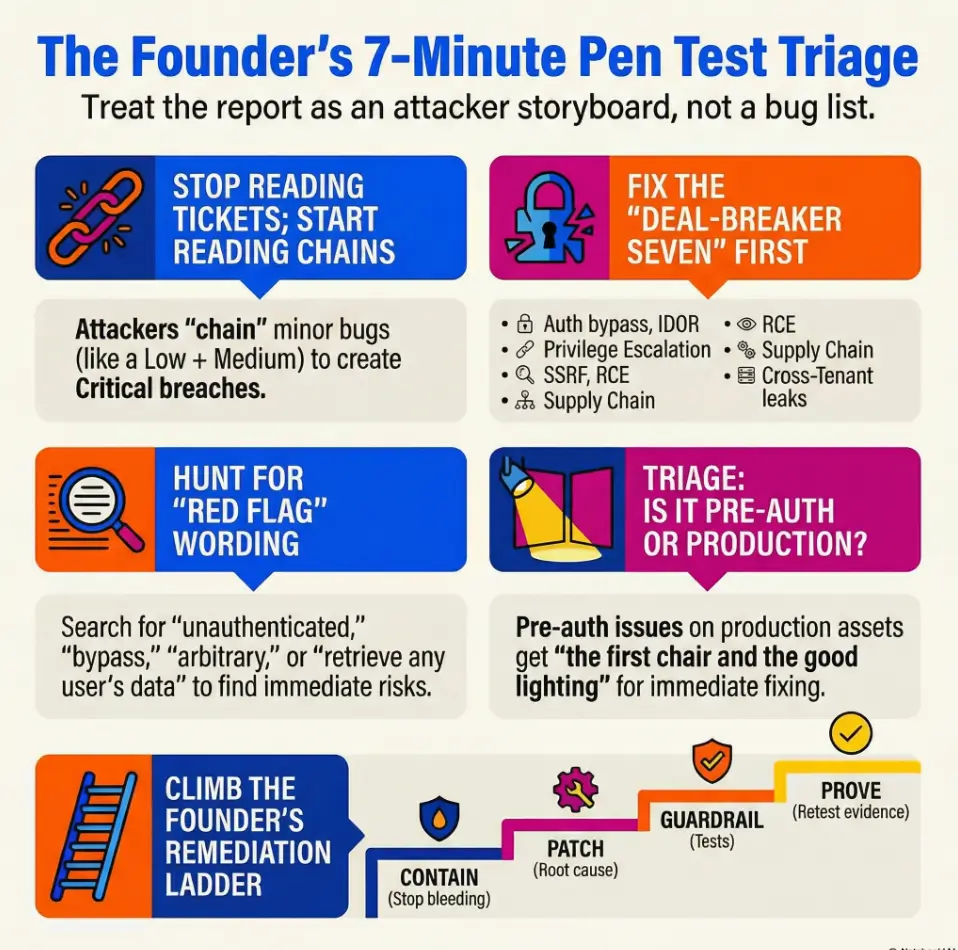

This guide shows you how to read a pen test report for impact: spot chainable issues (IDOR, broken access control, SSRF-to-metadata), triage by exploitability and exposure, and convert fixes into a dated remediation plan with retest-proof evidence.

Read this like an attacker.

Track it like a CFO.

Explain it like a vendor buyers trust.

- ✓ Know what to triage first.

- ✓ Know what to ask your testing firm.

- ✓ Know what to document so deals move again.

A pen test report is a point-in-time, scoped narrative of how a tester could compromise a target. Its real value isn’t the severity label. It’s the reproducible steps, affected assets, and the “what happens next” chain.


Read for impact: What a pen test is actually telling you

The report is a story: attacker path, not a checklist

A good pen test report is basically a crime novel. Not the cozy kind with teacups. The kind where the villain tries a door, finds it unlocked, walks to the server room, and leaves with your data wearing your badge.

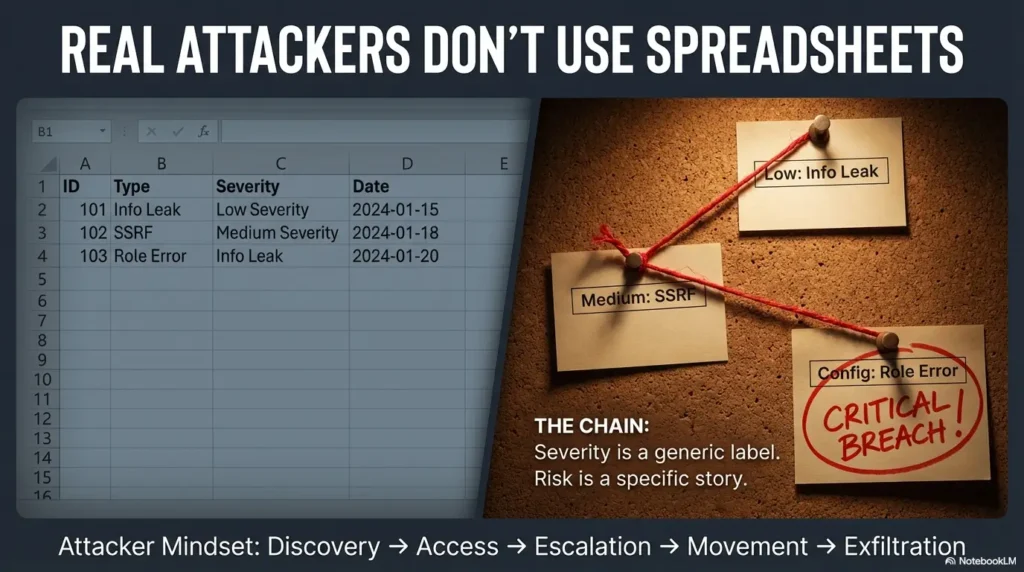

The fastest founder mistake is treating findings like independent tickets. Real attackers chain. A “low” that reveals internal hostnames, plus a “medium” SSRF, plus a “misconfigured” cloud role can add up to a full-on breach. That’s why a security testing strategy should define what “good signal” looks like (narrative, validation, scope gaps) instead of deferring to scanner output, and why NIST’s security testing guidance emphasizes methods, scope, and validation rather than a severity label alone. It’s also why mature buyers ask for your remediation plan, not your “score.”

Personal anecdote: I once saw a team celebrate “no criticals” like it was a champagne moment. Three weeks later, the buyer’s security team chained two mediums into an account takeover demo in a follow-up call. The room got quiet in a way you can feel in your teeth.

Severity is not risk: why “Medium” can still ruin your quarter

Severity is often a blend of exploitability + impact in a generic world. Risk is exploitability + impact in your world: your customer base, your data types, your architecture, your compensating controls, and your actual production exposure.

A “Medium” on a production auth boundary for a multi-tenant app can be an enterprise deal-killer, which is why teams that ship SaaS seriously invest in API authentication and authorization for SaaS as a product primitive, not a patch. A “High” in a dead staging environment might be noise. Your job is to read the report like a CFO reads contracts: what’s the worst plausible downside, and how quickly can it happen?

- Find the chain, not just the label.

- Map findings to production exposure and customer impact.

- Convert “severity” into a dated plan with proof.

Apply in 60 seconds: Circle any finding that touches auth, tenant boundaries, secrets, or production. Those are your “read twice” items.

Here’s what no one tells you… the finding is less important than the chain

Ask this on repeat: “If I were the attacker, what would I do next?” The report often tells you, but politely, in a paragraph most people skim.

If your vendor didn’t include an attack narrative, you can still build one: order the findings by what enables the next step. Discovery → access → escalation → movement → exfiltration. That’s the spine. If you want to get ahead of this before the next engagement, a lightweight pass of MVP threat modeling for startups makes “chain thinking” feel less like panic and more like planning.

- Fix now if: pre-auth, production, sensitive data, or chainable with one more step.

- Plan if: requires unusual access, non-production only, or mitigated by strong compensating controls (with evidence).

- Escalate if: buyer review is active or renewal is within 60 days.
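If you triage more than a handful of findings, it can help to write these three rules down as code so everyone applies them the same way. Below is a minimal Python sketch, assuming each finding has already been tagged by hand; the `Finding` fields and the `triage` function are hypothetical names, not part of any tool. In this sketch any single “fix now” condition wins, a finding only drops to “plan” when none apply, and judging compensating controls is left to a human.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Finding:
    title: str
    pre_auth: bool
    production: bool
    sensitive_data: bool
    chainable_in_one_step: bool


def triage(finding: Finding, buyer_review_active: bool = False,
           days_to_renewal: Optional[int] = None) -> List[str]:
    """Apply the guide's Fix now / Plan / Escalate rules to one finding."""
    if (finding.pre_auth or finding.production or finding.sensitive_data
            or finding.chainable_in_one_step):
        labels = ["fix now"]
    else:
        # Nothing urgent applies: plan it, and record any compensating
        # controls (with evidence) in the tracker by hand.
        labels = ["plan"]
    # Escalation reflects deal timing, so it is added on top of either label.
    if buyer_review_active or (days_to_renewal is not None and days_to_renewal <= 60):
        labels.append("escalate")
    return labels
```

Escalation stacks on top rather than replacing the label because deal timing changes who needs to hear about a finding, not what the bug is.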

Neutral next action: Put one owner and one date on your top 3 “Fix now” items before the day ends.

Ignore this and you’re in trouble: The 7 finding types founders must treat as urgent

If you only have bandwidth for one pass, prioritize these seven. They’re the greatest hits of “how did this happen?” postmortems and the most common procurement red flags.

Account takeover paths (auth bypass, session theft, weak MFA)

Anything that lets an attacker become a user (or become you) deserves VIP treatment. Look for auth bypass, session fixation, token leakage, insecure password reset flows, weak MFA enforcement, and “remember me” implementations that remember too much.

Personal anecdote: A founder once told me, “But we require MFA.” Turns out, only for the web app. The API accepted bearer tokens forever. The attacker doesn’t care about your UI.
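The fix for that anecdote is to enforce at the API the same rules you enforce in the UI. A minimal Python sketch, assuming the token’s signature has already been verified and its claims decoded into a dict: `exp` and `iat` are standard JWT claims (RFC 7519), and an `amr` claim containing `"mfa"` comes from OpenID Connect and RFC 8176, but whether your identity provider actually emits it is an assumption to check. `reject_reason` and the 12-hour lifetime cap are hypothetical policy choices.

```python
import time
from typing import Optional

# Hypothetical policy: no API token may be valid for more than 12 hours.
MAX_TOKEN_LIFETIME_SECONDS = 12 * 60 * 60


def reject_reason(claims: dict, now: Optional[float] = None) -> Optional[str]:
    """Return why an already signature-verified token should be refused, or None."""
    now = time.time() if now is None else now
    exp = claims.get("exp")
    iat = claims.get("iat")
    # A token without an expiry is exactly the "bearer token forever" problem.
    if exp is None or iat is None:
        return "token must carry exp and iat"
    if now >= exp:
        return "token expired"
    if exp - iat > MAX_TOKEN_LIFETIME_SECONDS:
        return "token lifetime exceeds policy"
    # Require the MFA signal at the API too, not only in the web login flow.
    if "mfa" not in claims.get("amr", []):
        return "MFA not satisfied"
    return None
```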

Data exfiltration vectors (IDOR, broken access control, object storage leaks)

OWASP has been blunt for years: broken access control is a top-tier source of real-world incidents. In practice, this shows up as IDOR (Insecure Direct Object Reference), missing authorization checks, or sloppy object storage permissions. If the report includes “retrieve any user’s data,” you stop reading and start scheduling.
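To make IDOR concrete, here is a minimal Python sketch of the bug and its usual fix, assuming a toy in-memory store; `AuditLog`, `LOGS`, `NotFound`, and both functions are hypothetical. The vulnerable version trusts the object id from the request; the fixed version checks that the object belongs to the caller’s tenant before returning it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditLog:
    id: int
    tenant_id: str
    body: str


# Hypothetical store keyed by object id, shared across all tenants.
LOGS = {
    1: AuditLog(1, "tenant-a", "login ok"),
    2: AuditLog(2, "tenant-b", "export run"),
}


class NotFound(Exception):
    pass


def get_log_vulnerable(log_id: int, caller_tenant: str) -> AuditLog:
    # IDOR: caller_tenant is ignored, so any id from the request is served.
    return LOGS[log_id]


def get_log(log_id: int, caller_tenant: str) -> AuditLog:
    log = LOGS.get(log_id)
    # Scope the lookup to the caller's tenant. Foreign objects get the same
    # "not found" as missing ones, so the response doesn't confirm the id exists.
    if log is None or log.tenant_id != caller_tenant:
        raise NotFound(log_id)
    return log
```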

Privilege escalation (role abuse, tenant boundary breaks, admin API exposure)

In multi-tenant products, “role” is your perimeter. If a regular user can become an admin, or if an admin can cross tenants, treat it like a fire. Even if you think “no one would do that,” someone eventually will. Buyers assume it’s possible until proven otherwise.

Lateral movement (SSRF to metadata, network pivoting, internal service trust)

SSRF isn’t “just a web bug.” It can be a hallway pass to cloud metadata, internal services, and identity credentials. If your report mentions metadata endpoints or internal trust relationships, that’s a chain-starter. This is where “two lows make a critical” stops being a metaphor and becomes a meeting, especially when cloud misconfigurations and overly permissive roles turn a single request into a credential vending machine.
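One common root fix for SSRF is an explicit allowlist of the destinations a feature is supposed to reach. A minimal Python sketch, assuming a hypothetical feature that should only fetch from two partner hosts (`ALLOWED_HOSTS` and `is_fetch_allowed` are illustrative names). Anything off the list is refused, including the link-local address 169.254.169.254 that major cloud providers use for instance metadata. This only checks the URL string; a production guard also has to decide how to handle redirects and should check the address the request actually connects to.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts this feature is meant to call.
ALLOWED_HOSTS = {"api.partner.example", "images.partner.example"}


def is_fetch_allowed(url: str) -> bool:
    """Allow only HTTPS requests to explicitly listed hostnames."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # .hostname strips any userinfo and port, so "https://api.partner.example@evil.example/"
    # is judged by its real host, "evil.example".
    host = (parts.hostname or "").lower()
    # 169.254.169.254 (cloud metadata) and internal names are refused
    # simply because they are not on the list.
    return host in ALLOWED_HOSTS
```

An allowlist is preferred here over a blocklist of “bad” addresses because internal targets are hard to enumerate completely, while the legitimate destinations usually are not.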

Remote code execution (deserialization, injection, CI/CD secrets → prod)

RCE is the classic “game over,” but modern versions often arrive through pipelines: build secrets, exposed registries, CI tokens, or dependencies. If the report touches your CI/CD, treat it as both a technical and business risk: it affects every customer, not just one endpoint.

Supply chain blast radius (dependency takeover, build pipeline compromise)

You may not “own” the dependency, but you own the outcome. If the report flags weak dependency controls, unsigned artifacts, or excessive build permissions, you’re in the supply-chain neighborhood. That neighborhood has fast cars and low patience.

“One bug, many customers” multi-tenant cross-over risks

This is the big one for enterprise: any finding that crosses tenant boundaries. If a single issue can expose multiple customers, it’s not just a bug; it’s a trust-collapse scenario. Buyers will ask what you changed to prevent recurrence, not just whether you patched it.

Exploitability first: The 5 questions that decide whether to panic or plan

Is it pre-auth or post-auth? (and why pre-auth wins the queue)

Pre-auth issues widen the attacker pool. Post-auth issues can still be catastrophic, but they often require a foothold. The queue should reflect that: pre-auth production issues get the first chair and the good lighting.

Is it remote and reliable, or theoretical and fragile?

Pen test vendors sometimes include “possible” or “could.” Your job is to locate “reproducible” and “reliable.” If reproduction steps are detailed and evidence is clear, treat it as real. If the path is fragile, you can plan, but still track it. Fragile today becomes reliable tomorrow when the attacker finds a better angle.

Is production affected, or only a lab/staging artifact?

Scope matters. If the report is based on staging, treat it as an early warning system. If it’s production, treat it as a current weather report with thunder you can hear.

Is there sensitive data, regulated data, or customer secrets involved?

Even if you’re not handling regulated data, you’re handling customer trust. Exposure of API keys, access tokens, invoices, support tickets, audit logs, or configuration secrets can be enough to trigger breach notifications, contract clauses, or a procurement pause. If you want a practical baseline for containment, rotation, and the “where do secrets still leak?” scavenger hunt, keep a living playbook for startup secrets management.

Can it be chained? (the “two lows make a critical” rule)

Chaining is the attacker’s hobby. Your defense is to assume they have weekends.


Chaining often follows a predictable pattern: (1) discovery (info leak, SSRF, directory listing) → (2) access (auth weakness, token exposure, default creds) → (3) escalation (role abuse, deserialization, injection) → (4) movement (internal trust, metadata creds) → (5) impact (data exfiltration, destructive actions). When you assess “chainability,” look for findings that provide identity (tokens, creds), network reach (SSRF/internal), or authorization bypass (IDOR/ACL gaps). Those are the accelerants.
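The same pattern can be used to sort a findings list into a rough attack path. A minimal Python sketch, assuming you tag each finding with one of the five stages and with what it gives an attacker; the stage names, the `ACCELERANTS` labels, and `chain_view` are hypothetical conventions, not a standard taxonomy.

```python
from dataclasses import dataclass, field

# Stage order from the pattern above: discovery -> access -> escalation -> movement -> impact.
STAGES = ["discovery", "access", "escalation", "movement", "impact"]
# What a finding hands the attacker: identity, network reach, or authorization bypass.
ACCELERANTS = {"identity", "network_reach", "authz_bypass"}


@dataclass
class Finding:
    title: str
    stage: str                                # must be one of STAGES
    provides: set = field(default_factory=set)


def chain_view(findings):
    """Order findings by attack stage and flag the ones that act as accelerants."""
    # STAGES.index raises ValueError on a mistyped stage, which is the point:
    # an untagged finding should not silently drop out of the chain.
    ordered = sorted(findings, key=lambda f: STAGES.index(f.stage))
    return [(f.stage, f.title, bool(f.provides & ACCELERANTS)) for f in ordered]
```

Reading the output top to bottom gives the attacker’s path, and every flagged row is a candidate link between two otherwise “separate” findings.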

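- Exploitability (1–5): how reliably can it be exploited?

- Exposure (1–5): how reachable is it (pre-auth, production, internet-facing)?

- Impact (1–5): what data or actions are at risk?

Multiply the three ratings for a score out of 125 (5 × 5 × 5). For example, ratings of 5, 5, and 4 give 100 / 125.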

Neutral next action: Sort your findings by this score, then sanity-check the top 3 with your tech lead in one 15-minute call.
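Here is the scoring step as a small Python sketch. It assumes the score is the product of the three ratings, which is what a 125-point maximum implies; `Scored` and `rank` are hypothetical names. Multiplying rewards findings that are strong on all three axes, so a chainable item with one low rating may still deserve a manual bump during the sanity check.

```python
from dataclasses import dataclass


@dataclass
class Scored:
    title: str
    exploitability: int  # 1-5
    exposure: int        # 1-5
    impact: int          # 1-5

    def score(self) -> int:
        for value in (self.exploitability, self.exposure, self.impact):
            if not 1 <= value <= 5:
                raise ValueError(f"{self.title}: ratings must be 1-5, got {value}")
        # Product of the three ratings; one low rating pulls the score down a lot.
        return self.exploitability * self.exposure * self.impact


def rank(findings):
    """Highest score first, ready for the 15-minute review."""
    return sorted(findings, key=lambda f: f.score(), reverse=True)
```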

Find the attacker’s happy path: How to skim 40 pages in 7 minutes

Start at Executive Summary, then jump to “Attack Narrative” / “Methodology”

Skimming is a skill. If you read the report straight through, you’ll end up knowing the tester’s favorite toolchain and still not know whether you’re safe.

Start with: Executive Summary → Scope → Attack narrative (or methodology) → Findings list. You’re looking for the “what could happen” and “what was in scope,” not the glossary.

Personal anecdote: I once tried to “be responsible” and read a report cover-to-cover at 11:30 PM. I learned six new acronyms and missed the one sentence that said an endpoint was publicly accessible in production. The next day I felt like a clown in a blazer.

Follow the reproduction steps: you’re looking for certainty

A real finding should have reproducible steps and evidence. The more precise the steps, the more confidence you should have that the vulnerability is exploitable. If reproduction is vague, ask for clarification. “Trust me” is not a control.

Look for asset lists: what’s actually in scope and what’s missing

Scope is where pen tests quietly fail. If the report didn’t cover your mobile app, key APIs, admin panels, cloud configuration, or internal tools, it’s not “bad news,” but it is incomplete signal. Treat it like a lab result that didn’t test the thing you’re worried about.

Evidence matters: screenshots, requests, payloads, timestamps

Evidence is how you avoid argument loops. A screenshot of a privileged response, a recorded request/response pair, or an example payload does more than ten Slack messages and a spirited debate.

Red-flag wording: Phrases that mean “this is real” (even if severity is low)

“No user interaction required” / “unauthenticated attacker”

Translation: the attacker doesn’t need your customer to click anything, and they don’t need an account. That’s a wide doorway. Wide doorways get walked through.

“Bypass” / “arbitrary” / “retrieve any user’s data”

These words usually indicate impact that procurement teams understand instantly. “Arbitrary file read,” “arbitrary code execution,” “authorization bypass,” “retrieve any object” are not “maybe” words. They’re “someone will ask you about this in a spreadsheet” words.

“Default credentials” / “hardcoded secrets” / “publicly accessible”

Default creds and hardcoded secrets are how breaches become embarrassingly fast. Public exposure is how they become embarrassingly discoverable. If you see these, don’t negotiate with yourself. Rotate secrets, lock access, add monitoring, then fix root causes. If you need buyer-friendly, non-handwavy wins that reduce “ambient risk,” security headers ROI work is surprisingly effective: it’s visible, verifiable, and often bundled into procurement checklists.

Let’s be honest… if you don’t understand the sentence, it’s not “fine”

If a finding reads like noise, your job isn’t to pretend it’s harmless. Your job is to translate it. Ask your vendor: “Explain this like I’m smart, but I don’t live in your tool.” Good testers can do that in two paragraphs.

- Pre-auth + production language belongs at the top.

- “Any user’s data” is a buyer’s nightmare sentence.

- Secrets exposure demands immediate containment.

Apply in 60 seconds: Search your PDF for “unauthenticated,” “bypass,” “arbitrary,” “public,” and “token.” Make a short list.
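If the report is long, you can run that search over an extracted text copy. A minimal sketch, assuming you have already converted the PDF to plain text (for example with Poppler’s `pdftotext report.pdf report.txt`); `red_flag_lines` is a hypothetical helper. Matching is case-insensitive and substring-based, so “public” also catches “publicly” and “token” catches “tokens.”

```python
import re

RED_FLAGS = ["unauthenticated", "bypass", "arbitrary", "public", "token"]


def red_flag_lines(report_text: str, terms=RED_FLAGS):
    """Return (line number, matched terms, line text) for every line with a red flag."""
    pattern = re.compile("|".join(re.escape(t) for t in terms), re.IGNORECASE)
    hits = []
    for number, line in enumerate(report_text.splitlines(), start=1):
        found = sorted({m.group(0).lower() for m in pattern.finditer(line)})
        if found:
            hits.append((number, found, line.strip()))
    return hits
```

Usage: `red_flag_lines(open("report.txt", encoding="utf-8").read())` gives you the short list, with line numbers to jump back to in the text file.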

Who this is for / not for: Use this guide correctly

For: founders owning risk, deals, and roadmap tradeoffs

If you’re the person who feels it when a deal stalls, this guide is for you. You don’t need to become a pentester. You need to become someone who can ask sharp questions, fund the right work, and communicate clearly to buyers.

For: teams without a dedicated security lead (yet)

If you’re running lean, you can still run disciplined. You can track owners, dates, interim controls, and retest proof without hiring a full security org tomorrow. This is also where a simple set of security metrics for founders helps you talk about progress without turning the conversation into vibes.

Not for: writing your own exploit code or “testing production yourself”

Don’t. If you need validation, use safe internal test environments, feature flags, and vendor retesting. Accidental production chaos is a terrible way to learn, and an even worse way to explain yourself later.

Not for: substituting this for legal/compliance review on contracts

This is decision support, not legal advice. If your customer contract requires specific timelines, attestations, or breach notification obligations, involve the right counsel and security stakeholders. Also, if you’re negotiating language around retesting, exclusions, and who “owns” downstream impact, it helps to understand pen test limitation of liability patterns before you sign.

Common mistakes: How founders accidentally waste a pen test (and money)

Treating CVSS like truth instead of a clue

CVSS can be useful, but it can’t see your business context. It can’t feel procurement pressure. It also can’t tell whether your staging environment is a cardboard set or a clone of production. Use it as a clue, then apply your real-world constraints.

Closing findings on paper without verifying the fix

“Fixed” is not a Jira status. “Fixed” is: patch applied, tests added, access controls verified, monitoring updated, and retest evidence collected. If you can’t prove it, buyers will assume it’s aspirational. If you want a clean standard for what “done” means (with deadlines buyers recognize), align your workflow to a vulnerability remediation SLA.
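That definition of “fixed” can be enforced as a checklist rather than a status. A minimal Python sketch, assuming your tracker records one yes/no per proof item; `FixEvidence`, `missing_proof`, and `can_close` are hypothetical names, with fields mirroring the five items in the definition.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class FixEvidence:
    patch_applied: bool = False
    tests_added: bool = False
    access_controls_verified: bool = False
    monitoring_updated: bool = False
    retest_evidence_collected: bool = False


def missing_proof(evidence: FixEvidence) -> List[str]:
    """Names of the proof items still outstanding, in definition order."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def can_close(evidence: FixEvidence) -> bool:
    # A finding closes only when every proof item is present.
    return not missing_proof(evidence)
```

Printing `missing_proof(...)` next to each open finding turns “is it done?” into a list of what is left to collect.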

Personal anecdote: I watched a team mark “hardcoded secret removed” as done, then discovered the secret was still in a Docker image layer. The fix was real, but the artifact was still haunted.

Letting “accepted risk” become “forgotten risk”

Sometimes you accept risk. That’s okay. The failure mode is accepting it quietly without a compensating control or a revisit date. “Accepted” without “reviewed again on” is just procrastination with a blazer on.
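A lightweight way to keep accepted risks visible is a register that flags entries with no compensating control or a missed revisit date. A minimal Python sketch; `AcceptedRisk` and `problems` are hypothetical names, and passing `today` explicitly keeps the check easy to test.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class AcceptedRisk:
    finding: str
    compensating_control: Optional[str]
    review_on: Optional[date]


def problems(risk: AcceptedRisk, today: date) -> List[str]:
    """Reasons this accepted risk is drifting toward 'forgotten risk'."""
    issues = []
    if not risk.compensating_control:
        issues.append("no compensating control")
    if risk.review_on is None:
        issues.append("no revisit date")
    elif risk.review_on < today:
        issues.append(f"review overdue since {risk.review_on.isoformat()}")
    return issues
```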

Ignoring scope gaps (mobile apps, APIs, cloud configs, internal tools)

Pen tests are scoped. Attackers aren’t. If scope skipped key systems, don’t panic, but do update your plan: add targeted testing, scanning, or configuration reviews where needed.

Only fixing symptoms (WAF rule) while keeping the root cause

WAF rules can buy time, but they rarely solve systemic authorization or trust-boundary problems. Buyers know the difference between a bandage and a rebuild. Use interim controls, but schedule root cause work. If you’re choosing “bandage vs rebuild” under pressure, it helps to understand the tradeoffs in WAF vs RASP vs CSP for startups so you can communicate mitigations without implying the problem evaporated.

- Affected assets list (endpoints, services, repos, cloud accounts).

- Exploit preconditions (pre-auth/post-auth, roles required, network access).

- Customer impact statement (what data/actions are at risk).

- Interim mitigations you can deploy within 24 hours.

- Proof requirements (retest evidence, logs, screenshots, change records).

Neutral next action: Put these five items in your tracker so every discussion starts with shared facts.

Don’t do this: The 6 fastest ways to lose buyer trust during security review

“We’ll fix it next quarter” with no dates, owner, or interim control

Security reviewers hear this as: “We don’t run our own house.” If you need time, that’s fine. But show the plan: interim mitigation now, root fix date, retest date.

Sharing the full report without redaction or NDA boundaries

Pen test reports contain sensitive details: endpoints, payloads, screenshots, and sometimes secrets. Share carefully: buyer-safe summary first, under NDA, with redactions where appropriate. If you overshare, you can create a new risk while trying to prove you’re low risk. It’s… not ideal.

Overpromising “complete remediation” when it’s actually partial

Buyers forgive imperfect. They do not forgive misleading. If you mitigated but haven’t fully remediated, say that. Then show what mitigations are in place and when root cause work lands.

Arguing severity instead of presenting a risk-based plan

Arguing severity feels defensive. A risk-based plan feels competent. You’re not trying to “win” the report. You’re trying to win trust.

Hiding repeated findings (buyers notice patterns)

If the same class of issue appears repeatedly, acknowledge it and describe your systemic fix: secure defaults, tests, code review gates, training, or guardrails. Repetition without learning is where trust goes to die. One low-cost way to reduce repeat offenses is structured, 1-hour-a-month security training tied to real findings, not generic awareness theater.

Sending a SOC 2 report as a substitute for remediation evidence

SOC 2 and pen tests serve different purposes. Buyers often want both, and they want them in the right sequence: remediation plan + proof for the pen test findings, plus your broader control story.

Turn findings into a plan: The founder-grade remediation ladder

Step 1: Stop the bleeding (disable endpoint, rotate secrets, tighten access)

Containment is not “panic.” It’s professionalism. Disable risky endpoints, rotate exposed secrets, reduce overly broad permissions, and add rate limiting or additional verification where needed.

Personal anecdote: I’ve watched teams spend two weeks planning a “perfect” refactor while a token leak sat in plain sight. The fix was hard, but the first move was easy: rotate, revoke, and monitor. The relief was immediate.

Step 2: Patch the root cause (authorization, input handling, trust boundaries)

Most lasting fixes are boring in the best way: consistent authorization checks, explicit allowlists, clear tenant scoping, and safer parsing patterns. This is the work buyers trust because it reduces recurrence.

Step 3: Add guardrails (tests, scanners, alerting, secure defaults)

This is where you turn a fix into a capability. Add tests that fail if access control breaks. Add scanning where it makes sense. Add monitoring that catches abnormal access patterns. Security isn’t a “one sprint” event. It’s a set of rails that prevent you from accidentally driving into a lake.
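As an example of a test that fails if access control breaks, here is a minimal `unittest` sketch that checks every tenant against every object, not just one happy-path pair. `OWNERS` and `can_read` are hypothetical stand-ins; in a real suite `can_read` would call your actual authorization layer or API.

```python
import unittest

# Hypothetical fixture: object id -> owning tenant.
OWNERS = {101: "tenant-a", 102: "tenant-b", 103: "tenant-c"}
TENANTS = sorted(set(OWNERS.values()))


def can_read(caller_tenant: str, object_id: int) -> bool:
    """Stand-in for the real authorization check under test."""
    return OWNERS.get(object_id) == caller_tenant


class CrossTenantAccessTest(unittest.TestCase):
    def test_only_owner_can_read(self):
        # Walk the full tenant x object matrix so a missing check on any
        # fixture object shows up as a named subtest failure.
        for tenant in TENANTS:
            for object_id, owner in OWNERS.items():
                with self.subTest(tenant=tenant, object_id=object_id):
                    self.assertEqual(can_read(tenant, object_id), tenant == owner)

    def test_unknown_object_is_denied(self):
        self.assertFalse(can_read("tenant-a", 999))
```

Run it with `python -m unittest` alongside the rest of your suite, so a regression blocks the merge instead of showing up in the next pen test.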

Step 4: Prove it (retest, evidence pack, change log)

Proof is what closes deals. Retest results, screenshots, updated configs, and a clean change log can turn a tense security review into a calm “thanks for the update.”

- Interim controls buy time without pretending the problem vanished.

- Root cause fixes reduce future findings and buyer friction.

- Evidence packs turn “trust me” into “here it is.”

Apply in 60 seconds: For your top finding, write one sentence each for: containment, root fix, guardrail, proof.

What to ask your vendor: The email template that gets actionable answers

The goal of vendor communication is not to sound technical. It’s to extract clarity: what is affected, how real is it, how to mitigate now, and how to prove later. If you’re still tightening your procurement posture, pairing this with a vendor security questionnaire mindset helps you answer like a company that expects to be taken seriously.

“Show me the exact affected endpoints/assets”

Ask for a concrete list. Vague scope creates vague fixes, and vague fixes create vague buyer confidence (which is a polite way to say “none”).

“Can this be exploited with a normal user role?”

This question is gold because it frames attacker reality. “Normal user role” is often easier to obtain than you think (stolen credentials, self-signups, partner accounts). If “yes,” elevate priority.

“What mitigations work today while we build the real fix?”

Interim mitigations keep you safe during backlog reality. Rate limiting, feature flags, permission tightening, token rotation, and additional verification steps can reduce risk immediately.

“Which finding is most chainable, and why?”

Make the vendor do the chain thinking with you. Good vendors love this question because it lets them show judgment, not just tool output.

“What would you retest, and what evidence should we keep?”

Retesting is where deals get unstuck. Ask what evidence buyers will accept: screenshots, configs, logs, diffs, a retest letter, or all of the above.

We were two emails away from a signed enterprise contract. The buyer’s security reviewer sent a single question: “Can your customers access each other’s audit logs?” Our founder forwarded it with the kind of optimism that only exists before you read the pen test report closely. The report had a “Medium: IDOR” buried on page 27, filed under “limited impact,” because the tester only pulled one record in a staging tenant.

In production, the endpoint was the same, the authorization check was missing, and the record was not “limited impact” at all. It was an audit log. The buyer didn’t yell. They did something worse: they paused. We fixed the endpoint, added a test, wrote a buyer-safe summary, and scheduled a retest. The deal recovered. The lesson stuck: severity is a label; trust is the outcome.

| Year | Finding class | Typical remediation window | Notes |
|---|---|---|---|
| 2026 | Pre-auth production takeover / tenant break | 24–72 hours for mitigation; 7–30 days for root fix | Varies by contract; proof matters as much as speed. |
| 2026 | Auth/broken access control affecting sensitive data | 7–45 days | Interim controls can unblock a review while fixes ship. |
| 2026 | Lower-impact or non-prod issues | 30–90 days | Still track owners/dates to avoid “forgotten risk.” |
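If you want the tracker to compute due dates instead of someone eyeballing them, here is a minimal Python sketch. It assumes you treat the upper end of each range in the table as the deadline; the class keys and `due_dates` are hypothetical, and your contract terms should replace these numbers wherever they differ. The access-control and lower-impact rows have no separate mitigation window in the table, so the sketch only computes a root-fix date for them.

```python
from datetime import date, timedelta
from typing import Dict

# Upper end of each range from the table, in days (72 hours -> 3 days).
# Placeholders only: contract terms override them.
WINDOWS: Dict[str, Dict[str, int]] = {
    "pre_auth_takeover_or_tenant_break": {"mitigate": 3, "root_fix": 30},
    "access_control_sensitive_data": {"root_fix": 45},
    "lower_impact_or_non_prod": {"root_fix": 90},
}


def due_dates(finding_class: str, reported_on: date) -> Dict[str, date]:
    """Map each remediation step to its due date for one finding."""
    return {step: reported_on + timedelta(days=days)
            for step, days in WINDOWS[finding_class].items()}
```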

Neutral next action: Align your due dates to your customer’s contract expectations, then document interim mitigations immediately.

FAQ

What should founders look at first in a pen test report?

Start with scope + executive summary, then find anything pre-auth, production, auth-related, or tenant-boundary related. After that, identify chainable items and anything involving secrets or sensitive data. Your first pass is triage, not education.

Does a “passing” pen test mean we’re secure?

No. A pen test is a point-in-time assessment of a scoped target. It can be high-quality signal, but it’s not a guarantee. Security is the combination of secure design, operational controls, monitoring, and how quickly you remediate what you learn.

How do I know if a finding is exploitable or just theoretical?

Look for detailed reproduction steps and concrete evidence (requests/responses, screenshots, payloads). If the vendor can reproduce reliably, assume an attacker can too. If reproduction is vague, ask for clarification and retest criteria.

What pen test issues block enterprise deals the most?

Broken access control, tenant boundary failures, account takeover paths, secrets exposure, and any pre-auth production exploit tend to cause immediate procurement concern. Buyers usually accept “not perfect” if they see a dated plan and credible proof.

How fast should we remediate Critical/High findings?

Move fast on anything pre-auth, production, or chainable. Even if a root fix takes time, ship interim mitigations quickly (access tightening, secrets rotation, feature flags, rate limiting) and schedule a retest. Buyers often care most about “what changed this week?”

Can we share a pen test report with customers safely?

Often, yes, but carefully: under NDA, with redactions as needed, and ideally as a buyer-safe summary plus targeted evidence instead of the full exploit-heavy report. The report can contain sensitive operational details you don’t want circulating.

What’s the difference between a pen test, vulnerability scan, and red team?

A vulnerability scan is broad and automated. A pen test is targeted, manual validation with exploitation attempts in scope. A red team is typically more adversarial and objective-driven (often testing detection and response), sometimes with broader social/physical angles depending on scope.

Should we retest after fixes, and what counts as proof?

Yes, for high-impact findings, retest is your fastest trust-builder. Proof usually includes: retest confirmation, screenshots or request/response evidence, config diffs, and a short change log. Keep it buyer-readable.

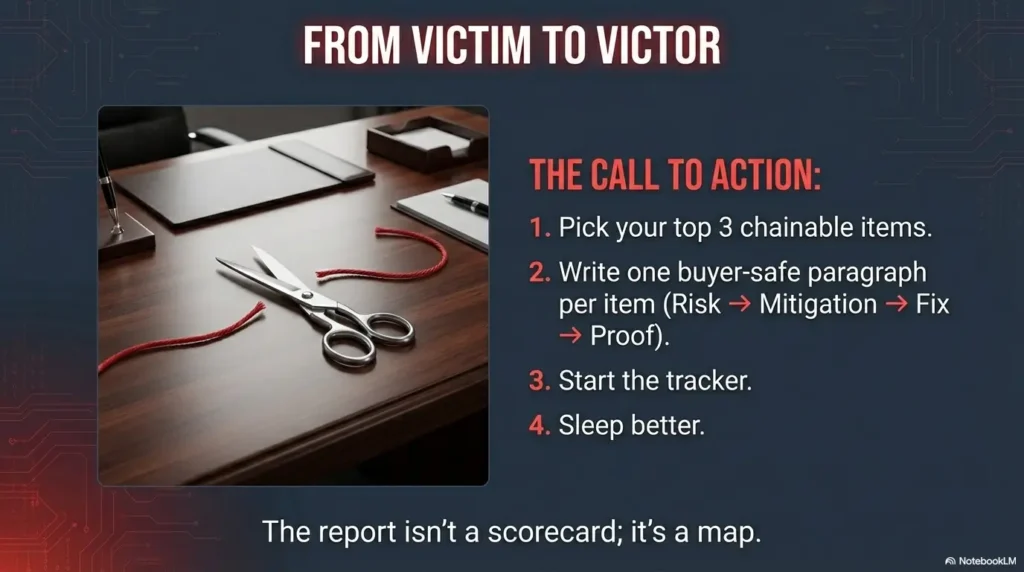

Next step: Build a 1-page “Fix + Proof” tracker today

Create a single table: Finding → Asset → Owner → Due date → Interim control → Retest status
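A spreadsheet is fine for this. If you keep findings in code or export them from another system, here is a minimal Python sketch that writes the same six columns as CSV and lists overdue items. `Row`, `to_csv`, and `overdue` are hypothetical names, and treating `"passed"` as the only closed retest status is an assumption.

```python
import csv
import io
from dataclasses import dataclass
from datetime import date
from typing import List

COLUMNS = ["Finding", "Asset", "Owner", "Due date", "Interim control", "Retest status"]


@dataclass
class Row:
    finding: str
    asset: str
    owner: str
    due: date
    interim_control: str
    retest_status: str  # hypothetical values: "not started", "scheduled", "passed"


def to_csv(rows: List[Row]) -> str:
    """Render the tracker as CSV, earliest due date first."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(COLUMNS)
    for r in sorted(rows, key=lambda row: row.due):
        writer.writerow([r.finding, r.asset, r.owner, r.due.isoformat(),
                         r.interim_control, r.retest_status])
    return buf.getvalue()


def overdue(rows: List[Row], today: date) -> List[str]:
    """Findings past their due date that have not passed retest."""
    return [r.finding for r in rows if r.due < today and r.retest_status != "passed"]
```

Opening the CSV in a spreadsheet gives you the one-page view, and `overdue(rows, date.today())` is the short list to raise first.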

This is the part that turns a scary PDF into momentum. A tracker is not busywork. It’s how you keep “accepted risk” from becoming “forgotten risk,” and how you answer buyer questions without a three-day scramble.

Personal anecdote: My favorite moment in any security review is when the buyer asks a hard question and the team responds in under 60 seconds, calmly, with dates and proof. It feels like clean music. Not because everything is perfect, but because the team is in control.

Pick the top 3 “chainable” items and schedule a retest window

Don’t retest everything. Retest what buys you the most risk reduction and the most buyer trust. If you’re actively in enterprise sales, put a retest window on the calendar as soon as fixes are staged.

Share a buyer-safe summary: what changed, when, and what’s next

Buyers don’t want your full report. They want a clear narrative: the risk, the mitigation, the root fix, and the proof. Keep it short and factual. Your confidence should come from evidence, not adjectives.

- Yes if it’s pre-auth, production-exposed, or crosses tenants.

- Yes if it affects sensitive data or privileged actions.

- Yes if it’s easily chainable with one other finding.

- Maybe if it’s post-auth but reachable by normal users.

- No (usually) if it’s non-prod only with strong separation and proof.

Neutral next action: Mark each top finding as Yes/Maybe/No and assign an owner before your next sales pipeline review.

Closing the loop from the hook: That report isn’t a scorecard. It’s a map of how your business can be hurt. When you read it as a chain, prioritize by exploitability and exposure, and pair fixes with proof, you stop being “the vendor who says it’s fine” and become “the vendor who runs a tight ship.” That difference shows up in fewer escalations, faster security reviews, and fewer 2 AM surprises.

Your next 15-minute step: Open the report, pick three chainable items, create the tracker table, and write one buyer-safe paragraph per item: risk → mitigation now → root fix date → retest plan. You’ll feel the fog lift.

Last reviewed: 2026-02-18