Mastering Enumeration: From Clues to Credibility

A weak Kioptrix practice report rarely fails because the learner missed the open ports. It fails because the enumeration section reads like a shoebox full of clues: banners, screenshots, scan results, and conclusions all rattling together.

That is where most beginners lose the room. They did the work, but the writing makes the evidence feel louder, shakier, and less reproducible than it really is. Instead of showing what the host returned, they slide too quickly into what they think it meant. Keep doing that, and your report starts leaking credibility long before exploitation or validation even enter the page.

This post helps you document enumeration clearly by separating observed evidence from inference, tightening service-by-service structure, and using cleaner reporting language, all built around a four-part frame:

- Observation

- Validation

- Interpretation

- Limitation

Because clear notes travel farther. Because screenshots are not arguments. Because “likely” is often stronger than pretending you proved too much. The difference between a messy lab report and a trustworthy one is often just a few better sentences.

Why Enumeration Notes Fail Before the Exploit Ever Starts

When “I scanned the box” tells the reader almost nothing

Many beginners write enumeration as if the reader were standing beside them the whole time. The report says, “I ran a scan and found services,” and then leaps directly into a conclusion. But the reader was not there. The reader did not watch the terminal blink. The reader needs a path, not a mood.

A good enumeration section answers a humble question: what did the target reveal, under what conditions, and why did that move matter? That is the difference between a report and a memory. One can be checked. The other can only be trusted on personality, and personality is a terrible evidence format.

Why messy recon notes weaken even good technical work

I have seen perfectly decent lab work wear a fake mustache because the write-up was chaotic. The learner noticed the right things, but wrote them in a way that made every clue look louder than it was. Ports became “major attack vectors.” Banners became “confirmed versions.” A directory listing became “proof of compromise risk.” The result was not stronger. It was shakier.

OWASP’s Web Security Testing Guide frames testing as a methodology and describes web security testing as collecting evidence of deficiencies in controls, not as theater or guesswork. That is a useful north star for lab reporting too.

The real goal: make your path reproducible, not theatrical

In a practice report, the best compliment is not “dramatic.” It is “easy to follow.” Reproducibility is the quiet currency of technical credibility. If someone else can read your enumeration section and understand the sequence of observations that led to the next action, your report is doing its job.

- Write the host response before the interpretation

- Record the trigger for each next step

- Prefer calm wording over inflated certainty

Apply in 60 seconds: Replace one vague sentence like “I scanned the box” with one sentence naming scope, one sentence naming observed services, and one sentence naming the next action.

Start With Evidence: What to Record Before You Interpret Anything

Access conditions, host scope, and test context

Before you document a single port, record the frame. What host were you testing? Was the target a single intentionally vulnerable VM in an authorized local lab? What network path existed between your attacking machine and the target? Was name resolution involved, or only direct IP access? These details feel plain, but plain is where good reports live.

A short environment statement often does more work than a dramatic screenshot. Something as simple as “Authorized practice lab, single target reachable on local private network, enumeration performed from Kali Linux VM” keeps the reader grounded. It also prevents later confusion when your report mentions ports or services that only made sense in that lab context. If your setup keeps wobbling before you even reach the evidence, a Kioptrix Kali setup checklist can help stabilize the basics before the write-up begins.

Open ports, service banners, and response behavior as raw facts

Raw facts are the stones of the path. Open TCP ports, banner text, HTTP response headers, page titles, anonymous login behavior, directory listings, redirects, error messages, SSL certificate details, SMB share names, and visible protocol negotiation all belong here. Not because every fact is equally important, but because facts arrive before interpretation.

Notice the rhythm: port 80 responded, HTTP title displayed, server header returned, directory enumeration produced these paths, one path changed the next decision. That is a sequence. Sequences feel small. They are also how trust is built. If you want to see that rhythm in a narrower web context, compare your notes against a cleaner Kioptrix HTTP enumeration flow.

Why timestamps, tool context, and command purpose matter

You do not need to dump every command like confetti on a windy day. But you do need enough tool context that the reader understands what kind of question you asked the target. “A TCP scan identified these ports” is helpful. “A directory enumeration pass against the web root produced these discoverable paths” is helpful. “I used a thing and stuff happened” belongs in the graveyard of drafts.

NIST’s SP 800-216 emphasizes formalizing how security reports are accepted, assessed, managed, and communicated. Even though that document targets vulnerability disclosure programs rather than student lab reports, its spirit is useful here: disciplined intake, disciplined handling, disciplined language.

Eligibility checklist: Is this enumeration note ready to keep?

- Yes / No: Does it name the host, service, or path involved?

- Yes / No: Does it record what the target actually returned?

- Yes / No: Does it avoid claiming a vulnerability from a single hint?

- Yes / No: Does it explain why the note mattered for the next step?

- Yes / No: Could another learner reproduce the reasoning from your text?

Neutral next action: If you answered “No” twice or more, rewrite the note before it graduates into the final report.
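If you keep notes in a script or structured file, the two-“No” threshold is simple enough to check automatically. A minimal Python sketch, assuming each answer is stored as a boolean under a short label; the labels and function names are hypothetical, chosen only to mirror the five questions above:

```python
# Hypothetical short labels for the five eligibility questions.
CHECKLIST = (
    "names_target",        # Names the host, service, or path involved
    "records_response",    # Records what the target actually returned
    "avoids_single_hint",  # Avoids claiming a vulnerability from a single hint
    "explains_next_step",  # Explains why the note mattered for the next step
    "reproducible",        # Another learner could reproduce the reasoning
)


def count_no_answers(answers: dict[str, bool]) -> int:
    """Count "No" answers; an unanswered question is treated as "No"."""
    return sum(1 for question in CHECKLIST if not answers.get(question, False))


def needs_rewrite(answers: dict[str, bool]) -> bool:
    """Two or more "No" answers means the note needs a rewrite."""
    return count_no_answers(answers) >= 2


note = {
    "names_target": True,
    "records_response": True,
    "avoids_single_hint": False,
    "explains_next_step": False,
    "reproducible": True,
}
print(needs_rewrite(note))  # True: two "No" answers
```

Treating a skipped question as “No” is a deliberate choice here: a question you never answered is not evidence that the note passes.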

Separate Facts From Guesses Before They Get Married

Observed vs inferred: the line that keeps your report honest

This is the hinge of the whole article. An observed fact is something the target actually returned. An inference is what you think that fact suggests. Both matter. But once they blur together, your report begins to wobble like a table with one philosophical leg.

For example:

- Observed: Port 80 returned an Apache-style page title and a server header.

- Inferred: The host may be running Apache, but version certainty requires caution because headers can be misleading or altered.

That is better than writing “Apache confirmed” when all you really saw was a header. Restraint is not weakness. It is technical hygiene. This is exactly where many writers trip over banner grabbing mistakes and end up giving a flimsy clue more authority than it earned.

How to phrase uncertainty without sounding weak

Beginners often fear words like “suggests,” “appears,” or “likely,” as if caution makes them sound less competent. In practice, the opposite happens. Controlled uncertainty makes you sound like someone who understands evidence boundaries. The weak report is not the one that says “likely.” The weak report is the one that says “confirmed” three paragraphs before confirmation exists.

Useful phrases include:

- “The response suggests…”

- “This behavior is consistent with…”

- “Under current lab conditions, this appears to…”

- “Enumeration alone does not confirm…”

Which findings deserve “confirmed,” “likely,” or “unverified”

A simple confidence label at the end of each meaningful mini-finding can clean up the entire section.

Confirmed works when the evidence is direct and reproducible under your current conditions. Likely works when multiple clues point the same way but at least one leap remains. Unverified works when a clue is interesting but too thin to support the headline your inner dramatist desperately wants.

I still remember reading an early student report that called a generic service banner “critical exposure.” It had the emotional energy of a thunderstorm and the evidence density of cotton candy. A confidence label would have saved it.

Show me the nerdy details

Confidence labels do not replace evidence. They make evidence easier to sort. Use them after the mini-finding, not before. Example: “HTTP server header exposed product family information that informed later directory enumeration. Confidence: Likely.” This preserves the evidence chain and avoids turning the label into a decorative badge.
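If your notes live in a script or structured file, a fixed label set keeps the labels from drifting into decorative badges. A minimal Python sketch, assuming findings are plain strings; `Confidence`, `suggest_confidence`, and the “two agreeing clues” threshold are illustrative assumptions that loosely encode the three definitions above, not a standard:

```python
from enum import Enum


class Confidence(Enum):
    CONFIRMED = "Confirmed"    # direct and reproducible under current conditions
    LIKELY = "Likely"          # several clues agree, but at least one leap remains
    UNVERIFIED = "Unverified"  # interesting, but too thin for a headline


def suggest_confidence(direct_and_reproducible: bool, agreeing_clues: int) -> Confidence:
    """Rough heuristic for the three definitions; the writer still decides."""
    if direct_and_reproducible:
        return Confidence.CONFIRMED
    if agreeing_clues >= 2:
        return Confidence.LIKELY
    return Confidence.UNVERIFIED


def label_finding(finding: str, confidence: Confidence) -> str:
    """Place the label after the mini-finding, never before it."""
    sentence = finding.strip()
    if not sentence.endswith("."):
        sentence += "."
    return f"{sentence} Confidence: {confidence.value}."


print(label_finding(
    "HTTP server header exposed product family information that "
    "informed later directory enumeration",
    suggest_confidence(direct_and_reproducible=False, agreeing_clues=2),
))
# HTTP server header exposed product family information that informed later directory enumeration. Confidence: Likely.
```

The heuristic only proposes a label. A single strong clue can still deserve “Likely,” and two weak ones can still deserve “Unverified.”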

Port by Port, Story by Story: Documenting Enumeration So It Reads Cleanly

HTTP enumeration: titles, headers, directories, and visible clues

For web services, your report should read like a tidy inspection rather than a grocery receipt. Start with the reachable service and visible behavior. Name the port. Name the title. Name notable headers. Name the immediate content impression. Then document the next checks that had a reason to exist, such as directory discovery, robots.txt review, default pages, form presence, or error behavior.

Bad pattern: “Visited website and found interesting stuff.”

Better pattern: “Port 80 returned a reachable HTTP service with a default-looking page title. Response headers exposed server-family clues. Directory discovery was then used to test for accessible paths that might clarify application structure.” If you are deciding how to describe that directory step cleanly, the contrast between Dirb and Gobuster can also sharpen how you explain what kind of question the tool was actually asking.

FTP, SSH, and SMB: what belongs in the report and what does not

Service-by-service reporting should feel modular. FTP gets login behavior, anonymous access status, banner text, directory visibility, and whether access changed the next move. SSH gets port status, banner or protocol cues, and whether the service was simply noted for later credential relevance. SMB gets share enumeration behavior, accessible resources, naming clues, and any authentication boundary that matters.

What does not belong? Inflated speculation. A visible SMB share name is not a vulnerability by itself. An SSH banner is not a full story. A service existing is often just that: a service existing, minding its own business, not auditioning for a dramatic finding title. The same caution helps when you are working through SMB listing behavior without actual access, where the boundary between observable response and imagined capability can get slippery fast.

Service-by-service structure that helps readers follow the chain

A practical structure looks like this:

- Service identified

- Raw behavior observed

- Follow-up validation performed

- Why that mattered

- Confidence label

If you repeat that rhythm across each relevant service, your report gains a quiet symmetry. Readers relax when structure is consistent. Reviewers do too.

Decision card: Host-by-host vs service-by-service

Choose host-by-host when:

- You have one target or a very small lab scope

- The report follows one machine from recon to conclusion

- You want chronology to feel obvious

Choose service-by-service when:

- One host exposes several meaningful services

- You want the evidence chain for each service isolated

- The report risks becoming a tangled braid

Neutral next action: Pick one structure and stay faithful to it for the whole enumeration section.

Don’t Skip the “Why”: Show Why Each Enumeration Result Mattered

Which observation triggered the next action

A report becomes readable the moment it starts answering “why next?” If a web header suggested a product family, say that it justified content discovery or version-sensitive checking. If anonymous FTP access succeeded, say that it justified reviewing available files. If an HTTP page exposed naming clues, say that those clues informed directory guesses or username hypotheses.

The point is not to narrate every thought that passed through your head at 1:13 a.m. with three tabs open and one moral crisis about syntax. The point is to show the specific observation that caused the next move.

How to explain pivots without bloating the write-up

You do not need paragraph-long transitions. Often one sentence is enough: “Because the HTTP response exposed a default page and recognizable server-family clues, directory enumeration was performed to identify accessible content paths.” That sentence carries evidence, interpretation, and action without turning into soup.

Readers should never have to guess why you pivoted. When they do, the report starts to feel like a magic trick. Technical writing can be many things. It should not be a rabbit in a hat.

Why sequence matters more than screenshot volume

The order of observations matters because enumeration is cumulative. One clue by itself may be thin. Two or three related clues, presented in the right sequence, become a useful rationale. Sequence also protects you from overclaiming because it forces you to show how confidence increased, instead of pretending it appeared all at once like a thunderbolt from a banner string. If you already keep a structured Kioptrix recon log template, this is the section where those quieter notes start paying rent.

- Name the trigger

- Name the follow-up

- Name the limit

Apply in 60 seconds: Add the phrase “This led to…” after each major observation, then trim until only the real pivots remain.

Let’s Be Honest: Screenshots Are Not a Substitute for Clear Writing

What a screenshot proves and what it does not

Screenshots are useful. They are also frequently overpromoted. A screenshot proves that, at a specific moment, on a specific system, the displayed content appeared on your screen. It does not automatically prove your interpretation of that content. A screenshot of a banner proves the banner existed in the captured session. It does not, by itself, prove that the software version the banner reports is accurate.

This is where many reports tip into accidental exaggeration. The image is real, so the writer begins to treat every conclusion around it as equally real. That is a very human mistake. It is also avoidable.

How to caption images so they support, not confuse, the claim

Your caption should do three jobs: state what the image shows, state the condition under which it was captured, and state the boundary of what it confirms. Example: “HTTP response from target web service during authorized local-lab enumeration, showing reachable page title and server header. The image supports service identification cues but does not independently confirm precise software version.”

That kind of caption is not glamorous. Neither is a seatbelt. Both improve outcomes. If you want a companion habit for keeping image evidence tidy, a consistent screenshot naming pattern quietly reduces chaos later.
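The three caption jobs described above can be enforced mechanically, so a caption that skips its boundary never reaches the report. A minimal Python sketch; `build_caption` and its parameter names are hypothetical, and the sentence template is only one reasonable way to join the three parts:

```python
def build_caption(shows: str, condition: str, boundary: str) -> str:
    """Join the three caption jobs: what the image shows, the capture
    condition, and the limit of what the image confirms."""
    parts = {"shows": shows, "condition": condition, "boundary": boundary}
    missing = [name for name, text in parts.items() if not text.strip()]
    if missing:
        # Refuse to build a caption that skips one of the three jobs.
        raise ValueError(f"caption is missing: {', '.join(missing)}")
    return f"{shows.strip()}, captured {condition.strip()}. {boundary.strip()}"


print(build_caption(
    shows="HTTP response from target web service showing reachable page title and server header",
    condition="during authorized local-lab enumeration",
    boundary="The image supports service identification cues but does not "
             "independently confirm precise software version.",
))
```

Raising an error on an empty part is the point of the sketch: the boundary sentence is the job writers skip most often.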

When one screenshot is enough and when text is better

If three screenshots all prove the same small point, use one. If the key issue is the logic of a pivot, text is often better. Images are strongest when they anchor an important observable state. Text is strongest when it explains why the state mattered. Together, they behave beautifully. Separately, they can become a traffic jam.

In one revision, the enumeration section leaned on nine screenshots. We cut them to two, added three sentences explaining which discovered paths changed the next action, and suddenly the section made sense. Nothing magical had happened. The evidence was already there. The report had simply stopped mistaking volume for clarity. That is one of the strange little mercies of technical writing: often, the fix is not “more.” It is “more honest about what each piece is doing.”

Common Mistakes That Make Enumeration Look Sloppy

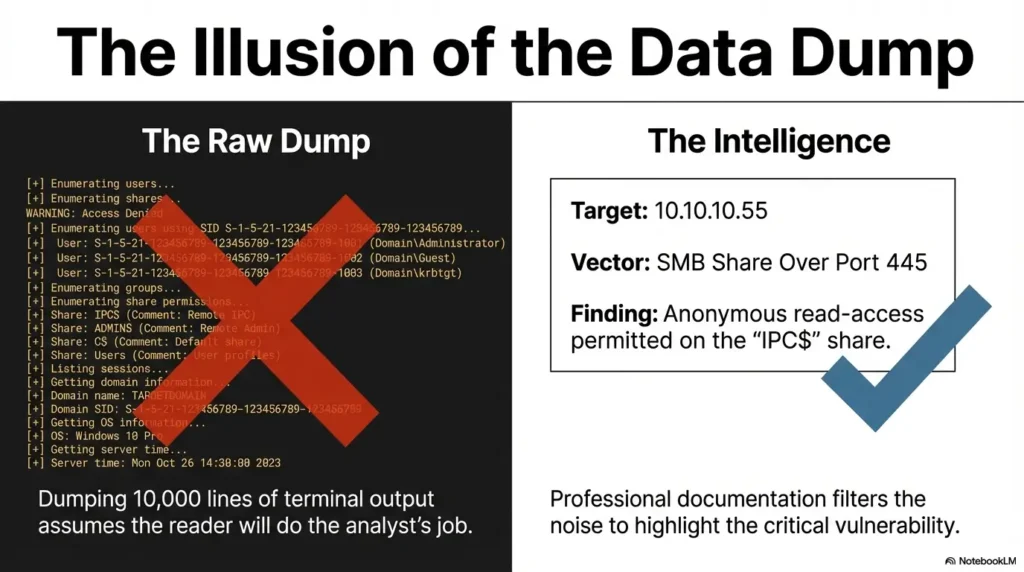

Mixing raw output, conclusions, and assumptions in one paragraph

This is the classic mud puddle. A writer pastes raw output, inserts a strong conclusion, adds an assumption, and then leaves the reader to separate them like someone sorting lentils from gravel. Do not make the reader do your housekeeping.

Instead, keep the paragraph clean. One sentence for the observation. One sentence for the validation or follow-up. One sentence for the interpretation. One sentence for the limitation. Suddenly the paragraph has bones.

Writing tool-centered notes instead of evidence-centered notes

Reports are not product demos for your command history. The tool matters only to the extent that it explains what kind of question you asked the target. “I ran gobuster” is less useful than “Directory enumeration against the web root discovered accessible paths that clarified application structure.” Put the evidence in the front seat. Let the tool ride quietly in the back.

Treating banners like proof of version and vulnerability

This mistake is so common it practically has a loyalty program. A banner suggests. It can inform. It can point. It does not, by itself, complete the story. Headers and banners can be incomplete, customized, suppressed, misleading, or simply too thin to support a version-specific claim. That is why broad notes about service detection false positives are often more valuable than another burst of certainty.

Dropping findings into the report without explaining relevance

An open port with no narrative role is just a fact in a hallway. If it mattered, say why. If it did not matter, keep it brief. Not every piece of enumeration deserves a miniature opera. Some items are supporting cast. Let them be supporting cast.

Mini calculator: How noisy is your paragraph?

Input 1: Count how many times you used words like “confirmed,” “critical,” or “vulnerable.”

Input 2: Count how many direct observations appear in that same paragraph.

Output: If certainty words outnumber direct observations, the paragraph is probably overselling the evidence.

Neutral next action: Replace one certainty word with either a direct observation or a limitation sentence.
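Input 1 is easy to automate; Input 2 still needs a human reader. A minimal Python sketch, assuming the three example words above are your whole certainty list and that you count direct observations yourself; the function names are hypothetical:

```python
import re

# Certainty words from the calculator; extend the tuple to suit your drafts.
CERTAINTY_WORDS = ("confirmed", "critical", "vulnerable")
_PATTERN = re.compile(r"\b(?:" + "|".join(CERTAINTY_WORDS) + r")\b", re.IGNORECASE)


def count_certainty_words(paragraph: str) -> int:
    """Input 1: occurrences of certainty words (whole words, any case)."""
    return len(_PATTERN.findall(paragraph))


def is_overselling(paragraph: str, direct_observations: int) -> bool:
    """Output: True when certainty words outnumber direct observations.

    direct_observations is Input 2, counted by hand, because deciding
    what counts as a direct observation needs a human reader.
    """
    return count_certainty_words(paragraph) > direct_observations


draft = ("Port 80 is confirmed vulnerable. Apache confirmed. "
         "This is a critical finding.")
print(count_certainty_words(draft))                  # 4
print(is_overselling(draft, direct_observations=1))  # True
```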

Don’t Do This: The Small Reporting Habits That Inflate Weak Claims

Calling something “critical” before scope and impact exist

Severity language should arrive late and carefully, not sprint into the room wearing a cape. In a training lab, especially during enumeration, you usually do not yet have enough scope or impact context to label something “critical.” Use the evidence you have. Save the thunder for when the clouds are actually there.

Copy-pasting command output with no interpretation layer

Raw output is not self-explanatory to every reader. Even technically fluent readers benefit from a sentence that says what mattered in that output. Think of your job as translation, not decoration. A report should not force the reader to do line-by-line archaeology unless the output itself is the central evidence artifact.

Turning training-lab findings into production-style alarm bells

Authorized practice labs are valuable precisely because they let learners observe and document vulnerable behavior safely. But the tone should remain anchored to that context. Do not write as if your Kioptrix VM is a national emergency in loafers. A calm lab frame protects both ethics and accuracy.

CISA’s vulnerability disclosure policy materials emphasize clear reporting methods, acknowledgement expectations, and defined rules of engagement. That same discipline is worth borrowing for student reports: clear scope, clear boundaries, clear language. Readers who want the broader frame can also compare this habit to a more formal vulnerability disclosure policy mindset, where boundaries matter as much as findings.

Show me the nerdy details

One practical test for inflated language is substitution. Replace the product name or service with a neutral phrase like “the observed service” and read the sentence again. If the sentence still sounds like a headline from a tabloid, your wording is probably too strong for the evidence currently shown.
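The substitution itself is mechanical enough to sketch; the judgment afterward is not. A minimal Python version, assuming you supply the list of product or service names yourself; `neutralize` is a hypothetical helper, and deciding whether the result still reads like a tabloid headline remains your call:

```python
import re


def neutralize(sentence: str, names: list[str]) -> str:
    """Swap every listed product or service name for a neutral phrase."""
    if not names:
        return sentence
    # Longest names first, so "SMBv1" is not half-matched by "SMB".
    ordered = sorted(names, key=len, reverse=True)
    # One alternation pass, so replaced text is never matched a second time.
    pattern = re.compile("|".join(re.escape(n) for n in ordered), re.IGNORECASE)
    result = pattern.sub("the observed service", sentence)
    # Re-capitalize in case the swap happened at the start of the sentence.
    return result[:1].upper() + result[1:]


print(neutralize("Apache is critically exposed and confirmed vulnerable.", ["Apache"]))
# The observed service is critically exposed and confirmed vulnerable.
```

Read the neutralized sentence aloud. If “the observed service is critically exposed” still sounds like a verdict, the wording needs the evidence it does not yet have.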

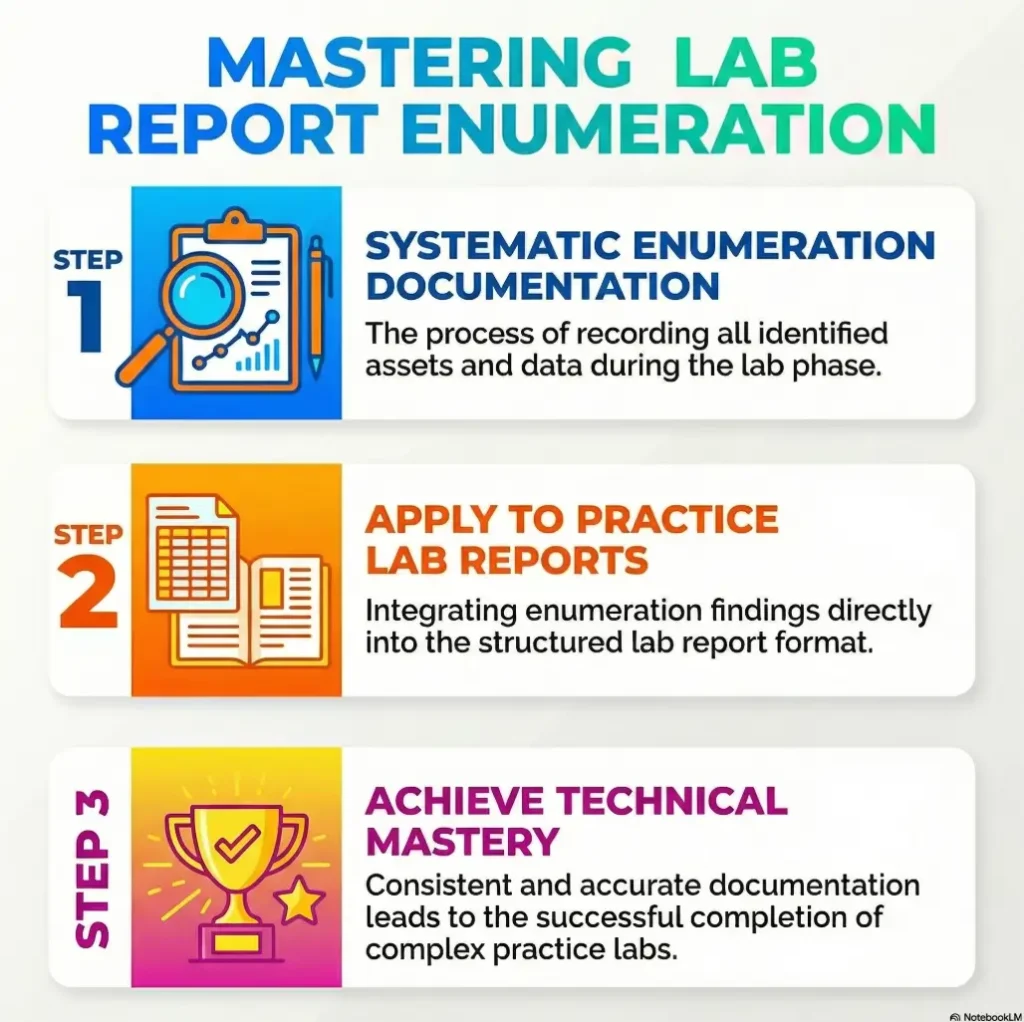

Use a Repeatable Frame: Observation, Validation, Interpretation, Limitation

Observation: what the target actually returned

Begin with the artifact itself. “Port 80 responded to HTTP requests and returned a default-looking page with a visible title and server header.” Clean. Grounded. Reproducible.

Validation: what you checked next to reduce guesswork

Then show the next check. “Directory enumeration was performed against the web root to identify accessible paths that might clarify application structure.” This is where you reduce the gap between clue and conclusion.

Interpretation: what the evidence suggests under current conditions

Now interpret, but with discipline. “The combination of reachable content, recognizable headers, and discovered paths suggested a web application surface worth deeper review.” Notice what this does not do. It does not overclaim a precise vulnerability from a thin trail. For a fuller model of this restraint in practice, a broader technical write-up structure can help you keep evidence and interpretation in separate lanes.

Limitation: what you still cannot prove from enumeration alone

Finally, name the boundary. “Enumeration alone did not confirm exact software version or exploitability.” This one sentence can save a report from becoming overconfident. It also signals maturity, which is a lovely trait in both humans and paragraphs.

- Observation anchors the claim

- Validation shows the reasoning step

- Limitation prevents accidental exaggeration

Apply in 60 seconds: Rewrite one noisy paragraph into four sentences using observation, validation, interpretation, and limitation in that order.

Infographic: The Clean Enumeration Flow

Observation: Record the host response, port, path, banner, title, or behavior.

Validation: Run the next check that reduces ambiguity.

Interpretation: State what the combined evidence suggests under current conditions.

Limitation: Say what enumeration alone still cannot prove.

Use: one mini-block per meaningful service. Avoid: jumping straight from banner to verdict.

Here’s What No One Tells You: Clean Enumeration Writing Saves Time Later

Better enumeration notes make exploitation sections easier to write

When enumeration is clean, later sections stop feeling like patchwork. You do not need to re-remember why you checked a path, chased a share, or revisited a service. The logic is already on the page. Good enumeration notes are future-you’s best apology.

Cleaner logic reduces contradiction in findings and evidence

Contradictions often appear because early notes were too vague. A service was “confirmed” in one paragraph and “appeared to be” in another. A path was “interesting” with no explanation, then later used as if its importance had been obvious all along. Clean early writing lowers this friction.

Why disciplined recon writing improves credibility for beginners

Beginners sometimes think credibility comes from sounding more advanced. Usually it comes from sounding more careful. When you write with clear boundaries, reviewers trust you more, not less. That trust is precious. It is also earned line by line, not through dramatic adjectives with their hair on fire. For that reason alone, it helps to read this article beside broader Kioptrix report writing tips rather than treating enumeration as a separate planet.

Who This Is For / Not For

Who this is for

- Students documenting Kioptrix or similar authorized practice labs

- Beginners building report-writing discipline for security coursework

- Entry-level practitioners who need clearer evidence chains in technical write-ups

Who this is not for

- Anyone seeking guidance for unauthorized targeting or real-world intrusion

- Readers looking for exploit walkthroughs rather than documentation method

- Reports that need a full enterprise risk assessment rather than practice-lab reporting discipline

This distinction matters. The purpose here is better evidence writing in authorized training environments. Not live-target tactics. Not operational tradecraft. Just the often-neglected art of making a technical path readable and honest.

A Strong Enumeration Section Template You Can Reuse

Short environment statement

Example: “Enumeration was performed against a single authorized practice-lab host on a local private network from a Kali Linux VM. The purpose of this phase was to identify reachable services and observable behaviors that could guide further validation.”

Host-by-host or service-by-service evidence blocks

Example block:

Service: HTTP on port 80

Observation: The service returned a reachable page with a visible title and server-family header.

Validation: Directory enumeration identified accessible paths relevant to application structure.

Interpretation: The combined responses suggested a meaningful web application surface for further review.

Limitation: Enumeration alone did not confirm exact version or exploitability.

Confidence: Likely
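If you draft notes in code or export them from a structured file, a fixed record type makes the block order hard to break. A minimal Python sketch; `EvidenceBlock`, its field names, and the `render` method are hypothetical and simply mirror the template labels above:

```python
from dataclasses import dataclass

ALLOWED_CONFIDENCE = ("Confirmed", "Likely", "Unverified")


@dataclass
class EvidenceBlock:
    service: str
    observation: str
    validation: str
    interpretation: str
    limitation: str
    confidence: str

    def __post_init__(self) -> None:
        # Reject labels outside the three the report uses.
        if self.confidence not in ALLOWED_CONFIDENCE:
            raise ValueError(f"confidence must be one of {ALLOWED_CONFIDENCE}")

    def render(self) -> str:
        """Emit the fields in the fixed order used by the template."""
        return "\n\n".join([
            f"Service: {self.service}",
            f"Observation: {self.observation}",
            f"Validation: {self.validation}",
            f"Interpretation: {self.interpretation}",
            f"Limitation: {self.limitation}",
            f"Confidence: {self.confidence}",
        ])


block = EvidenceBlock(
    service="HTTP on port 80",
    observation="The service returned a reachable page with a visible title and server-family header.",
    validation="Directory enumeration identified accessible paths relevant to application structure.",
    interpretation="The combined responses suggested a meaningful web application surface for further review.",
    limitation="Enumeration alone did not confirm exact version or exploitability.",
    confidence="Likely",
)
print(block.render())
```

Because every field is required, a block with no limitation sentence fails at construction time instead of slipping quietly into the final report.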

Claim-proof-limit wording for each meaningful observation

If you prefer an even tighter rhythm, use a compact claim-proof-limit format:

- Claim: What you are saying

- Proof: What the host actually returned

- Limit: What remains unproven

Confidence label at the end of each mini-finding

End the block with one of three labels: Confirmed, Likely, or Unverified. That small habit keeps the report from drifting into accidental overstatement. If you want a fuller model for stitching those blocks into a finished deliverable, a complete Kioptrix lab report can serve as a useful comparison point.

Quote-prep list for your final cleanup pass

- The exact host or service being described

- The observable return that supports the sentence

- The next validation step you took

- The limitation you still need to name

- The confidence label that fits the evidence

Neutral next action: Run this list against each major mini-finding before you submit the report.

FAQ

How detailed should enumeration be in a Kioptrix practice report?

Detailed enough that a reader can follow the evidence chain without seeing your terminal history. Include the meaningful observations, the validation steps they triggered, and the limits of what you can conclude from enumeration alone. Do not include every command unless the command itself matters to understanding the result.

Should I include every command I ran or only the important ones?

Only the important ones, or at least only the ones that clarify what kind of question you asked the target. A report is not a full shell transcript. It is a structured explanation of what you observed and why it mattered.

How do I document a banner without overclaiming the version?

Write the banner as an observation, then explicitly state that the banner suggests a product family or possible version clue but does not independently confirm precise versioning. That sentence alone will make your report stronger.

What is the difference between observed evidence and inferred evidence?

Observed evidence is what the target directly returned, such as a port state, banner, page title, or error message. Inferred evidence is the conclusion or hypothesis you draw from those returns. Good reports separate the two.

Do screenshots matter more than written explanation?

No. Screenshots support evidence, but they do not replace explanation. The image shows a state. Your text explains why that state mattered and what it does not prove.

How should I organize enumeration for multiple services on one host?

Choose either host-by-host or service-by-service and stick with it. If the host exposes several meaningful services, service-by-service often stays cleaner. The real win is consistency.

Is it okay to mention likely vulnerabilities during enumeration?

Yes, but keep the wording restrained. Use language like “suggests,” “consistent with,” or “worth further validation,” and name the limitation. Enumeration is usually the place for hypotheses, not final verdicts.

What should I do when enumeration results are inconclusive?

Say so directly. “Under current conditions, the result remained inconclusive” is a respectable sentence. Then document the next validation you attempted or the reason the uncertainty remained.

Next Step

Take one prior Kioptrix write-up and rewrite the enumeration section using a four-part structure: observation, validation, interpretation, limitation. That single revision usually reveals where the report was drifting from evidence into assumption.

That is the curiosity loop from the beginning, now closed without fireworks. The issue was never that your notes were too simple. It was that they were too blended. In the next 15 minutes, choose one old paragraph and rebuild it in the four-part frame. Then add a confidence label. You will usually see the weak joints immediately: a claim with no observation, a banner doing too much work, a screenshot standing in for explanation, or a conclusion that arrived three stops too early. That tiny rewrite is often the moment a beginner report starts sounding like it can breathe.

Last reviewed: 2026-03.
